Today the world of open science received its greatest asset in the form of the Summit supercomputer at Oak Ridge National Laboratory (ORNL). This represents an historic milestone because it is the world’s first supercomputer fusing high performance, data-intensive, and AI computing into one system. Summit is capable of delivering a peak 200 petaflops, ten times faster than its Titan predecessor, the first GPU-accelerated system that started it all at ORNL.

Today the world of open science received its greatest asset in the form of the Summit supercomputer at Oak Ridge National Laboratory (ORNL). This represents an historic milestone because it is the world’s first supercomputer fusing high performance, data-intensive, and AI computing into one system. Summit is capable of delivering a peak 200 petaflops, ten times faster than its Titan predecessor, the first GPU-accelerated system that started it all at ORNL.

Summit brings together several key NVIDIA technologies, including the Volta GPU and the high-speed NVLink interconnect. In addition, Summit manages substantial boosts in performance with only a modest increase in power consumption. Let’s examine how Summit came to become the pinnacle of GPU computing.

A Brief History of Accelerated Supercomputing

Every supercomputer begins with listening to the scientists about what complex problems they want to solve. Researchers outgrow these computers on average, every 4-5 years. They create ever more complex simulations by using or generating even larger datasets with each generation. Summit is replacing another GPU based supercomputer at ORNL named Titan. Each resulted from the needs of scientists for more computational power beyond Moore’s Law. Moore’s Law states that the number of transistors in a given area doubles every two years. But what does Moore’s law have to do with Summit or Titan? Let’s take a bit of a history detour to find out.

One way to make a processor compute faster is to add more transistors. More transistors usually means more computational horsepower and, therefore, more math performed. But there are only so many transistors you can squeeze into a given area; to squeeze more you need to shrink them down ever smaller. Today’s processors are based on 12nm technology, meaning the feature size, or the ways they can make tiny transistors is approximately 12nm – roughly 20 silicon atoms in a row. So, what happens when your transistor size becomes so small that they’re no bigger than a few atoms?

This brings us to Dennard scaling. Transistors require power to run. As they get smaller, you should be able to use the same amount of power that you did 24 months prior on twice as many transistors. Because they are closer to one another you can run them faster. If you’re old enough to remember buying computers in the 1990s, you’ll remember how computers were advertised by how fast their CPUs ran. 500MHz, 1GHz, 2GHz… those were the days where you could speed up your application just by buying a new computer! But at a higher clock frequency, current leakages within junctions turns into heat. Soon chip makers found themselves unable to increase clock frequencies beyond roughly 4GHz because temperatures inside the chips neared that of nuclear reactors. That’s unnecessarily hot and also consumes far too much power.

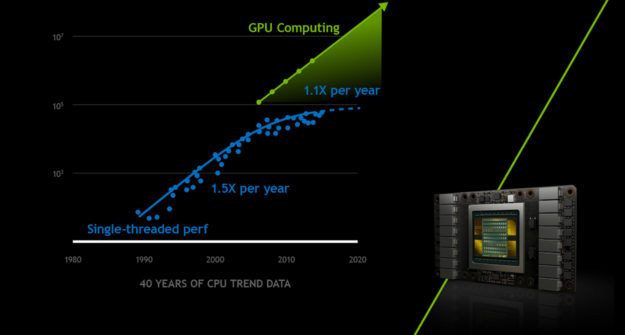

If we can’t cram more transistors into an area, or make them do math faster, then how can we speed up applications? NVIDIA took a different direction: the future of faster computing would be based on increased parallelism using a higher number of more efficient compute cores. Figure 1 shows how well NVIDIA GPUs have scaled performance over CPUs. NVIDIA applied this paradigm to graphics very successfully, since graphics require parallel computing. For example, determining what color to shade all 4K pixels on your monitor is a highly parallel operation because it’s often the same operation for every pixel. Historically, supercomputers increased the number of nodes in order to run ever large scientific applications.

For example, if I wanted to simulate a gas, I could apply F=ma when a particle interacts with another particle in the system. For a large problem that I can’t fit in one computer, say our gas is the water vapor contained in the whole atmosphere of our planet Earth, I could break up the problem up and run it across computers that are connected via network. One begins to see how GPUs can be used for the same purpose.

Around 2008 GPUs began gaining traction as massive parallel compute architectures, especially in the field of materials science, being used for molecular dynamics simulations. Instead of using 500 nodes, with all their power-hungry peripherals, scientists figured out that 500 CUDA cores in a 9600GT could run scientific applications as fast as a few hundred nodes of a supercomputer at a fraction of the power, with a single card. GPUs enable a massive increase in parallelism for each node, reducing the need to add more nodes.

Oak Ridge National Laboratory took notice, and when the time came to plan for the upgrade of their supercomputer at the time, Jaguar, designers submitted a proposal to upgrade Jaguar by adding GPUs to the PCIe slots on the existing boards. In 2012, Jaguar, a 2.7PF system using 7MW of peak power, became Titan, a 27PF system using 8.2MW of peak power. A 10x gain in peak performance came about using a fraction of the power necessary to build an equivalent system out of CPUs only.

ORNL recognized the advent of a major architectural shift into accelerated computing in 2008: using GPUs was needed to continue to advance computational science. This meant developers needed to port applications to use accelerators alongside CPUs. Developers needed to move the most computationally intensive parts of their applications using a combination of GPU-accelerated libraries, directives, and/or CUDA to get the most performance out the NVIDIA Tesla K20X GPUs. The Titan supercomputer debuted as #1 in the TOP500 list in 2012 and consequently NVIDIA named the top of the line gaming card “Titan” that year. The GeForce product family continues to use the “Titan” name for its flagship developer/gamer card even today.

GPUs offers dense compute capacity consisting of thousands of cores that could be used to do math in parallel on very large scientific datasets. At first, developers found they could run their applications faster, but as they implemented more complex models the need for even more math, in the form of both greater compute power and increased memory capacity, arose. This is the cat-and-mouse game that supercomputing centers like the Oak Ridge Leadership Computing Facility must play. Before Titan had ended its first year in production, another round of requirements were already being drawn to address the new computational needs of the scientific community.

Supercomputers: From Titan to Summit

Titan proved it could meet these needs using GPU-accelerated computing; Summit is a continuation of this success. When the time came for DOE to upgrade its supercomputers, it took an unusual and unprecedented step of combining three labs’ needs into a single call for proposals. The DOE had seen the benefit in taking a bold step with Titan and this time around, it required that the two leadership computing facilities, at Oak Ridge and Argonne, select different architectures from one another. The third lab, Livermore National Laboratory, would then choose from one of these two selected architectures. The most important requirements of these new systems were simple: stay within a power envelope of no more than 15MW and also accelerate applications anywhere between 5-10x over baselines obtained on the then state of the art supercomputers.

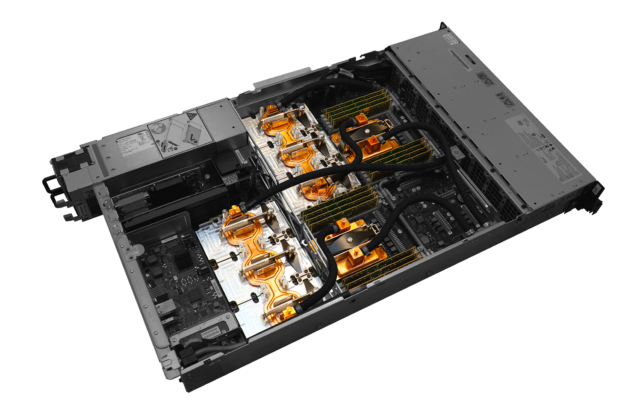

NVIDIA partnered with IBM to propose an accelerator-based computer with 2 CPUs + 6 GPUs. Go big or go home, right? These nodes were powerful — almost too powerful; “could users even program them?” As the training coordinator for OLCF at the time, I could feel the hesitation in the room across all three labs as we reviewed the proposals. However, I remained confident about the programmability and potential performance of what this machine could bring. Today’s NVIDIA DGX-2 includes 16 GPUs, but in 2013 six GPUs in a single box seemed exorbitant

to some reviewers.

ORNL had experience with Titan, and the choice to go with GPUs felt not only comfortable but necessary. We can’t depend on Moore’s Law or Dennard Scaling, and the more we thought about the architecture, the more 3:1 ratio of GPUs to CPUs made sense. Compared to Titan’s 18,688 nodes, Summit would have 4608 nodes, just a quarter of those available with Titan. Fewer nodes equals lower energy consumption and reduced infrastructure such as switches and cabling. Each six-GPU node delivers an impressive 30,720 CUDA and 3,840 Tensor cores from Volta GPUs; you can do a lot of math with that.

In Titan, the K20X GPU depended on a PCIe slot to connect the CPU and GPU memories, limiting the data transfer bandwidth to just 8GB/s. NVIDIA and IBM proposed NVLink a high-speed interconnect with 300GB/s aggregate bandwidth thus removing PCIe bandwidth constraints between GPU, CPU, and memory. With unified memory and NVLink, the developer could view the entire node memory (CPU memory, GPU memory, even non-volatile RAM) as a single addressable space and make migrating new applications to the Summit node architecture easier. Any CPU or GPU can read from or write to any memory location on the node, even atomically.

Once migrated to the GPU, your data could be fed to the CUDA and Tensor cores at a fantastic 900GB/s. When it came time to select the system, ORNL chose this architecture above all others. Livermore National Laboratory, with the choice between the two top selections, went with ORNL in choosing GPU acceleration based on Power9 + Voltas for their next supercomputer, Sierra. (https://computation.llnl.gov/computers/sierra) All of this computational power requires only around 13MW of power.

| Titan | Summit | |

| Compute Nodes | 18,688 | 4,608 |

| GPU | 1x NVIDIA K20x per node; 18,688 GPUs total |

6x NVIDIA Volta per node; 27,648 GPUs total |

| CPU |

1x AMD Opteron 16-core per node; 18,688 CPUs total |

2x IBM Power9 per node; 9,216 CPUs total |

| Node Performance | 1.44 teraflops | 49 teraflops |

| Peak Performance | 27 petaflops | 200 petaflops |

| System Memory | 710 terabytes | 10 petabytes |

|

CPU-GPU-Memory Interconnect |

PCI Gen 2 (8GB/s) | NVLink (300GB/s) |

| Power Consumption | 9MW | 13MW |

Table 1. Titan and Summit specs compared

Lessons Learned

The scientific outcomes of Titan inspired all who went to the whiteboard to design Summit, from basic building blocks to overall data center. Building a supercomputer is unlike buying a car. It’s a collaboration between national labs and the winning bidders. Staying at the bleeding edge of technology means making a selection decision based on architectures that don’t yet exist, but are on the roadmap maybe one or two generations in the future. Labs joke internally that they get “serial #0”, meaning they typically get the first items out of the factory of the latest technology, and then assemble it at scale onsite for the first time. Imagine buying a car, never having seen it, where they bring the parts to your house and build it on the spot, for the first time.

However, part of the partnership means there’s room to tweak the architecture along the way. For example, NVIDIA recognized during the Summit project that AI would become an important tool for science, and continued to innovate, delivering beyond the original 2014 specifications for Summit. Engineers included 640 Tensor Cores in each Volta GPU in Summit, allowing scientists the option to use FP16 precision not only in AI applications but also for simulation that depend heavily on matrix multiplications. This flexibility of simulation and AI in one single system makes Summit unique in its design.

Today we see that dedication and collaboration come to life in Summit. This system will allow scientists to simulate brain cells signals to reveal the origins of neurological disorders such as epilepsy, Alzheimer, and Parkinson’s Disease. Astrophysicists can explore how heavy metals get created by simulating 100s of nuclei that are created during supernova explosions, where in the past they could simulate less than 15. With over 10 PBytes of memory, Summit can do analytics on large health and genomic datasets, mining them for novel health insights.

The towering performance of Summit is the result of the work of several thousands of engineers at NVIDIA and IBM working closely with ORNL. The smartest people coming together to build the best of what technology has to offer is what makes Summit the smartest supercomputer ever built.