Data centers are an essential part of a modern enterprise, but they come with a hefty energy cost. To complicate matters, energy costs are rising and the need for data centers continues to expand, with a market size projected to grow 25% from 2023 to 2030.

Globally, energy costs are already negatively affecting data centers and high-performance computing (HPC) systems. To alleviate the energy cost burden, data center managers are deferring the purchase of new systems, limiting the capabilities of current systems, and even reducing operational hours. Because of this compounding growth in cost and need, it is essential to find alternative energy sources or maximize energy efficiency as quickly and cost-effectively as possible.

In this post, we discuss four practical strategies for reducing data center energy consumption. By implementing these strategies, you can reduce energy costs and improve the performance and reliability of your data center. Ultimately, these strategies are just the first steps in improving your environmental, social, and governance (ESG) investing appeal, an increasing priority for global investors.

Before diving in, we want to note that energy efficiency is only one step on the path to sustainability. Data center energy efficiency sits under the umbrella of sustainable computing: the design, manufacture, use, and disposal of computers, chips, and other technologies to achieve a net-zero impact on the environment.

For more information about sustainable computing through the use of AI, see the Three Strategies to Maximize Your Organization’s Sustainability and Success in an End-to-End AI World GTC session with NVIDIA Accelerated Computing Chief Technology Officer Steve Oberlin.

Here are some technology-based factors and operational considerations to maximize energy efficiency in your data center:

- Accelerated computing

- Energy-efficient hardware

- Scheduling and containers

- Efficient cooling

Accelerated computing

“The amount of power that the world needs in the data center will grow. That’s a real issue for the world. The first thing that we should do is: every data center in the world, however you decide to do it, for the goodness of sustainable computing, accelerate everything you can.”

Jensen Huang, NVIDIA founder and CEO

Moore’s law is the observation that the number of transistors in an integrated circuit doubles roughly every 2 years, which for decades brought comparable gains in the speed and capability of computers. However, the speed side of this historical trend has reached its end, as the speed of transistors stopped increasing around 2009.

Now, as the frequency benefits of Moore’s law wane, single-threaded performance has reached a plateau. Software tool vendors have had to seek other methods of improving performance.

Industry leaders are turning to parallelism and GPU-powered accelerated computing. They see these strategies as the clearest way to maximize performance within the data center power envelope, because higher energy efficiency means more work done per watt.

Accelerated computing is also the most cost-effective way to achieve energy efficiency in a data center. By using specialized hardware, such as GPUs and DPUs, to carry out certain common complex computations faster and more efficiently than general-purpose CPUs, data centers can perform more computations with less energy. This reduces energy consumption and time-to-solution and also lowers the carbon footprint per computation.
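To make the "more computations with less energy" argument concrete, here is a minimal sketch of the underlying arithmetic: energy-to-solution is average power draw multiplied by runtime. The function name and all power and runtime figures below are hypothetical, chosen only to illustrate the calculation. They are not measurements of any real system.

```python
def energy_to_solution_kwh(avg_power_watts: float, runtime_hours: float) -> float:
    """Energy consumed by a job: average power draw multiplied by runtime."""
    return avg_power_watts * runtime_hours / 1000.0


# Hypothetical figures for illustration only (not benchmark results).
# A CPU-only run draws less power but takes far longer to finish.
cpu_only_kwh = energy_to_solution_kwh(avg_power_watts=800, runtime_hours=10)
# An accelerated run draws more power but finishes much sooner.
accelerated_kwh = energy_to_solution_kwh(avg_power_watts=2000, runtime_hours=1)

# Fraction of energy saved by the accelerated run for the same job.
savings_fraction = 1 - accelerated_kwh / cpu_only_kwh

print(f"CPU-only:    {cpu_only_kwh:.1f} kWh")
print(f"Accelerated: {accelerated_kwh:.1f} kWh")
print(f"Energy saved: {savings_fraction:.0%}")
```

The point of the sketch is that a higher instantaneous power draw can still mean lower total energy when the time-to-solution drops by a larger factor.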

Energy-efficient hardware

Energy-efficient hardware is a central part of the accelerated computing spectrum and is a powerful investment for any sustainable computing strategy.

For example, high-speed interconnects such as direct chip-to-chip (C2C) data transfer paths provide direct memory access between processing cores. NVIDIA Grace Hopper pairs an NVIDIA Grace CPU and an NVIDIA Hopper H100 GPU with a 900 GB/s interconnect, enabling rapid direct data transfers that help keep the GPU fully utilized. This reduces the energy consumed to perform a workload.

When choosing new hardware for your data center, consider both efficiency and performance, because not all energy-efficient technologies provide superior performance.

Fortunately, a new wave of full-stack, data center-scale, energy-efficient hardware is available for a wide variety of use cases. NVIDIA Grace CPU, NVIDIA Grace Hopper, and NVIDIA BlueField-3 are new chips for super energy-efficient accelerated data centers.

Mainstream applications are seeing 2x gains over x86 in energy-efficient performance on microservices, analytics, simulations, and more from the NVIDIA Grace CPU alone.

Scheduling and containers

Containerization is a software deployment process that bundles an application’s code with all the files and libraries it needs for running on any infrastructure.

While containerization and scheduling may not apply to supercomputing centers and HPC workloads, which run at capacity in most cases, they are valuable energy-efficiency solutions for enterprise workloads.

Data centers can achieve greater resource utilization by encapsulating applications and their dependencies within lightweight, isolated containers. Containers give you fine-grained control over resource allocation, so you can allocate only the necessary CPU, memory, and storage resources for each application or service.

This efficient resource utilization translates into reduced energy consumption as unnecessary resources are not allocated or wasted. Containerization also enables rapid deployment, scaling, and migration of applications, leading to improved agility and optimized utilization of data center resources.

Scheduling mechanisms and techniques are vital for maximizing energy efficiency in data centers. Advanced scheduling algorithms, such as workload-aware and power-aware schedulers, consider both the computational requirements of applications and the available resources to make intelligent scheduling decisions. By strategically placing and consolidating workloads on servers, scheduling algorithms can ensure the efficient utilization of resources. This minimizes energy waste from underused or idle servers.
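As an illustration of workload consolidation, the following minimal sketch packs workloads onto as few servers as a simple heuristic can find. It uses first-fit decreasing bin packing, which is our choice for the example and not a description of any particular production scheduler. The `consolidate` function, the single-number load model (percent of one server's capacity), and the sample loads are all hypothetical.

```python
from typing import List


def consolidate(loads_pct: List[int], capacity_pct: int = 100) -> List[List[int]]:
    """Place workloads on servers using first-fit decreasing bin packing.

    Each load is a percentage of one server's capacity (a simplifying
    assumption: real schedulers track CPU, memory, and more).
    """
    servers: List[List[int]] = []
    # Placing the largest workloads first tends to leave fewer gaps.
    for load in sorted(loads_pct, reverse=True):
        if load > capacity_pct:
            raise ValueError(f"workload of {load}% exceeds server capacity")
        for server in servers:
            if sum(server) + load <= capacity_pct:
                server.append(load)  # fits on an already active server
                break
        else:
            servers.append([load])  # no active server has room: power on another
    return servers


# Hypothetical workloads, as percent of one server's capacity.
workloads = [50, 20, 40, 30, 10, 50]
placement = consolidate(workloads)

for index, server in enumerate(placement, start=1):
    print(f"server {index}: {server} -> {sum(server)}% utilized")
print(f"active servers: {len(placement)} (versus {len(workloads)} with one workload each)")
```

Servers left without any workload can then be idled or powered down, which is where the energy saving in this simplified model comes from.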

Dynamic power management techniques, such as power capping and frequency scaling, can be integrated into scheduling algorithms to optimize energy consumption by dynamically adjusting the power usage of servers based on workload demands. By using intelligent scheduling mechanisms, data centers can achieve higher resource utilization and reduce energy consumption, resulting in improved energy efficiency.
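The interaction between power capping and frequency scaling can be sketched with a toy model. Here we assume that server power is an idle floor plus a term that grows with the cube of clock frequency, a common textbook approximation when voltage scales with frequency. The model, its coefficients, the frequency levels, and the function names are all assumptions made for illustration, not values from any real server or vendor tool.

```python
from typing import Sequence


def modeled_power_watts(freq_ghz: float, idle_watts: float = 100.0,
                        coeff: float = 10.0) -> float:
    """Toy power model (assumption): idle floor plus a cubic frequency term."""
    return idle_watts + coeff * freq_ghz ** 3


def pick_frequency(cap_watts: float, available_ghz: Sequence[float]) -> float:
    """Return the highest frequency whose modeled power stays under the cap.

    If no level fits, fall back to the lowest available frequency.
    """
    candidates = [f for f in available_ghz if modeled_power_watts(f) <= cap_watts]
    return max(candidates) if candidates else min(available_ghz)


# Hypothetical frequency levels exposed by a server.
levels_ghz = [1.0, 1.5, 2.0, 2.5, 3.0]

for cap in (400.0, 200.0, 90.0):
    chosen = pick_frequency(cap, levels_ghz)
    print(f"cap {cap:.0f} W -> run at {chosen} GHz "
          f"(modeled {modeled_power_watts(chosen):.2f} W)")
```

A power-aware scheduler could apply the same idea in a loop, tightening the cap on lightly loaded servers and relaxing it where demand is high.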

Efficient cooling

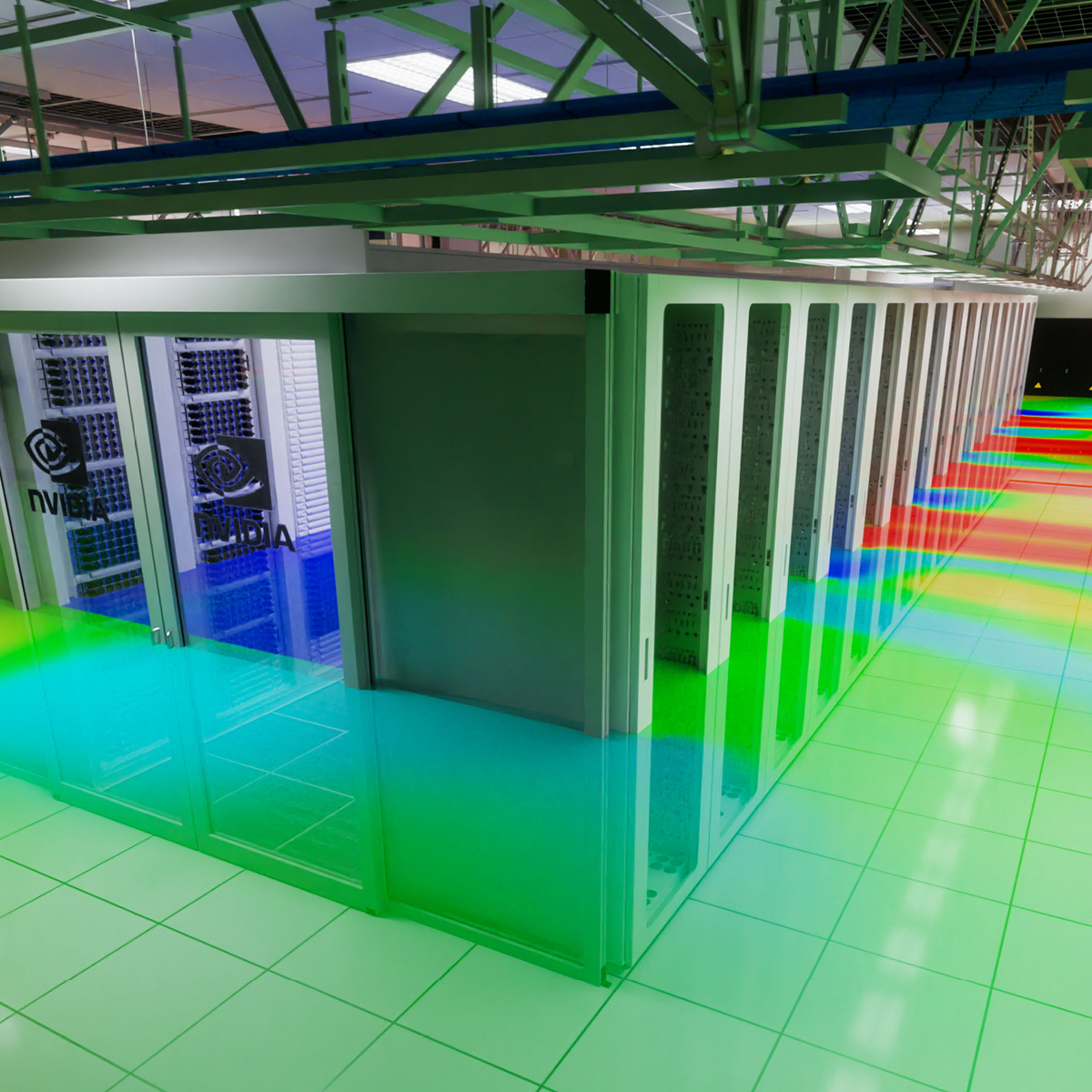

The average cooling system consumes an eye-watering 40% of the data center’s total power, which makes cooling a top priority in any energy efficiency strategy. Key measures include the following:

- Implementing hot aisle and cold aisle containment

- Optimizing airflow management

- Using efficient cooling technologies, such as direct liquid cooling (DLC)

With hot aisle/cold aisle containment, cold air from the air conditioning system is directed into the cold aisle, while hot air from servers and other equipment is directed into the hot aisle. This keeps hot exhaust air from mixing with the cold supply air, so cooling capacity is not wasted.

In addition to hot aisle/cold aisle containment, airflow management should also be optimized to reduce power consumption. By monitoring the flow of air between servers and other IT equipment, potential blockages can be identified and removed, which will help ensure that cool air reaches all areas of the data center efficiently. Furthermore, this practice helps maintain safe temperatures throughout the facility by preventing hotspots from forming due to trapped warm air.
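As a minimal sketch of what airflow monitoring might look like in practice, the following example flags racks whose inlet temperature sits well above the room's median reading, which can point to a blockage or recirculating warm air. The rack names, temperature readings, 5 °C margin, and the `find_hotspots` helper are all hypothetical. A real deployment would read from its own sensors and choose thresholds to match its cooling design.

```python
from statistics import median
from typing import Dict, List


def find_hotspots(inlet_temps_c: Dict[str, float], margin_c: float = 5.0) -> List[str]:
    """Return racks whose inlet temperature exceeds the median by more than margin_c."""
    baseline = median(inlet_temps_c.values())
    return sorted(
        rack for rack, temp in inlet_temps_c.items() if temp - baseline > margin_c
    )


# Hypothetical inlet temperature readings in degrees Celsius.
readings = {
    "rack-a1": 22.0,
    "rack-a2": 23.5,
    "rack-a3": 31.0,
    "rack-b1": 22.5,
    "rack-b2": 24.0,
}

hotspots = find_hotspots(readings)
print("racks to inspect for blocked or recirculating airflow:", hotspots)
```

Comparing against the median instead of a fixed temperature keeps the check meaningful when the whole room runs warmer or cooler than usual.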

Finally, efficient cooling technologies such as DLC can profoundly reduce energy consumption in data centers. Direct liquid cooling circulates liquid directly in contact with electronic components, such as CPUs and GPUs, to dissipate heat more efficiently. This enables DLC to offer several energy-saving advantages such as improved heat transfer, reduced airflow requirements, targeted cooling, and waste heat reuse.

Best practices for energy-efficient data center design and operation

The current period in data center evolution presents a unique opportunity to lead the charge toward a more sustainable future by prioritizing energy efficiency in your data center. By implementing the top four strategies to maximize energy efficiency in your data center, you can reduce your carbon footprint, save on operating costs, and position your organization as a leader in sustainable computing.

But it is not just about the immediate benefits. By embracing sustainable computing practices, you are also future-proofing your organization against the growing demands for environmental responsibility and meeting ESG goals.

As more customers and stakeholders prioritize sustainability, your commitment to energy efficiency and sustainable computing can help you attract and retain top talent. It can also help build stronger relationships with your customers and position your organization as a responsible and forward-thinking leader.

So, as you consider the top four ways to maximize energy efficiency in your data center, remember that this is more than just a cost-saving measure. It is an opportunity to make a positive impact on the planet, build a stronger and more resilient organization, and create a better future for all.

For more information about power efficiency and energy efficiency solutions, see the NVIDIA Sustainable Computing Resources Center.