Every week we bring you the top NVIDIA updates and stories for developers. In this week’s edition of our top 5 videos, we highlight a new GPU-accelerated supercomputer at MIT, a new Jetson-based drone, a GAN for fashion, and the brand-new RTX Broadcast Engine SDKs.

Watch below:

5 – New Skydio 2 Drone Powered by NVIDIA Jetson

Skydio, a Redwood City, California-based company and member of NVIDIA’s startup accelerator, Inception, has just released the latest version of its AI-capable, GPU-accelerated drone, Skydio 2.

Equipped with six 4K cameras and an NVIDIA Jetson TX2 as the processor for its autonomous system, Skydio 2 can fly for up to 23 minutes at a time and can be piloted either by an experienced pilot or by the AI-based system.

The Jetson TX2 has 256 GPU cores and is capable of 1.3 trillion operations a second. According to the team, the drone uses nine custom deep neural networks that help it track up to 10 objects while traveling at speeds of up to 36 miles per hour.
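As a rough sanity check on the 1.3 trillion figure, here is a minimal sketch of how a number of that size can be derived from the 256 cores quoted above. The 1.3 GHz clock, the two operations per fused multiply-add, and the two half-precision values per core per cycle are assumptions for illustration and are not taken from the article.

```python
# Back-of-the-envelope peak throughput for a 256-core GPU.
CUDA_CORES = 256                 # quoted in the article
ASSUMED_CLOCK_HZ = 1.3e9         # assumption: maximum GPU clock of about 1.3 GHz
OPS_PER_FMA = 2                  # assumption: a fused multiply-add counts as two operations
FP16_VALUES_PER_CORE = 2         # assumption: two half-precision values per core per cycle


def peak_ops_per_second(cores, clock_hz, ops_per_fma, values_per_core):
    """Return the theoretical peak operations per second."""
    return cores * clock_hz * ops_per_fma * values_per_core


peak = peak_ops_per_second(
    CUDA_CORES, ASSUMED_CLOCK_HZ, OPS_PER_FMA, FP16_VALUES_PER_CORE
)
print(f"{peak / 1e12:.2f} trillion operations per second")  # prints 1.33
```

Under these assumptions the result lands close to the quoted figure. This is a theoretical peak, so real workloads such as the drone's neural networks would see lower sustained throughput.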

4 – MIT Lincoln Laboratory Supercomputing Center Installs World’s Fastest Supercomputer at a University

To power AI applications and research across engineering, science, and medicine, the Massachusetts Institute of Technology (MIT) Lincoln Laboratory Supercomputing Center has just installed a new GPU-accelerated supercomputer, powered by 896 NVIDIA V100 Tensor Core GPUs.

According to MIT, the new system, named TX-GAIA (for Green AI Accelerator), was ranked by TOP500 as the most powerful AI supercomputer at any university in the world.

The new supercomputer has a peak performance of 100 AI petaFLOPs, as measured by the computing speed required to perform mixed-precision floating-point operations commonly used in building deep neural networks. Overall, the system features a measured performance of 4.725 petaFLOPs and is based on the HPE Apollo 2000 system, which is specifically designed for HPC and optimized for AI.
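To put the peak figure in context, the short sketch below divides the quoted 100 AI petaFLOPs by the 896 GPUs to get the implied per-GPU peak. It then multiplies back up from an assumed per-GPU value. The 112 teraFLOPs constant is an assumption based on the published mixed-precision peak for the PCIe version of the V100. The article does not say which V100 variant TX-GAIA uses.

```python
# Figures quoted in the article.
NUM_GPUS = 896
PEAK_AI_PETAFLOPS = 100.0

# Assumption (not from the article): per-GPU mixed-precision peak in teraFLOPs.
ASSUMED_V100_TENSOR_TFLOPS = 112.0


def implied_per_gpu_tflops(peak_petaflops, num_gpus):
    """Per-GPU peak in teraFLOPs implied by a system-wide peak in petaFLOPs."""
    return peak_petaflops * 1000.0 / num_gpus


def aggregate_petaflops(per_gpu_tflops, num_gpus):
    """System-wide peak in petaFLOPs for a given per-GPU peak in teraFLOPs."""
    return per_gpu_tflops * num_gpus / 1000.0


per_gpu = implied_per_gpu_tflops(PEAK_AI_PETAFLOPS, NUM_GPUS)
total = aggregate_petaflops(ASSUMED_V100_TENSOR_TFLOPS, NUM_GPUS)

print(f"Implied per-GPU peak: {per_gpu:.1f} teraFLOPs")               # prints 111.6
print(f"Aggregate at assumed per-GPU peak: {total:.1f} petaFLOPs")    # prints 100.4
```

The 4.725 petaFLOPs measured result and the 100 petaFLOPs mixed-precision peak describe different kinds of arithmetic, so their ratio should not be read as an efficiency figure.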

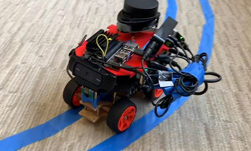

3 – Interns Top Competition with Jetson Nano at Booz Allen Summer Games Challenge

This summer, student interns at Booz Allen Hamilton bested the competition on edge computing with the help of NVIDIA Jetson Nano.

The Booz Allen Summer Games Challenge (SGC) calls on student interns across the U.S. to develop breakthrough solutions for its clients’ most pressing problems. This summer, Project RAZOR placed in the top 10 among artificial intelligence and machine learning projects with a fully autonomous ground vehicle powered by Jetson Nano.

The team competed against 80 other teams of four to five students developing projects over 10 weeks. Previous SGC winning projects include technology that helps the blind navigate, as well as ways to fight human trafficking and global disease.

2 – Entrepreneur Brings GPUs to Fashion

Pinar Yanardag and cofounder Emily Salvador, both from the MIT Media Lab, recently started a company that creates dresses based on AI-generated designs.

The AI component invents outfits humans might not think of — in Glitch-AI’s reimagining of the classic Little Black Dress, one arm of the dress is a bell sleeve, and the other is straight.

Yanardag sat down with AI Podcast host Noah Kravitz to talk about this project, along with her other new creations.

1 – NVIDIA RTX Broadcast Engine Brings Twitch Livestreams to Life with AI

Powered by Tensor Cores on RTX GPUs, the new RTX Broadcast Engine SDKs enable virtual greenscreens, style filters and augmented reality effects — the kind of techniques used by major broadcast networks — all using AI and without the need for special equipment.

The new SDKs include:

- RTX Greenscreen, to deliver real-time background removal of a webcam feed, so only your face and body show up on the livestream. The RTX Greenscreen AI model understands which part of an image is human and which is background, so gamers get the benefits of a greenscreen without needing to buy one.

- RTX AR, which can detect faces, track facial features such as eyes and mouth, and even model the surface of a face, enabling real-time augmented reality effects using a standard web camera. Developers can use it to create fun, engaging AR effects, such as overlaying 3D content on a face or allowing a person to control 3D characters with their face.

- RTX Style Filters, which use an AI technique called style transfer to transform the look and feel of a webcam feed based on the style of another image. With the press of a hotkey, you can style your video feed with your favorite painting or game art.
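To illustrate the idea behind a virtual greenscreen, the sketch below shows only the final compositing step: given a per-pixel mask saying how much of each pixel is "person", it blends the webcam frame over a replacement background. It does not use the RTX Broadcast Engine SDKs, and the `composite` function, the mask format, and the sample pixel values are all hypothetical. In a real pipeline the mask would come from a segmentation model.

```python
def composite(frame, person_mask, background):
    """Blend `frame` over `background` using a person mask.

    frame, background: rows of (R, G, B) tuples with values 0-255.
    person_mask: rows of floats in [0, 1]; 1.0 means "person", 0.0 means "background".
    """
    output = []
    for frame_row, mask_row, bg_row in zip(frame, person_mask, background):
        out_row = []
        for fg_pixel, alpha, bg_pixel in zip(frame_row, mask_row, bg_row):
            # Standard alpha blend, applied per color channel.
            blended = tuple(
                round(alpha * fg + (1.0 - alpha) * bg)
                for fg, bg in zip(fg_pixel, bg_pixel)
            )
            out_row.append(blended)
        output.append(out_row)
    return output


# Hypothetical 1x2 frame: the left pixel is the streamer, the right pixel is the room.
frame = [[(200, 150, 100), (10, 20, 30)]]
mask = [[1.0, 0.0]]
new_background = [[(0, 255, 0), (0, 255, 0)]]

result = composite(frame, mask, new_background)
print(result)  # [[(200, 150, 100), (0, 255, 0)]]
```

Fractional mask values give soft edges around hair and shoulders, which is why the mask is treated here as a float rather than a strict yes-or-no label.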