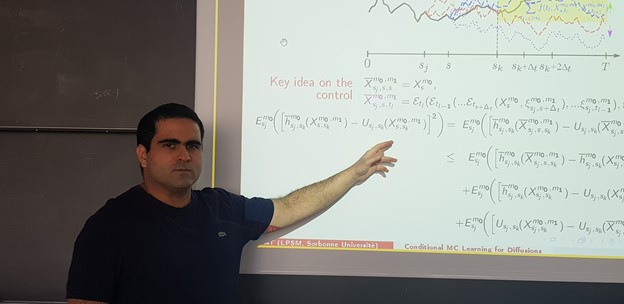

‘Meet the Researcher’ is a monthly series in which we spotlight researchers who are using GPUs to accelerate their work. This month we spotlight Lokman Abbas Turki, lecturer and researcher at Sorbonne University in Paris, France.

What area of research is your lab focused on?

If I had to sum it up in a few words, I would say computer science probability. More precisely, I apply probability and high performance computing to computationally complex problems in mathematical finance and, more recently, to increasing the resilience of asymmetric cryptosystems against side-channel attacks. Quantitative (mathematical) finance has the desirable property of usually relying on Monte Carlo-based methods, which are well suited to parallel architectures. In addition, a numerical study of the resilience of cryptosystems is only satisfactory when conducted at a large scale, and thus has to be efficiently distributed on parallel machines.
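To illustrate why Monte Carlo methods map so naturally onto parallel hardware, here is a minimal Python sketch that prices a European call option by splitting the simulation into independent batches. It is an illustration only, not code from the research described here: the Black-Scholes model, the parameter values, and the function names (`simulate_batch`, `monte_carlo_price`, `black_scholes_call`) are all assumptions chosen for the example.

```python
import math
import random


def simulate_batch(seed, n_paths, s0=100.0, strike=100.0,
                   rate=0.05, vol=0.2, maturity=1.0):
    """Return the sum of discounted call payoffs over one batch of paths."""
    rng = random.Random(seed)  # each batch owns its own random stream
    drift = (rate - 0.5 * vol ** 2) * maturity
    diffusion = vol * math.sqrt(maturity)
    total = 0.0
    for _ in range(n_paths):
        # Terminal asset price under the (assumed) Black-Scholes model.
        s_t = s0 * math.exp(drift + diffusion * rng.gauss(0.0, 1.0))
        total += max(s_t - strike, 0.0)
    return math.exp(-rate * maturity) * total


def monte_carlo_price(n_batches=8, n_paths=20_000):
    """Average the payoffs of all batches into a single price estimate."""
    # The batches never communicate: this loop runs them one after another,
    # but each call could go to a separate thread, process, or GPU block.
    partial_sums = [simulate_batch(seed, n_paths) for seed in range(n_batches)]
    return sum(partial_sums) / (n_batches * n_paths)


def black_scholes_call(s0=100.0, strike=100.0, rate=0.05, vol=0.2, maturity=1.0):
    """Closed-form reference price, used only to sanity-check the estimate."""
    sqrt_t = math.sqrt(maturity)
    d1 = (math.log(s0 / strike) + (rate + 0.5 * vol ** 2) * maturity) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t

    def normal_cdf(x):
        return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

    return s0 * normal_cdf(d1) - strike * math.exp(-rate * maturity) * normal_cdf(d2)


if __name__ == "__main__":
    print("Monte Carlo estimate:", monte_carlo_price())
    print("Closed-form price:   ", black_scholes_call())
```

The only step that needs data from more than one batch is the final sum, which is why the cost of such a simulation tends to shrink almost linearly with the number of workers.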

What motivated you to pursue this research area?

It started during my engineering studies in signal processing at Supélec (a French graduate school of engineering). I was impressed by the beauty of some mathematical results and the technicalities of their implementation on machines, especially the work of the Young Turks at Bell Labs, who included famous names like Claude Shannon, Richard Hamming, and John Tukey. Then, during my Master’s degree in Mathematics in 2007/2008, I was fortunate enough to work at the CERMICS research center of École des Ponts on the parallelization of finance simulations on GPUs. I started with the Cg shading language, then moved to CUDA on one GPU, then to CUDA+OpenMP+MPI on a cluster of GPUs.

Once I had learned how to program GPUs and obtained significant speedups, I could not stop using them. I was also convinced that the gaming community would keep growing, which ensures that GPUs are here to stay, unlike other co-processors. As a result, I continued my research in probability with a special focus on the scalability of the proposed methods on massively parallel architectures.

Tell us about a few of your current research projects.

I usually find myself involved in different projects that allow me to collaborate continuously with colleagues in both Mathematics and Computer Science. For example, until last year I participated in the ANR project ARRAND, which studied the impact of randomization in asymmetric cryptography. Since 2016, I have nurtured various collaborations with Crédit Agricole on probability, high performance computing, and deep learning applied to finance. Some of the resulting codes are provided to the Premia consortium project led by INRIA. In September 2020, I also started participating in the Stress Testing chair established between École Polytechnique and BNP Paribas.

What problems or challenges does your research address?

Over the last two years, I have spent most of my research time on the following problems:

1. In asymmetric cryptography, as a continuation of the work in [1], my Ph.D. student and I studied a sophisticated version of template attacks based on a relaxed conditional maximum likelihood estimator.
2. In the Monte Carlo simulation of nonlinear parabolic PDEs (Partial Differential Equations) [2], we present a new conditional learning method that allows us to control the bias.
3. In high performance computing, as a continuation of the work in [3], we are gradually improving and extending the batch parallel divide and conquer algorithm for eigenvalues.
4. In deep learning [4], we show a new method to reduce the variance of the loss estimator and thus accelerate the convergence to the optimal choice of the network parameters.

The solution to problem 3 is used in problems 1 and 2. The solution to problem 4 allows unconditional learning presented in problem 2.

What is the (expected) impact of your work on the field/community/world?

In research, I really like being at the interface of various disciplines. Although very involved, I contribute to generating collaborations between colleagues specialized in different areas. For example, at Sorbonne Université, I have various collaborations with members of the probability/statistics laboratory LPSM and with members of the computer science research institute LIP6. In both the applied mathematics and computer science communities, I argue that the old sequential deterministic methods are becoming less and less relevant because of the massively parallel architectures that we have today and their democratization through public cloud computing. As alternatives, I argue for the use of stochastic methods based on Monte Carlo, which are more scalable and can be combined more naturally with neural networks.

In teaching activities, my “Programming GPUs” course is a meaningful success since it allows me to explain the new paradigms of parallelization to about 150 students and researchers each year, coming from various specialties in mathematics and computer science. Among all my courses, “Programming GPUs” is the one that has evolved the most. It started nine years ago as an introduction to CUDA, then shifted to the use of GPUs for simulating parabolic PDEs (Partial Differential Equations). The current version is dedicated mainly to batch parallel processing and will soon include elements of deep learning.

What technological breakthroughs are you most proud of from your work?

In the various collaborations I have had, we were able to push the envelope a bit further. For example, for parabolic PDEs in [2], we simulate and control the bias of very complicated high-dimensional problems that cannot be efficiently simulated using other methods. It is worth mentioning that the very competitive execution times that we present in [2] are possible because of the CUDA parallelization of the batch processing strategies presented in [3]. Regarding contribution [4], in which we use deep learning with an inexpensive over-simulation trick, we are the first to propose a training that converges (cf. flash video) for a very high-dimensional problem of CVA (Credit Valuation Adjustment) simulation.

How have you used NVIDIA technology either in your current or previous research?

An efficient CUDA parallelization of my codes on NVIDIA GPUs gives me the computing power to speed up the critical parts of my algorithms by a factor that always exceeds 20, even when compared to a vectorized AVX implementation on CPUs. The speedup sometimes exceeds 200 for embarrassingly parallel operations that make heavy use of caching. Consequently, each algorithm that I write or supervise is automatically implemented using either C++/CUDA or Python/CUDA.

What’s next for your research?

In the Stress Testing chair, I will be involved in exploring new methods for hedging the risks of extreme events. The majority of current methods for computing high quantiles are essentially sequential. With a colleague at LISN (Laboratoire Interdisciplinaire des Sciences du Numérique), we have already started exploring a promising new parallel method that trains NNs (Neural Networks) using nested Monte Carlo. In the numerical probability community, the use of NNs with Monte Carlo is becoming standard. However, current contributions implement NNs for their ability to provide a solution to complex problems, and not for their genericity, that is, their ability to be reused by other applications (automation) and for a large variety of data (scalability). Automation and scalability are key features in AI that help to overcome the difficulty of computing high quantiles.
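For readers unfamiliar with nested Monte Carlo, the following Python sketch estimates a high quantile of a conditional expectation on a toy problem. It is plain nested Monte Carlo without any neural network, so it is not the method under exploration. The toy model and the names `scenario_loss` and `nested_quantile` are assumptions made for the example: each outer scenario draws X ~ N(0, 1), and the inner loop estimates E[(X + Z)^2 | X] with Z ~ N(0, 1), whose exact value is X^2 + 1. The exact 99% quantile of X^2 + 1 is about 7.63, which gives a reference for the estimate.

```python
import random


def scenario_loss(scenario_id, n_inner):
    """Inner Monte Carlo estimate of E[(X + Z)^2 | X] for one outer scenario."""
    rng = random.Random(scenario_id)  # one independent stream per scenario
    x = rng.gauss(0.0, 1.0)           # outer draw: the risk factor X
    inner_sum = 0.0
    for _ in range(n_inner):
        inner_sum += (x + rng.gauss(0.0, 1.0)) ** 2  # inner draw: Z
    return inner_sum / n_inner


def nested_quantile(level=0.99, n_outer=4000, n_inner=200):
    """Empirical quantile of the inner estimates across all outer scenarios."""
    # Each scenario is independent of the others, so this loop is the part
    # that could be distributed. Only the final sort needs all the results.
    losses = sorted(scenario_loss(i, n_inner) for i in range(n_outer))
    index = min(int(level * n_outer), n_outer - 1)
    return losses[index]


if __name__ == "__main__":
    print("Nested Monte Carlo 99% quantile:", nested_quantile())
```

The cost is the product of the outer and inner sample sizes, and the noise of the inner estimates biases the quantile, which is part of what makes high quantiles expensive to compute this way.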

Any advice for new researchers?

Avoid working on anything that does not scale with increasing data size or with greater computing power. Moreover, since these two kinds of scalability require sufficient technical skills in probability/statistics and low-level programming capabilities respectively, make sure to gain an edge by mastering the latest advances in both of these fields.

References

[1] J. Courtois, L. Abbas‑Turki and J.‑C. Bajard (2019): Resilience of randomized RNS arithmetic with respect to side-channel leaks of cryptographic computation, IEEE Transactions on Computers, vol. 68 (12), pp. 1720-1730.

[2] L. A. Abbas-Turki, B. Diallo and G. Pagès (2020): Conditional Monte Carlo Learning for Diffusions I & II, https://hal.archives-ouvertes.fr/hal-02959492/ and https://hal.archives-ouvertes.fr/hal-02959494/

[3] L. A. Abbas-Turki and S. Graillat (2017): Resolving small random symmetric linear systems on graphics processing units, The Journal of Supercomputing, vol. 73, pp. 1360-1386.

[4] L. A. Abbas-Turki, S. Crépey and B. Saadeddine (2020): Deep X-Valuation Adjustments (XVAs) Analysis, presented at NVIDIA GTC and QuantMinds International.