Many of you may not recognize my company, Ribbon Communications. We are best known for building and securing large telecom networks for communication service providers (also known as phone companies). However, there’s a good chance that in the next day or two, you’ll place a call that traverses a piece of our gear somewhere in the world. In addition to service providers, we have a substantial practice working with large enterprises, the kinds of organizations that need carrier-grade services because of their size or the critical nature of their communications. That includes universities, healthcare institutions, financial services firms, government agencies, and so on.

A short while ago, one of our customers, one of the largest investment banks in the world, approached Ribbon with a problem. They wanted to use advanced AI to analyze their contact center calls in real time so that they could act immediately on what the AI observed. They wanted to ingest the audio stream, transcribe it into text immediately, and then analyze the text just as quickly to look for issues such as poor customer satisfaction, threatening behavior, and fraud attempts. The sooner the audio was transcribed, the easier it would be to store and search. Our customer could also use the text for other forms of trend analysis that could spot emerging issues, for example, customer sentiment with a certain agent.

Anyone who has ever tried to search a recording can appreciate why a bank with thousands of calls a day would rather store transcriptions than audio, and would rather search for issues with AI tools than with traditional search tools. Unfortunately, the bank was stymied by several common technical issues:

- The bank needed a secure element that could sit in the middle of thousands of contact center calls and replicate all the call media streams so the streams could be sent to an AI engine.

- Because the element sits in the middle of these calls, it can never fail, and it can never degrade the calls. It also has to be secure enough that a third party cannot find a way to intercept the streams, and it must not be open to compromise or overload through a denial-of-service (DoS) attack.

- The telephone network carries call audio as Real-time Transport Protocol (RTP) streams, a media format that AI engines do not accept directly. The bank could not just send the raw audio streams of all calls to an AI engine.

- The bank wanted to use the real-time audio streams to execute multiple AI-based services at the same time. That means that they needed multiple copies of the real-time audio sent to different AI services simultaneously to enable different constituencies in the bank to analyze the data and use the results for their own purposes.

Because the bank could not overcome these issues, they were forced to record calls in another format, store them, and then send the recordings to an AI engine for analysis. Recording was not acceptable as it introduced two drawbacks:

- The transcription and analysis are not real-time so there’s no way to leverage AI to react to issues happening right now. That dramatically reduces the value.

- Recordings can reduce audio quality. As you all know, lower audio quality inherently reduces the transcription accuracy of an AI platform.

Ribbon, working in collaboration with NVIDIA, created a solution. We used our extensive experience in managing telephone network audio and signaling and combined that with the NVIDIA Riva advanced conversational AI platform, powered by GPU technology.

Ribbon is well-known for its telecom network security software, session border controllers (SBCs), which provide wire-speed packet inspection and media manipulation. We took that know-how and created a secure interface to the telecom network so that we could securely access and replicate thousands of high-quality streams of telephone audio from the bank’s contact center (or any telephony source).

In real time, we convert those streams from RTP into AI-acceptable audio. The audio goes to Riva to be transcribed in real time. Line-of-business owners can then use that data for many different applications. The bank already has distinct use cases in mind, but developers could find many more, and the value could be applied across a wide range of industries. Any organization that receives a high volume of calls is a potential user. Target applications include:

- Regulatory compliance

- Real-time security or fraud analysis

- Real-time sentiment analysis

- Real-time translation

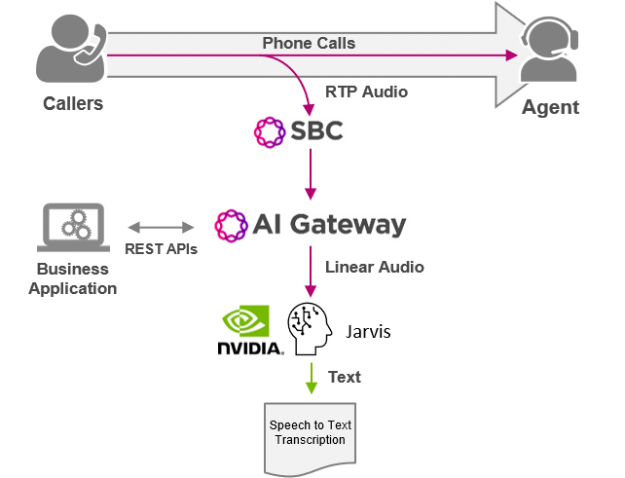

Figure 1 shows how the Ribbon AI gateway becomes a secure bridge between the telephone network and the AI data analytics domain. After the audio is converted to text, the range of potential applications grows considerably. Getting that text almost instantaneously expands both the opportunities to use the data and the value of that data.

Ribbon’s AI gateway architecture

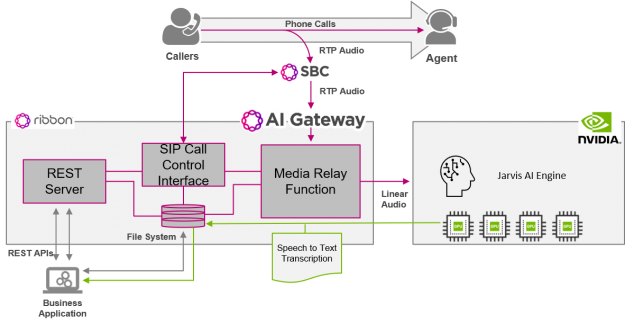

Figure 2 provides a view of the AI gateway components, how they connect to the contact center and integrate with the Riva AI engine.

In the diagram, the Ribbon SBC acts as a secure spigot that delivers thousands of high-quality streams of audio to the AI gateway, using standard telecom protocols: Session Initiation Protocol (SIP) for call signaling and RTP with various codecs for call audio. Each call participant’s audio is sent to the AI gateway as a separate stream to preserve audio quality.

The Media Relay component then converts each audio stream from RTP to AI-acceptable audio and delivers it to the AI engine for conversion to text or other Riva application functions. The text for each audio stream is then sent back to the AI gateway.
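To make the RTP-to-audio step concrete, here is a minimal sketch of what such a conversion involves. It is not Ribbon’s Media Relay implementation. It assumes the stream uses the G.711 mu-law codec (RTP payload type 0, PCMU) and that the AI engine accepts 16-bit linear PCM; the function names are hypothetical, and a real relay would also handle other codecs, packet reordering, jitter, and any resampling the engine needs.

```python
import struct


def parse_rtp(packet: bytes):
    """Split one RTP packet (RFC 3550) into (payload_type, seq, timestamp, payload)."""
    if len(packet) < 12:
        raise ValueError("shorter than the 12-byte RTP fixed header")
    first, second, seq, timestamp, _ssrc = struct.unpack("!BBHII", packet[:12])
    if first >> 6 != 2:
        raise ValueError("not RTP version 2")
    offset = 12 + 4 * (first & 0x0F)  # skip the CSRC list (4 bytes per entry)
    if first & 0x10:  # header extension present: 2-byte id, 2-byte length in words
        (ext_words,) = struct.unpack("!H", packet[offset + 2:offset + 4])
        offset += 4 + 4 * ext_words
    # If the padding bit is set, the last byte holds the padding length.
    end = len(packet) - (packet[-1] if first & 0x20 else 0)
    return second & 0x7F, seq, timestamp, packet[offset:end]


def ulaw_to_linear(code: int) -> int:
    """Decode one G.711 mu-law byte to a signed 16-bit linear sample."""
    code = ~code & 0xFF  # mu-law bytes are transmitted bit-inverted
    exponent = (code >> 4) & 0x07
    mantissa = code & 0x0F
    magnitude = (((mantissa << 3) + 0x84) << exponent) - 0x84
    return -magnitude if code & 0x80 else magnitude


# Precompute all 256 values so per-packet decoding is a table lookup.
_ULAW_TABLE = [ulaw_to_linear(c) for c in range(256)]


def pcmu_to_pcm16(payload: bytes) -> bytes:
    """Convert a PCMU payload to little-endian 16-bit linear PCM bytes."""
    samples = [_ULAW_TABLE[b] for b in payload]
    return struct.pack(f"<{len(samples)}h", *samples)
```

In this sketch, each packet would go through `parse_rtp`, and packets with payload type 0 would have their payload passed to `pcmu_to_pcm16` before being forwarded to the speech engine.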

The AI gateway is controlled using REST APIs. This allows a business application to dynamically instruct and control how each call is handled. For example, a business application might be set up to focus on the quality of engagements for an organization’s premium customers. The application would match incoming caller ID to the premium customers’ phone number. When there is a match, those calls would be selected for transcription and real-time analysis. The same type of filtering could be used to look for new customers, customers in a certain geography, time of day, and so on. Alternatively, they could target all calls or only a percentage to sample calls, based on defined rules.
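The selection logic described above could look something like the following sketch on the business application side. The gateway’s REST API is not documented in this post, so nothing here calls it; `SelectionRule`, `should_analyze`, and the field names are hypothetical and only illustrate the premium-customer match and percentage sampling.

```python
import hashlib
from dataclasses import dataclass


def normalize(number: str) -> str:
    """Keep only the digits so differently formatted caller IDs compare equal."""
    return "".join(ch for ch in number if ch.isdigit())


@dataclass(frozen=True)
class SelectionRule:
    # Hypothetical rule: premium numbers are stored already normalized.
    premium_numbers: frozenset = frozenset()
    sample_percent: int = 0  # share of all other calls to analyze, 0-100


def should_analyze(rule: SelectionRule, caller_id: str, call_id: str) -> bool:
    """Decide whether a call should be sent for transcription and analysis."""
    if normalize(caller_id) in rule.premium_numbers:
        return True
    # Hash the call ID into a stable bucket from 0 to 99 so the sampling
    # decision is repeatable for the same call.
    bucket = int(hashlib.sha256(call_id.encode()).hexdigest(), 16) % 100
    return bucket < rule.sample_percent
```

An application using a rule like this would evaluate it when a call arrives and then instruct the gateway accordingly. Hashing the call ID rather than drawing a random number keeps the decision the same if the rule is evaluated more than once for a call.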

An application can instruct the AI gateway whether to use the Riva AI engine to convert a call’s speech to text or to use some other Riva AI function. It is even possible to instruct the AI gateway to perform different functions on the same audio stream.

Finally, the application can instruct the AI gateway to stream the AI data output either in real time or at the end of the call. It can choose one or multiple destinations. The AI gateway can provide multiple AI streams from an individual audio call to different business functions, in parallel. Businesses often have siloed organizations with distinct requirements, and each wants its own feed of data so that it can act on it independently. This allows different departments, such as compliance, operations, or security, to use the call data to address their own specific business needs.
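As an illustration of that fan-out pattern, the sketch below delivers each transcript segment to real-time subscribers as it arrives and delivers the full transcript to end-of-call subscribers when the call finishes. It is an in-process toy under stated assumptions, not the gateway’s delivery mechanism: the `TranscriptFanout` class and its method names are hypothetical, and real destinations would be network endpoints, not Python callables.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# A handler receives (call_id, text).
Handler = Callable[[str, str], None]


class TranscriptFanout:
    """Deliver one call's transcript to several independent consumers."""

    def __init__(self) -> None:
        self._realtime: List[Handler] = []
        self._end_of_call: List[Handler] = []
        self._segments: Dict[str, List[str]] = defaultdict(list)

    def subscribe(self, handler: Handler, realtime: bool = True) -> None:
        """Register a consumer for per-segment or end-of-call delivery."""
        (self._realtime if realtime else self._end_of_call).append(handler)

    def on_segment(self, call_id: str, text: str) -> None:
        """Buffer a transcript segment and push it to real-time consumers."""
        self._segments[call_id].append(text)
        for handler in self._realtime:
            handler(call_id, text)

    def on_call_end(self, call_id: str) -> None:
        """Send the joined transcript to end-of-call consumers and free the buffer."""
        transcript = " ".join(self._segments.pop(call_id, []))
        for handler in self._end_of_call:
            handler(call_id, transcript)
```

In this sketch, a fraud team could subscribe with `realtime=True` to react mid-call, while a compliance team could subscribe with `realtime=False` to archive the complete transcript.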

To demonstrate the AI gateway capabilities, we deployed a single Amazon EC2 instance in AWS. For benchmarking performance, we deployed a separate test harness and drove hundreds of simultaneous voice calls at the AI gateway instance. Using the g4dn.2xlarge EC2 instance type with a T4 GPU, running Riva EA2 ASR, we generated 220 simultaneous voice streams across 110 simultaneous calls. Each GPU provided an order-of-magnitude capacity improvement over CPUs.

The AI gateway can direct call traffic to multiple GPUs to scale well beyond 100 simultaneous calls, to support the thousands of concurrent calls that a large contact center would field.
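For a rough sense of scale, the benchmark figure can be turned into a back-of-the-envelope GPU count. The sketch below assumes capacity grows linearly with the number of GPUs and that every GPU sustains the 110 calls (220 streams) measured on a single T4. Both are simplifying assumptions, not measured results, and the function name is hypothetical.

```python
CALLS_PER_T4 = 110      # from the single-GPU benchmark in this post
STREAMS_PER_CALL = 2    # one audio stream per call participant


def gpus_needed(concurrent_calls: int, calls_per_gpu: int = CALLS_PER_T4) -> int:
    """Return the smallest GPU count that covers the given concurrent calls."""
    if concurrent_calls < 0 or calls_per_gpu <= 0:
        raise ValueError("calls must be >= 0 and calls_per_gpu must be > 0")
    # Integer ceiling division avoids floating-point rounding.
    return (concurrent_calls + calls_per_gpu - 1) // calls_per_gpu


calls = 2000
print(f"{calls} calls = {calls * STREAMS_PER_CALL} streams, "
      f"about {gpus_needed(calls)} T4 GPUs under linear scaling")
```

A production deployment would likely add headroom on top of this estimate for traffic peaks and failover.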

Conclusion

The speech-to-text use case is only the beginning. By providing the ability to convert from text back to speech and inject this into the call path to the caller, the AI gateway can provide a basis for real-time conversational AI agents to engage directly with contact center customers.

This AI gateway capability opens up a wide range of potential applications that can be tailored to specific business verticals and that go beyond the confines of the contact center environment.

If you are interested in learning more, look for our upcoming GTC session, Real-time Integration of Telephony Network with AI Speech-to-Text Translation, or contact me directly at Ribbon.