Breast cancer is the most frequently diagnosed cancer among women worldwide. It’s also the leading cause of cancer-related deaths. Identifying breast cancer at an early stage before metastasis enables more effective treatments and therefore significantly improves survival rates.

Although mammography is the most widely used imaging technique for early detection of breast cancer, it is not always available in low-resource settings. Its sensitivity also drops for women with dense breast tissue.

Breast ultrasound is often used as a supplementary imaging modality to mammography in screening settings, and as the primary imaging modality in diagnostic settings. Despite its advantages, including lower cost relative to mammography, breast ultrasound images are difficult to interpret, as evidenced by the considerable intra-reader variability. This difficulty leads to increased false-positive findings, unnecessary biopsies, and significant discomfort to patients.

Previous work using deep learning for breast ultrasound has been based predominantly on small datasets on the scale of thousands of images. Many of these efforts also rely on expensive and time-consuming manual annotation of images to obtain image-level (presence of cancer in each image) or pixel-level (exact location of each lesion) labels.

Using AI to improve breast cancer detection

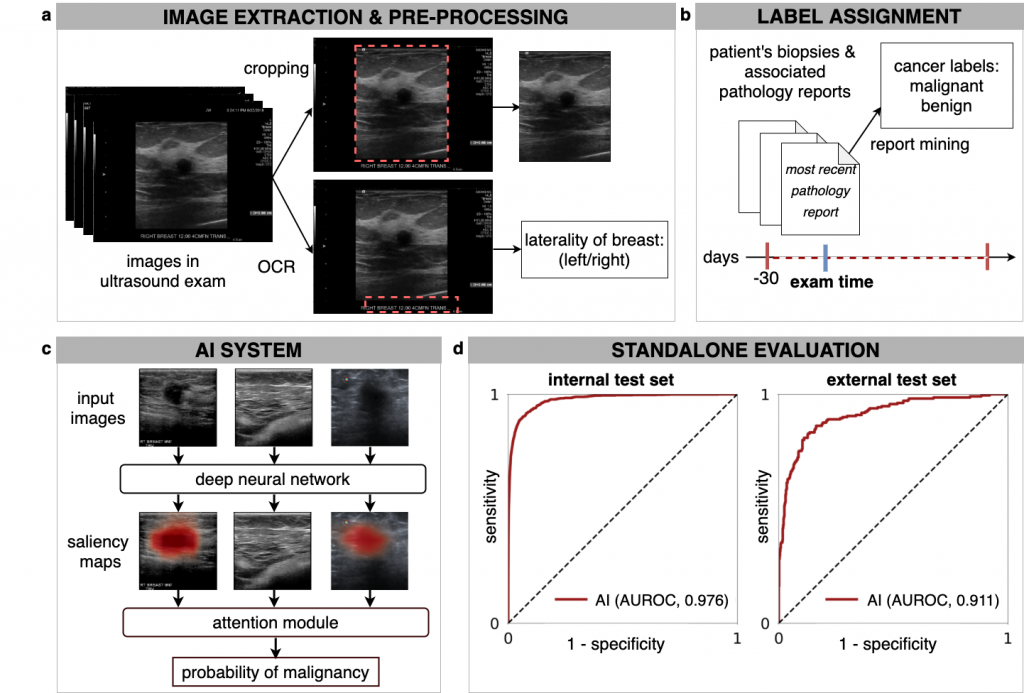

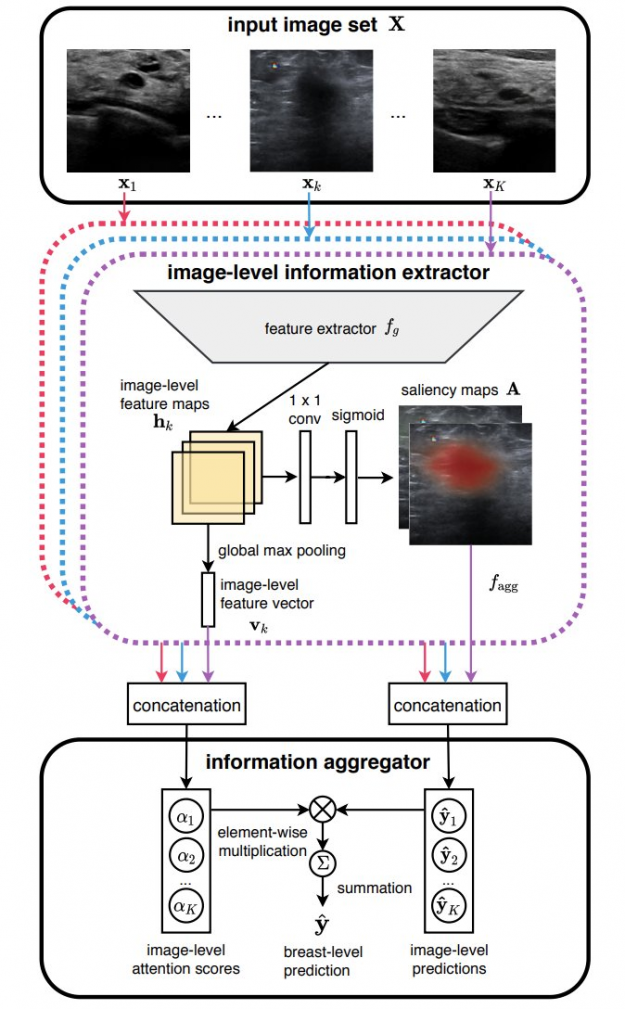

In our recent paper, Artificial Intelligence System Reduces False-Positive Findings in the Interpretation of Breast Ultrasound Exams, we leverage the full potential of deep learning and eliminate the need for manual annotations by designing a weakly supervised deep neural network whose operation resembles the diagnostic procedure of radiologists (Figure 1).

Radiologist diagnostic procedure compared to AI

The following table compares how radiologists and our AI system arrive at their predictions.

| RADIOLOGIST | AI NETWORK |
| --- | --- |
| Looks for abnormal findings in each image within a breast ultrasound exam. | Processes each image within an exam independently using a ResNet-18 model and generates a saliency map for it, indicating the most important parts. |
| Concentrates on images that contain suspicious lesions. | Assigns attention scores to each image based on its relative importance. |
| Considers signals in all images to make a final diagnosis. | Aggregates information from all images using an attention mechanism to compute the final predictions for benign and malignant findings. |

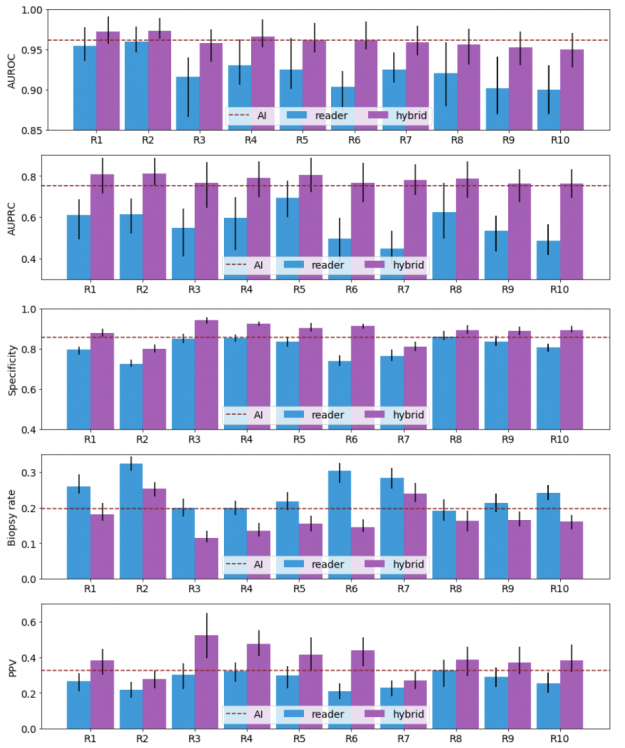
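To make the aggregation step in the table more concrete, here is a minimal sketch in plain Python. It assumes that each image already has a pair of predictions (benign, malignant) and a single unnormalized attention score, and that the exam-level prediction is the attention-weighted average of the per-image predictions. The function names and numbers are hypothetical, and the real network may compute and apply attention differently.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def aggregate_exam(image_probs, attention_logits):
    """Combine per-image predictions into one exam-level prediction.

    image_probs: list of (p_benign, p_malignant) tuples, one per image.
    attention_logits: one unnormalized importance score per image.
    """
    weights = softmax(attention_logits)
    p_benign = sum(w * p[0] for w, p in zip(weights, image_probs))
    p_malignant = sum(w * p[1] for w, p in zip(weights, image_probs))
    return weights, (p_benign, p_malignant)

# Hypothetical exam with three images; the second one looks most suspicious.
image_probs = [(0.10, 0.05), (0.20, 0.80), (0.15, 0.10)]
attention_logits = [0.0, 2.0, 0.0]

weights, exam_pred = aggregate_exam(image_probs, attention_logits)
print("attention weights:", [round(w, 3) for w in weights])
print("exam-level (benign, malignant):", tuple(round(p, 3) for p in exam_pred))
```

Because the weights sum to one, an image with a high attention score dominates the exam-level prediction, which mirrors a radiologist concentrating on the images that contain suspicious lesions.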

We compared the performance of the trained network to 10 board-certified breast radiologists in a reader study and to hybrid AI-radiologist models, each of which averages the predictions of the AI system and one radiologist.
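The hybrid model amounts to a simple average. The sketch below assumes that both the AI system and the reader express their assessment as a probability of malignancy between 0 and 1 and that the two are weighted equally. The scores are made up for illustration and are not data from the study.

```python
def hybrid_prediction(ai_prob, reader_prob):
    """Equal-weight average of the AI's and one reader's malignancy probability."""
    return 0.5 * (ai_prob + reader_prob)

# Hypothetical scores for three exams (not data from the study).
ai_probs = [0.02, 0.60, 0.10]
reader_probs = [0.30, 0.70, 0.02]

hybrid = [hybrid_prediction(a, r) for a, r in zip(ai_probs, reader_probs)]
print([round(h, 2) for h in hybrid])
```

In the first exam, a confident benign prediction from the AI pulls the reader's more suspicious score down, which is the mechanism by which a hybrid can lower false-positive calls.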

The neural network was trained with a dataset consisting of approximately four million ultrasound images on an HPC cluster powered by NVIDIA technologies. The cluster consists of 34 computation nodes, each of which is equipped with 80 CPUs and four NVIDIA V100 GPUs (16/32 GB). With this cluster, we performed a hyperparameter search by launching experiments (each taking around 300 GPU hours) over a broad range of hyperparameters.

A large-scale dataset

To complete this ambitious project, we preprocessed more than eight million breast ultrasound images collected at NYU Langone between 2012 and 2019 and extracted breast-level cancer labels by mining pathology reports.

- Training set: 3,930,347 images within 209,162 exams collected from 101,493 patients.

- Validation set: 653,924 images within 34,850 exams collected from 16,707 patients.

- Internal test set: 858,636 images within 44,755 exams collected from 25,003 patients.
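As a quick sanity check on the scale of these splits, the following snippet sums the counts listed above and derives two summary figures. Only the split sizes come from this post; the variable names are illustrative.

```python
# Split sizes copied from the list above.
splits = {
    "training": {"images": 3_930_347, "exams": 209_162, "patients": 101_493},
    "validation": {"images": 653_924, "exams": 34_850, "patients": 16_707},
    "internal test": {"images": 858_636, "exams": 44_755, "patients": 25_003},
}

# Sum each count across the three splits.
totals = {
    key: sum(split[key] for split in splits.values())
    for key in ("images", "exams", "patients")
}
images_per_exam = totals["images"] / totals["exams"]
training_share = splits["training"]["images"] / totals["images"]

print(totals)
print(f"{images_per_exam:.1f} images per exam on average")
print(f"{training_share:.1%} of images are in the training set")
```

Together, the three splits contain 5,442,907 images from 288,767 exams and 143,203 patients, or roughly 19 images per exam.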

Results: the most exciting part!

Our results show that a hybrid AI-radiologist model decreased the false-positive rate (that is, the rate of false suspicions of malignancy) by 37.4%. This would reduce the number of requested biopsies by 27.8% while maintaining the same level of sensitivity as radiologists (Figure 3).
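One way to read "maintaining the same level of sensitivity" is that the hybrid scores are thresholded so that sensitivity is at least as high as the reader's, and the false-positive rates are then compared. The sketch below illustrates that idea on a tiny made-up example. The thresholding rule, function names, and data are assumptions for illustration and do not reproduce the evaluation protocol or the numbers in the paper.

```python
def rates_at_threshold(labels, scores, threshold):
    """Return (sensitivity, false-positive rate) when scores >= threshold count as positive."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    return tp / n_pos, fp / n_neg

def fpr_at_matched_sensitivity(labels, scores, target_sensitivity):
    """Lowest false-positive rate among thresholds that reach the target sensitivity."""
    # Scanning thresholds from high to low, the first one that reaches the
    # target sensitivity also has the lowest false-positive rate.
    for threshold in sorted(set(scores), reverse=True):
        sensitivity, fpr = rates_at_threshold(labels, scores, threshold)
        if sensitivity >= target_sensitivity:
            return fpr
    raise ValueError("target sensitivity cannot be reached")

# Hypothetical data: 2 malignant and 8 benign exams.
labels = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
reader_calls = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]  # binary biopsy recommendations
hybrid_scores = [0.9, 0.7, 0.8, 0.6, 0.3, 0.2, 0.1, 0.1, 0.2, 0.3]

reader_sens, reader_fpr = rates_at_threshold(labels, reader_calls, 1)
hybrid_fpr = fpr_at_matched_sensitivity(labels, hybrid_scores, reader_sens)
relative_reduction = (reader_fpr - hybrid_fpr) / reader_fpr

print(f"reader FPR {reader_fpr:.3f}, hybrid FPR {hybrid_fpr:.3f}")
print(f"relative reduction in false positives: {relative_reduction:.1%}")
```

Note that the reduction is relative: it is measured as a fraction of the reader's own false-positive rate, not as a difference in percentage points.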

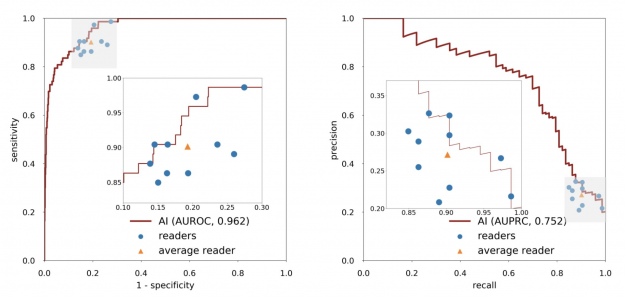

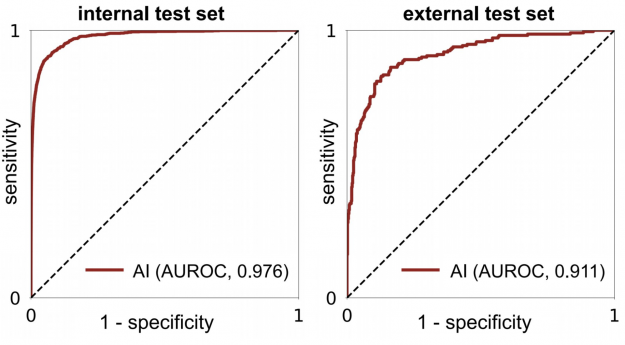

When acting independently, the AI system achieved a higher area under the receiver operating characteristic curve (AUROC) and a higher area under the precision-recall curve (AUPRC) than individual readers. Figure 3 shows how each reader compares to the network’s performance.
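For readers less familiar with AUROC, it can be interpreted as the probability that a randomly chosen malignant case receives a higher score than a randomly chosen non-malignant case. The following sketch computes it directly from that definition, counting ties as half. The labels and scores are invented for illustration, and the pairwise loop is only practical for small inputs.

```python
def auroc(labels, scores):
    """Probability that a random positive outscores a random negative; ties count half."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Hypothetical labels (1 = malignant) and model scores.
labels = [0, 0, 1, 1, 0, 1]
scores = [0.1, 0.4, 0.35, 0.8, 0.2, 0.9]
print(f"AUROC = {auroc(labels, scores):.3f}")
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect separation of malignant from non-malignant cases.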

Within the internal test set, the AI system maintained high diagnostic accuracy (0.940-0.990 AUROC) across all age groups, mammographic breast densities, and device manufacturers, including GE, Philips, and Siemens. In the biopsied population, it also achieved a 0.940 AUROC.

In an external test set collected in Egypt, the system achieved 0.911 AUROC, highlighting its ability to generalize to patient demographics not seen during training (Figure 4).

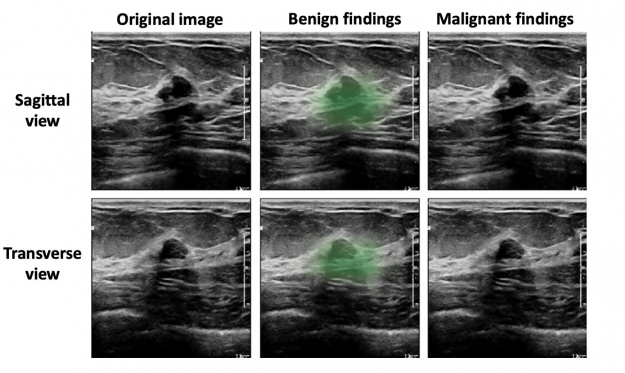

Based on qualitative assessment, the network produced appropriate localization information of benign and malignant lesions through its saliency maps. In the exam shown in Figure 4, all 10 breast radiologists thought the lesion appeared suspicious for malignancy and recommended that it undergo biopsy, while the AI system correctly classified it as benign. Most impressively, the locations of lesions were never given during training, because the network was trained in a weakly supervised manner!

Future work

For our next steps, we’d like to evaluate our system through prospective validation before it can be widely deployed in clinical practice. Prospective validation would enable us to measure the system’s potential impact on the experience of women around the world who undergo breast ultrasound examinations each year.

In conclusion, our work highlights the complementary role of an AI system in improving diagnostic accuracy by significantly decreasing unnecessary biopsies. Beyond improving radiologists’ performance, we have made technical contributions to the methodology of deep learning for medical imaging analysis.

This work would not have been possible without state-of-the-art computational resources. For more information, see the preprint, Artificial Intelligence System Reduces False-Positive Findings in the Interpretation of Breast Ultrasound Exams.