This post was written by Nathan Whitehead

A few days ago, a friend came to me with a question about floating point. Let me start by saying that my friend knows his stuff; he doesn’t ask stupid questions. So he had my attention. He was working on some biosciences simulation code, was getting answers of a different precision than he expected on the GPU, and wanted to know what was up.

Even expert CUDA programmers don’t always know all the intricacies of floating point. It’s a tricky topic. Even my friend, who is so cool that he wears sunglasses indoors, needed some help. If you look at the NVIDIA CUDA forums, questions and concerns about floating point come up regularly.

Getting a handle on how to use floating point effectively is important if you are doing numeric computations in CUDA.

In an attempt to help out, Alex Fit-Florea and I have written a short paper about floating point on NVIDIA GPUs, *Floating Point and IEEE 754 Compliance for NVIDIA GPUs*. In the paper, we talk about various issues related to floating point in CUDA:

- How the IEEE 754 standard fits in with NVIDIA GPUs

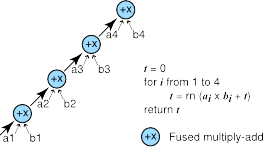

- How fused multiply-add improves accuracy

- There’s more than one way to compute a dot product (we present three)

- How to make sense of different numerical results between CPU and GPU
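
To give a feel for the fused multiply-add and dot product topics, here is a minimal sketch in plain host-side C++. It is an illustration, not code from the paper: the function names are made up for this example, and the three dot product variants (serial, serial with FMA, pairwise) are an assumption about what "more than one way" can look like. The `volatile` stores are only there to stop the compiler from quietly fusing the multiply and add on its own.

```cpp
#include <cmath>
#include <cstddef>

// Multiply, round, then subtract and round again (two roundings).
// The volatile store keeps the compiler from contracting this into an FMA.
float mul_then_sub(float a, float b, float c) {
    volatile float p = a * b;
    return p - c;
}

// Fused multiply-add: a*b - c is computed with a single rounding at the end.
float fused_mul_sub(float a, float b, float c) {
    return std::fma(a, b, -c);
}

// Dot product, variant 1: serial loop with a separately rounded multiply and add.
float dot_serial(const float* a, const float* b, std::size_t n) {
    float sum = 0.0f;
    for (std::size_t i = 0; i < n; ++i) {
        volatile float p = a[i] * b[i];
        sum += p;
    }
    return sum;
}

// Dot product, variant 2: serial loop where each step is one fused multiply-add.
float dot_fma(const float* a, const float* b, std::size_t n) {
    float sum = 0.0f;
    for (std::size_t i = 0; i < n; ++i) {
        sum = std::fma(a[i], b[i], sum);
    }
    return sum;
}

// Dot product, variant 3: pairwise (tree-shaped) summation, the order a
// parallel reduction would naturally use.
float dot_pairwise(const float* a, const float* b, std::size_t n) {
    if (n == 0) return 0.0f;
    if (n == 1) {
        volatile float p = a[0] * b[0];
        return p;
    }
    std::size_t half = n / 2;
    return dot_pairwise(a, b, half) + dot_pairwise(a + half, b + half, n - half);
}
```

As a quick check, take `x = 1.0f + std::ldexp(1.0f, -12)` and `y = 1.0f + std::ldexp(1.0f, -11)`, where `y` is `x * x` rounded to single precision. Then `mul_then_sub(x, x, y)` returns `0.0f`, while `fused_mul_sub(x, x, y)` returns `2^-24`, which is exactly the part of the product that the separate multiply rounded away. The three dot product functions compute the same mathematical sum but round at different points, so on general inputs they can disagree in the last few bits without any of them being wrong.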