Training LLMs requires periodic checkpoints. These full snapshots of model weights, optimizer states, and gradients are saved to storage so training can resume after interruptions. At scale, these checkpoints become massive (782 GB for a 70B model) and frequent (every 15-30 minutes), generating one of the largest line items in a training budget. Most AI teams chase GPU utilization, training throughput, and model quality. Almost none look at what checkpointing is costing them.

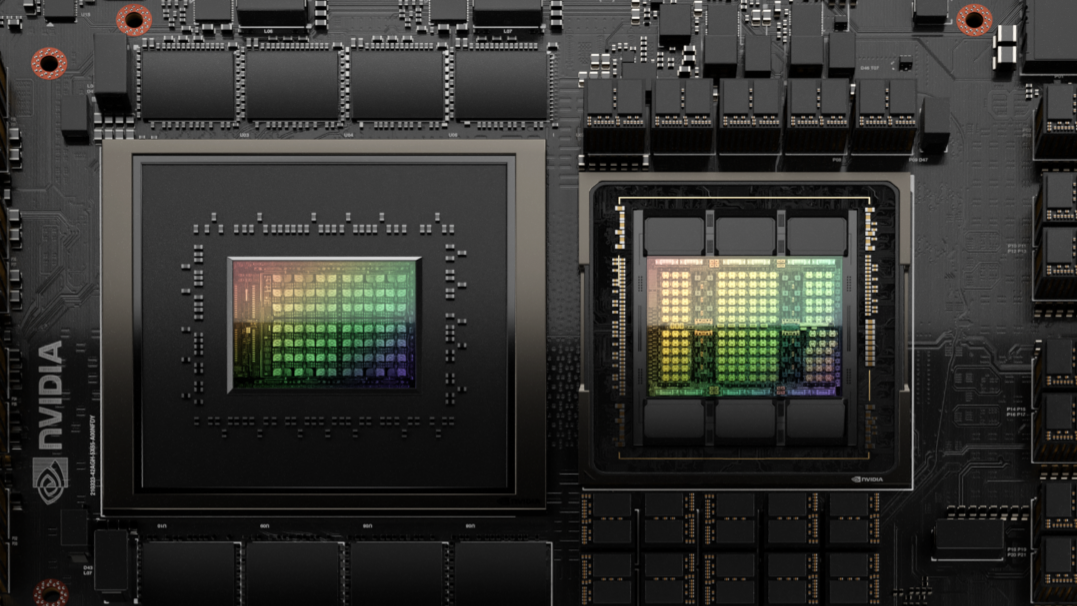

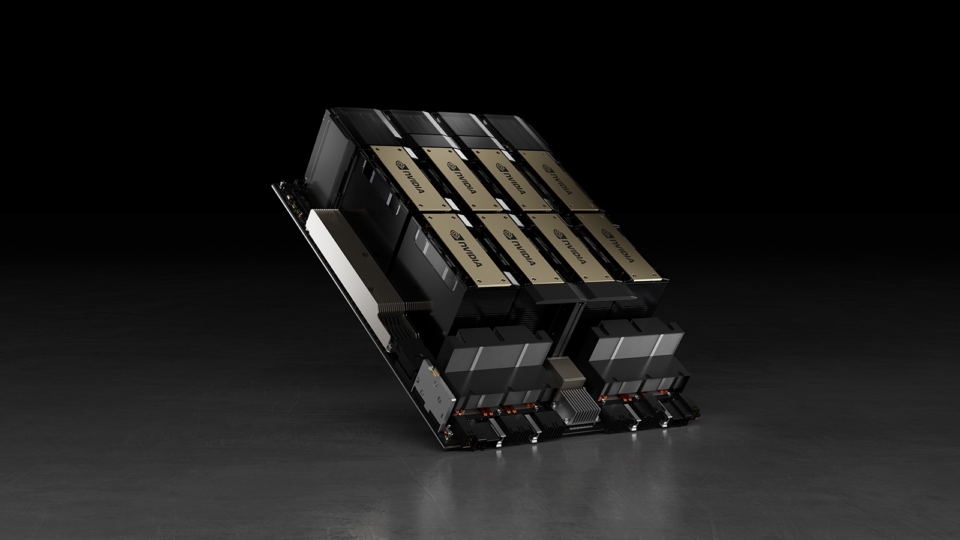

This is an expensive oversight. The synchronous checkpoint overhead of a 405B model on 128 NVIDIA DGX B200 GPUs alone can cost $200,000 a month. By introducing a lossless compression step implemented with about 30 lines of Python, we can cut checkpointing costs by about $56,000 every month. Mixture of experts (MoE) models save even more. In this post, we’ll break down how we got to that calculation and how NVIDIA nvCOMP can improve checkpointing efficiency.

Inside a single checkpoint

Hardware interruptions at 1000+ GPU scale aren’t rare. Meta reported 419 unexpected interruptions across 54 days of Llama 3 training on 16,384 NVIDIA H100 GPUs (~one every 3 hours). This is why most teams checkpoint every 15-30 minutes; it’s load-bearing infrastructure, not optional overhead.

| Component | Size | Contents |
|---|---|---|
| Model weights (BF16) | 130 GB | 70B params × 2 bytes |
| Optimizer state (FP32 momentum + variance) | 521 GB | 70B params × (4 + 4) bytes |
| Gradients (BF16) | 130 GB | Same shape as weights |
| Total per checkpoint | 782 GB | |

This breakdown surprises people who see it for the first time. The optimizer state (AdamW’s first and second moment estimates, both stored in FP32) is 4x the size of the model weights. It makes up the bulk of every checkpoint, which is why training checkpoints are far larger than a deployed model.
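The component sizes in the table follow directly from the parameter count and the bytes stored per parameter. Here is a minimal sketch of that arithmetic. The function name is illustrative, and reading "GB" as binary gigabytes (2^30 bytes) is an inference from the table's numbers, not something the post states.

```python
GIB = 2**30  # assumption: the table's "GB" figures are binary gigabytes


def checkpoint_breakdown_gb(n_params: float) -> dict:
    """Per-component checkpoint size for BF16 weights/gradients and FP32 AdamW state."""
    sizes = {
        "weights_bf16": n_params * 2 / GIB,          # 2 bytes per parameter
        "optimizer_fp32": n_params * (4 + 4) / GIB,  # momentum + variance, 4 bytes each
        "gradients_bf16": n_params * 2 / GIB,        # same shape as the weights
    }
    sizes["total"] = sum(sizes.values())
    return sizes


breakdown = checkpoint_breakdown_gb(70e9)
for name, size in breakdown.items():
    print(f"{name:>15}: {size:7.1f} GB")
```

Under that assumption, a 70B-parameter model lands within about 1 GB of each row in the table.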

Checkpointing every 30 minutes, standard practice for fault tolerance, means 48 checkpoints per day. Over a month of continuous training:

782 GB × 48/day × 30 days = 1.13 PB written to storage per month

And here’s the part most teams miss. During every single synchronous checkpoint write, all 8 GPUs in our baseline setup sit completely idle. Nothing overlaps with a checkpoint save; the training loop blocks until the last byte hits storage.

At $4.40/GPU/hour (representative of on-demand B200 cloud pricing) and 5 GB/s shared storage throughput (typical for Lustre or GPFS over InfiniBand), we do the math to figure out the cost of idle GPUs during these waiting periods:

- Write time per checkpoint: 782 GB / 5 GB/s = 156.4 seconds (~2.6 minutes)

- Total wait time per month: 156.4s × 48/day × 30 days = 225,216 seconds = 62.6 hours

- Cost of idle GPUs during these waiting periods: 62.6 hours × 8 GPUs × $4.40/GPU/hour = $2,203/month

The wait time for synchronous checkpoints adds up to over $2,200/month—before counting storage fees.

Scale that to a 64-GPU cluster, and the monthly cost jumps to over $17,500. At 128 GPUs, training a 405B model, idle costs exceed $200,000/month. The idle-GPU cost dominates storage fees by an order of magnitude.
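The idle-cost arithmetic above can be sketched as a small function. The function and parameter names are illustrative. The defaults are the figures quoted in this post ($4.40/GPU/hour, 48 checkpoints per day, 30 days), and the 4,529 GB figure is the 405B checkpoint size used in the savings table later in the post.

```python
def monthly_idle_cost(ckpt_gb, storage_gbps, n_gpus,
                      usd_per_gpu_hour=4.40, ckpts_per_day=48, days=30):
    """Dollar cost of GPUs blocked on synchronous checkpoint writes for one month."""
    write_seconds = ckpt_gb / storage_gbps                   # one blocking write
    idle_hours = write_seconds * ckpts_per_day * days / 3600
    return idle_hours * n_gpus * usd_per_gpu_hour


cost_70b_8 = monthly_idle_cost(782, 5, n_gpus=8)
cost_70b_64 = monthly_idle_cost(782, 5, n_gpus=64)
cost_405b_128 = monthly_idle_cost(4529, 5, n_gpus=128)

for label, cost in [("70B on 8 GPUs", cost_70b_8),
                    ("70B on 64 GPUs", cost_70b_64),
                    ("405B on 128 GPUs", cost_405b_128)]:
    print(f"{label:>17}: ${cost:,.0f}/month")
```

Because the cost is linear in both write time and GPU count, a larger checkpoint on a larger cluster compounds quickly.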

Asynchronous checkpointing eases part of the problem, but framework support is still maturing, adoption is not yet widespread, and managing memory watermarks remains a challenge. Checkpoint compression is a complementary technique that is easy to adopt. It also shortens cold starts: restoring state is a serial step, so smaller checkpoints load faster.

NVIDIA nvCOMP introduces GPU-accelerated compression

The core idea is simple. Compress the checkpoint before it leaves GPU memory. No CPU roundtrip. No extra data movement. The data is already on the GPU, so we can compress it there.

NVIDIA nvCOMP is a GPU-accelerated lossless compression library that does exactly this. By providing a single library with support for both standard algorithms, like Zstandard (ZSTD), and highly optimized, GPU-specific formats, like gANS, it tackles data bottlenecks natively on the device. Developers can easily integrate high-throughput compression directly into their Python workflows (such as PyTorch or TensorFlow).

Measured checkpoint compression ratios

We fine-tuned two model architectures (dense transformer and mixture of experts) for 50 steps, saved full training checkpoints (weights + AdamW optimizer state + gradients), and compressed every component with nvCOMP on NVIDIA H200 and B200 GPUs. The compression ratios depend on data, not hardware—they’re identical across all GPUs.

ZSTD is a widely adopted general-purpose compression algorithm developed by Meta that balances strong compression ratios with reasonable speed. It’s the same algorithm behind the zstd command-line tool on Linux and is used extensively in databases, file systems, and data pipelines. Asymmetric numeral systems (ANS) is a modern entropy coding technique that nvCOMP implements as a GPU-native codec (gANS) optimized for raw throughput. Both are lossless and exploit statistical patterns in the distribution of 1-byte and 2-byte words (entropy coding) rather than just matching repeated byte sequences.

The key difference is the trade-off. ZSTD squeezes out slightly higher compression ratios (1-2% higher than ANS in our benchmarks) but compresses at ~16-19 GB/s on B200. ANS achieves nearly the same ratios at 10x the throughput (181-190 GB/s). As we’ll show below, which to pick depends on how fast your storage is.

| Checkpoint component | % of Ckpt | ZSTD | gANS | Why |
|---|---|---|---|---|
| BF16 model weights | 17% | 1.27-1.28× | 1.25-1.27× | High-entropy trained floats |
| FP32 optimizer momentum | 33% | 1.25-1.39× | 1.23-1.37× | Shared exponent bytes in moving averages |
| FP32 optimizer variance | 33% | 1.32-1.48× | 1.30-1.45× | Non-negative, small values |
| BF16 gradients | 17% | 1.25-1.46× | 1.23-1.44× | Architecture-dependent sparsity |
| Full checkpoint (dense) | 100% | ~1.27× | ~1.25× | Dense: no natural sparsity |
| Full checkpoint (MoE) | 100% | ~1.40× | ~1.39× | MoE: expert routing creates gradient sparsity |

The ranges reflect the key finding that compression depends on model architecture, not hardware.

Not all compression algorithms work on floating-point tensors. Byte-level codecs like LZ4 and Bitcomp look for repeated byte sequences, like finding duplicate words in a document. But trained neural network parameters look essentially random at the byte level, so these codecs find almost nothing to compress (~1.00× on dense checkpoints in our benchmarks).

ZSTD and ANS use entropy coding, which exploits statistical patterns in how frequently certain byte values occur, even when no exact sequences repeat. This is why they achieve 1.25-1.40× on the same data where byte-level codecs achieve nothing. Between the two, ZSTD delivers slightly stronger ratios (1-2% higher in our benchmarks), while ANS matches it closely at 10× the compression throughput. This trade-off matters when storage gets faster, as we’ll show. By architecture, the measured ratios break down as follows:

- Dense transformers (Llama, GPT, Qwen): All parameters participate in every forward pass. ~0% exact zeros → ~1.25× ANS, ~1.27× ZSTD.

- MoE models (Mixtral, DeepSeek, OLMoE): Only a subset of experts activate per token. 12-14% exact zeros → ~1.39× ANS, ~1.40× ZSTD.
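To see why entropy coding finds savings where sequence matching does not, it helps to look at byte-value frequencies directly. The sketch below uses synthetic Gaussian values as a stand-in for trained weights (an assumption for illustration, not the benchmark data). It truncates them to BF16 and measures the Shannon entropy of each byte position. The high byte, which holds the sign and most of the exponent, takes only a handful of values, so it carries far fewer than 8 bits of information.

```python
import math
import random
import struct
from collections import Counter


def entropy_bits(values):
    """Shannon entropy, in bits per symbol, of a sequence of byte values."""
    counts = Counter(values)
    total = sum(counts.values())
    return -sum(c / total * math.log2(c / total) for c in counts.values())


rng = random.Random(0)  # fixed seed so the run is repeatable
hi_bytes, lo_bytes = [], []
for _ in range(20_000):
    # Big-endian FP32; its top two bytes are the BF16 bit pattern (truncated, not rounded).
    raw = struct.pack(">f", rng.gauss(0.0, 0.02))
    hi_bytes.append(raw[0])  # sign bit + upper 7 exponent bits
    lo_bytes.append(raw[1])  # lowest exponent bit + 7 mantissa bits

h_hi = entropy_bits(hi_bytes)
h_lo = entropy_bits(lo_bytes)
# Estimate for a coder that models each byte position independently.
ideal_ratio = 16 / (h_hi + h_lo)
print(f"high byte: {h_hi:.2f} bits, low byte: {h_lo:.2f} bits, ideal ratio ~{ideal_ratio:.2f}x")
```

The exact ratio on real checkpoints depends on the actual value distribution, which is why the measured figures above differ from what this toy distribution gives.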

Our benchmarks use BF16 weights and FP32 optimizer state (AdamW), which is standard for most large-scale training today. Teams using FP8 training (e.g., with NVIDIA Transformer Engine) will see lower compression ratios, as reduced-precision data carries higher entropy and less statistical redundancy for lossless compression to exploit. At FP4 (NVFP4), quantization already removes most redundancy—lossless compression provides negligible additional benefit (~5%). The optimizer state, however, remains in FP32 regardless of weight precision, and that’s where the bulk of checkpoint size and compression savings come from.

The math: How nvCOMP saves money

Using our 70B dense model with measured ZSTD 1.27× ratio:

- Without nvCOMP: Write 782 GB to disk at 5 GB/s → 156 seconds of GPU wait

- With nvCOMP ZSTD (1.27x): Compress 782 GB at ~16 GB/s (~49s), write 616 GB at 5 GB/s (123s) → ~123 seconds of GPU wait

Why does the 49-second compression time disappear? Because compression and storage writes can be pipelined: while one chunk writes to disk, the next chunk compresses on the GPU. As long as the codec compresses faster than storage can absorb the output, the compression step is fully hidden behind the write, and the GPU wait equals the write time alone. At 5 GB/s shared storage, ZSTD at 16 GB/s is 3× faster than the write, so compression overlaps completely. The wait drops from 156 to 123 seconds: 21% smaller files, 33 seconds faster per checkpoint. Over a month, that is 33 s × 48/day × 30 days = 47,520 fewer seconds of idle time, or 13+ hours reclaimed. MoE at 1.40× does even better: 29% smaller, 44 seconds faster, 17+ hours reclaimed.

When storage gets faster, codec throughput matters

Your storage speed depends on infrastructure: 2-10 GB/s for shared network filesystems (Lustre, GPFS, NFS), or 15-50+ GB/s for GPUDirect Storage (GDS) with local NVMe.

At faster storage, codec throughput determines whether compression helps or hurts:

| Storage speed | No compression | ZSTD (1.27×, ~16 GB/s) | ANS (1.25×, ~181 GB/s) | Winner |
|---|---|---|---|---|
| 5 GB/s | 156s | 123s (−21%) | 125s (−20%) | ZSTD |
| 15 GB/s | 52s | 49s (−6%) | 42s (−20%) | ANS |
| 25 GB/s | 31s | 49s (+57%) ⚠️ | 25s (−20%) | ANS |

To illustrate, we compare checkpoint write times for a 70B dense model (782 GB) on a B200 GPU across three storage speeds. Because compression and writing are pipelined — one chunk compresses while the previous chunk writes to disk — the total GPU wait time equals whichever stage is slower: pipelined wait = max(compress time, write time).

In Table 3, once storage bandwidth exceeds ZSTD’s ~16 GB/s compression throughput on B200, the compression step becomes the bottleneck, and wait time increases. ANS at 181-190 GB/s never hits this wall. At 25 GB/s with 64 B200s, ANS nets ~$2,800/month while ZSTD nets only ~$240/month. For shared file systems (5-10 GB/s), ZSTD remains the right default. For GDS/high-performance storage at 15+ GB/s, ANS is the clear winner.
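The pipelined-wait rule, max(compress time, write time), is easy to check against the storage-speed table. This is a minimal sketch with illustrative names; the ratios and codec throughputs are the ones quoted in the table header.

```python
def pipelined_wait_s(ckpt_gb, storage_gbps, ratio=1.0, codec_gbps=None):
    """GPU wait for one checkpoint when compression and writing overlap."""
    write_s = ckpt_gb / ratio / storage_gbps  # time to write the compressed bytes
    compress_s = 0.0 if codec_gbps is None else ckpt_gb / codec_gbps
    return max(compress_s, write_s)           # the slower stage sets the wait


# codec name -> (compression ratio, compression throughput in GB/s)
CODECS = {"none": (1.0, None), "ZSTD": (1.27, 16), "ANS": (1.25, 181)}

table = {
    speed: {name: round(pipelined_wait_s(782, speed, ratio, gbps))
            for name, (ratio, gbps) in CODECS.items()}
    for speed in (5, 15, 25)
}
for speed, row in table.items():
    print(f"{speed:>2} GB/s: {row}")
```

At 25 GB/s the ZSTD row is pinned at its compression time, which is the crossover the table flags with a warning.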

Measured savings in Figure 4 assume GPU wait reduction + storage at $0.14/GB/month, 96 retained checkpoints, and 5 GB/s shared storage:

Dense models (measured on Qwen2.5-3B):

| Model | GPUs | Checkpoint size | ZSTD (1.27×): size / savings | ANS (1.25×): size / savings |
|---|---|---|---|---|
| Llama 3 8B | 8× B200 | 89 GB | 70 GB / ~$300/mo | 71 GB / ~$290/mo |
| Llama 3 70B | 8× B200 | 782 GB | 616 GB / ~$2,700/mo | 626 GB / ~$2,500/mo |
| Llama 3 70B | 64× B200 | 782 GB | 616 GB / ~$6,000/mo | 626 GB / ~$5,600/mo |
| Llama 3 405B | 128× B200 | 4,529 GB | 3,566 GB / ~$56,000/mo | 3,623 GB / ~$53,000/mo |

MoE models (measured on OLMoE-1B-7B):

| Model | GPUs | Checkpoint size | ZSTD (1.40×): size / savings | ANS (1.39×): size / savings |
|---|---|---|---|---|
| Mixtral 8x22B (141B) | 64× B200 | 1,575 GB | 1,125 GB / ~$16,000/mo | 1,133 GB / ~$16,000/mo |
| DeepSeek-V3 (671B) | 256× B200 | 7,490 GB | 5,350 GB / ~$222,000/mo | 5,389 GB / ~$218,000/mo |

The savings scale with model size (bigger checkpoints = more to compress) and GPU count (more GPUs idle during waits = higher cost per second of wait time). The second factor is what makes this particularly brutal at scale—256 idle B200s cost $1,126/hour. Every second you shave off a checkpoint write saves money.
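The savings columns can be approximated from the stated assumptions: GPU wait reduction, storage at $0.14/GB/month, 96 retained checkpoints, 5 GB/s storage, and $4.40/GPU/hour. The formula below is a reconstruction for illustration, since the post does not spell out the exact calculation. It assumes compression is fully hidden behind the write and that checkpoints are taken 48 times a day for 30 days.

```python
def monthly_savings_usd(ckpt_gb, ratio, n_gpus, storage_gbps=5.0,
                        usd_per_gpu_hour=4.40, usd_per_gb_month=0.14,
                        retained_ckpts=96, ckpts_per_month=48 * 30):
    """Idle-GPU savings plus storage savings from writing compressed checkpoints."""
    saved_gb = ckpt_gb - ckpt_gb / ratio  # bytes no longer written per checkpoint
    idle_hours_saved = saved_gb / storage_gbps * ckpts_per_month / 3600
    gpu_savings = idle_hours_saved * n_gpus * usd_per_gpu_hour
    storage_savings = saved_gb * retained_ckpts * usd_per_gb_month
    return gpu_savings + storage_savings


savings_70b = monthly_savings_usd(782, 1.27, n_gpus=8)
savings_405b = monthly_savings_usd(4529, 1.27, n_gpus=128)
savings_dsv3 = monthly_savings_usd(7490, 1.40, n_gpus=256)
print(f"70B/8 GPUs: ${savings_70b:,.0f}  405B/128 GPUs: ${savings_405b:,.0f}  "
      f"DeepSeek-V3/256 GPUs: ${savings_dsv3:,.0f}")
```

With these inputs the reconstruction lands within rounding of the ZSTD column. On small clusters the storage term dominates, and on large ones the idle-GPU term does.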

The industry is moving toward MoE architectures (like DeepSeek-V3, Mixtral, and Grok), which produce larger and more compressible checkpoints. Compression is no longer a nice-to-have, and a few lines of Python are enough to capture the savings.

Integration: ~30 Lines of Python

The integration effort is minimal. Here’s a drop-in replacement for torch.save / torch.load:

```shell
# CUDA 13.x (Blackwell: B200, B300, GB200, GB300)
pip install nvidia-nvcomp-cu13 cupy-cuda13x

# CUDA 12.x (Hopper: H100, H200)
pip install nvidia-nvcomp-cu12 cupy-cuda12x
```

```python
import torch
import cupy as cp
from nvidia import nvcomp

codec = nvcomp.Codec(algorithm="Zstd")  # or "ANS" for near-ZSTD ratios at 10× throughput


def _compress_state(obj):
    """Recursively compress GPU tensors in a state dict tree."""
    if isinstance(obj, torch.Tensor) and obj.is_cuda:
        flat = obj.contiguous().reshape(-1).view(torch.uint8)
        # torch → cupy (zero-copy) → nvcomp (zero-copy) → GPU compress
        compressed = codec.encode(nvcomp.as_array(cp.from_dlpack(flat)))
        # .cpu() → bytes(): official nvcomp pattern (Cell 15 of nvcomp_basic)
        return {"__nvcomp__": True,
                "data": bytes(compressed.cpu()),
                "shape": list(obj.shape), "dtype": str(obj.dtype)}
    if isinstance(obj, dict):
        return {k: _compress_state(v) for k, v in obj.items()}
    if isinstance(obj, (list, tuple)):
        return type(obj)(_compress_state(v) for v in obj)
    return obj


def _decompress_state(obj, device):
    """Recursively decompress nvCOMP tensors back to GPU."""
    if isinstance(obj, dict) and obj.get("__nvcomp__"):
        # codec.decode(bytes): official nvcomp pattern (Cell 27 of nvcomp_basic)
        decompressed = codec.decode(obj["data"])
        # nvcomp → cupy (zero-copy) → torch (zero-copy), stays on GPU
        dtype = getattr(torch, obj["dtype"].replace("torch.", ""))
        return torch.from_dlpack(cp.asarray(decompressed)).view(dtype).reshape(obj["shape"])
    if isinstance(obj, torch.Tensor):
        return obj.to(device)
    if isinstance(obj, dict):
        return {k: _decompress_state(v, device) for k, v in obj.items()}
    if isinstance(obj, (list, tuple)):
        return type(obj)(_decompress_state(v, device) for v in obj)
    return obj


def save_compressed_checkpoint(model, optimizer, path):
    state = {"model": model.state_dict(), "optimizer": optimizer.state_dict()}
    torch.save(_compress_state(state), path)


def load_compressed_checkpoint(path, device="cuda"):
    return _decompress_state(
        torch.load(path, map_location="cpu", weights_only=True), device)
```

Swap torch.save for save_compressed_checkpoint, and torch.load for load_compressed_checkpoint.

That’s it. No changes to your training loop, model code, or optimizer configuration.

If you’re using a training framework with custom checkpoint hooks (DeepSpeed, Megatron), the same pattern applies: walk the state dict, compress GPU tensors, and serialize.

For teams using NVIDIA GPUDirect Storage (GDS), there’s an even faster path. nvCOMP can compress directly into a GDS buffer, writing compressed data straight from GPU memory to NVMe storage. There’s zero CPU involvement.

NVIDIA Blackwell Decompression Engine

NVIDIA Blackwell GPUs include a dedicated Blackwell Decompression Engine (DE) that decompresses LZ4, Snappy, and Deflate at up to 280 GB/s with zero SM overhead. However, the byte-level codecs achieve ~1.00x on floating-point tensors. For checkpoint compression, the entropy-based codecs (ANS and ZSTD) on SMs deliver the real savings. During restores, GPUs are idle and waiting for data before resuming training. SM availability isn’t a constraint, and ANS on SMs delivers comparable throughput (247-264 GB/s) while producing significantly smaller files.

Get started

Checkpoint compression is one of the highest-ROI optimizations you can add to your training pipeline and one of the easiest:

- Install nvCOMP: pip install nvidia-nvcomp-cu13 (Blackwell) or pip install nvidia-nvcomp-cu12 (Hopper)

- Try it now: Drop the save_compressed_checkpoint / load_compressed_checkpoint functions from this post into your training loop. No other changes needed.

- Explore the code: Samples and benchmarks on GitHub.

- Read the docs: nvCOMP documentation

- Go deeper: For teams using GPUDirect Storage, nvCOMP can compress directly into GPU buffers that GDS writes to NVMe with zero CPU involvement. For details, see:

Your checkpoints are the largest files in your training pipeline. Compress them.