A computation is considered deterministic if multiple runs with the same input data produce the same bitwise result. While this may seem like a simple property to guarantee, it can be difficult to achieve in practice, especially in parallel programming and floating-point arithmetic. This is because floating-point addition and multiplication aren’t strictly associative—that is, (a + b) + c may not equal a + (b + c)—due to rounding that occurs when intermediate results are stored with finite precision.

With NVIDIA CUDA Core Compute Libraries (CCCL) 3.1, CUB—a low-level CUDA library for speed-of-light parallel device algorithms—added a new single-phase API that accepts an execution environment, enabling users to customize algorithm behavior. We can use this environment to configure the reduce algorithm’s determinism property. This can only be done through the new single-phase API, since the two-phase API doesn’t accept an execution environment.

The following code shows how to specify the determinism level in CUB (find the complete example online using compiler explorer).

auto input = thrust::device_vector<float>{0.0f, 1.0f, 2.0f, 3.0f};

auto output = thrust::device_vector<float>(1);

auto env = cuda::execution::require(cuda::execution::determinism::not_guaranteed); // can be not_guaranteed, run_to_run (default), or gpu_to_gpu

auto error = cub::DeviceReduce::Sum(input.begin(), output.begin(), input.size(), env);

if (error != cudaSuccess)

{

std::cerr << "cub::DeviceReduce::Sum failed with status: " << error << std::endl;

}

assert(output[0] == 6.0f);

We begin by specifying the input and output vectors. We then use cuda::execution::require() to construct a cuda::std::execution::env object, setting the determinism level to not_guaranteed.

There are three determinism levels available for reduction, which are:

not_guaranteedrun_to_rungpu_to_gpu

Determinism not guaranteed

In floating-point reductions, the result can depend on the order in which elements are combined. If two runs apply the reduction operator in different orders, the final values may differ slightly. In many applications, these minor differences are acceptable. By relaxing the requirement for strict determinism, the reduction implementation can rearrange the operations in any order, which can improve runtime performance.

In CUB, not_guaranteed relaxes the determinism level. This enables atomic operations—whose unordered execution across threads results in a different order of operations between runs—to compute both the block-level partial aggregates and the final reduction value. The entire reduction can also be performed in a single kernel launch, since the atomic operations combine the block-level partial aggregates into the result.

The nondeterministic reduce variant is typically faster than the run-to-run deterministic version—particularly for smaller input arrays, where performing the reduction in a single kernel reduces latency from multiple kernel launches, minimizes extra data movement, and avoids additional synchronization. The tradeoff is that repeated runs may yield slightly different results due to the lack of deterministic behavior.

Run-to-run determinism

While nondeterministic reductions offer potential performance gains, CUB also provides a mode that guarantees consistent results across runs. By default, cub::DeviceReduce is run-to-run deterministic, which corresponds to setting the determinism level to run_to_run in the single-phase API. In this mode, multiple invocations with the same input, kernel launch configuration, and GPU will produce identical outputs.

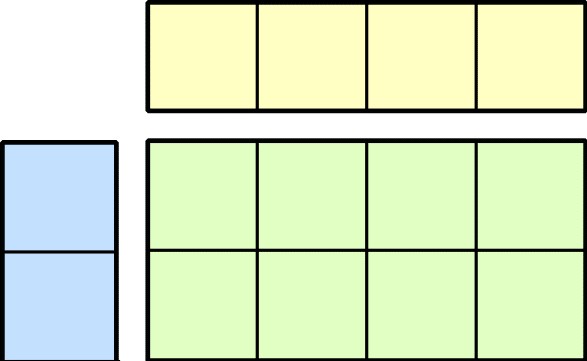

This determinism is achieved by structuring the reduction as a fixed, hierarchical tree rather than relying on atomics, whose update order can vary across runs. At each stage of the reduction, elements are first combined within individual threads. The intermediate results are then reduced across threads within a warp using shuffle instructions, followed by a block-wide reduction using shared memory. Finally, a second kernel aggregates the per-block results to produce the final output. Because this sequence is predetermined and independent of the relative timing of thread execution, the same inputs, kernel configuration, and GPU yield the same bitwise result.

GPU-to-GPU determinism

For applications that require the highest level of reproducibility, CUB also provides GPU-to-GPU determinism, which guarantees identical results across multiple runs with the same input on different GPUs. This mode corresponds to setting the determinism level to gpu_to_gpu.

To achieve this level of determinism, CUB uses a Reproducible Floating-point Accumulator (RFA), a solution based on the NVIDIA GTC 2024 session, Restoring the Scientific Method to HPC: High Performance Reproducible Parallel Reductions. The RFA counters floating-point non-associativity—which arises when adding numbers with different exponents—by grouping all input values into a fixed number of exponent ranges (the default is three bins). This fixed, structured accumulation order ensures the final result is independent of GPU architecture.

The accuracy of the final result depends on the number of bins: more bins provide greater accuracy, but also increase the number of intermediate summations, which can reduce performance. The current implementation defaults the number of bins to three, an optimal default providing balanced performance and accuracy. It’s worth noting that this configuration is not just strictly deterministic, but also guarantees numerically correct results, providing tighter error bounds than the standard pairwise summation traditionally used in parallel reductions.

How results vary based on the determinism levels

The three determinism levels differ in the amount of variation they produce across multiple runs:

- Not-guaranteed determinism produces slightly different summation values on each invocation.

- Run-to-run determinism ensures the same value for every invocation on a single GPU, but the result may vary if a different GPU is used.

- GPU-to-GPU determinism guarantees that the summation value is identical for every invocation, regardless of which GPU executes the reduction.

This is shown in Figure 1, with the summation of an array for each determinism level—represented by green, blue, and red circles—plotted against the run number. A flat horizontal line shows that the reduction produces the same result.

Determinism performance comparison

The level of determinism selected affects the performance of cub::DeviceReduce. Not-guaranteed determinism, with its relaxed requirements, provides the highest performance. The default run-to-run determinism delivers good performance but is slightly slower than not-guaranteed determinism. GPU-to-GPU determinism, which enforces the strictest reproducibility across different GPUs, can significantly reduce performance, increasing execution time by 20% to 30% for large problem sizes.

Figure 2 compares the performance of the different determinism requirements for float32 and float64 inputs on an NVIDIA H200 GPU (lower is better). They clearly show how the choice of determinism level impacts execution time across different data types.

Conclusion

With the introduction of the single-phase API and explicit determinism levels, CUB provides an enhanced toolbox for controlling both the behavior and performance of reduction algorithms. Users can choose the level of determinism that best suits their needs: from the high-performance and flexible, not-guaranteed mode, to the reliable run-to-run default, and up to the strictest GPU-to-GPU reproducibility.

Determinism in CUB isn’t limited to reductions. We plan to extend these capabilities to additional algorithms for developers to control reproducibility across a wider range of parallel CUDA primitives. For updates and discussion, see the ongoing GitHub issue on expanded determinism support, to follow our roadmap, and provide feedback on algorithms you’d like to see deterministic versions of.