Posts by Maggie Zhang

Conversational AI

Jul 14, 2025

Enhancing Multilingual Human-Like Speech and Voice Cloning with NVIDIA Riva TTS

While speech AI is used to build digital assistants and voice agents, its impact extends far beyond these applications. Core technologies like text-to-speech...

10 MIN READ

Agentic AI / Generative AI

Feb 26, 2025

Building a Simple VLM-Based Multimodal Information Retrieval System with NVIDIA NIM

In today’s data-driven world, the ability to retrieve accurate information from even modest amounts of data is vital for developers seeking streamlined,...

15 MIN READ

Agentic AI / Generative AI

Oct 22, 2024

Scaling LLMs with NVIDIA Triton and NVIDIA TensorRT-LLM Using Kubernetes

Large language models (LLMs) have been widely used for chatbots, content generation, summarization, classification, translation, and more. State-of-the-art LLMs...

16 MIN READ

AR / VR

Jan 12, 2023

Autoscaling NVIDIA Riva Deployment with Kubernetes for Speech AI in Production

Speech AI applications, from call centers to virtual assistants, rely heavily on automatic speech recognition (ASR) and text-to-speech (TTS). ASR can process...

13 MIN READ

Data Center / Cloud

Aug 30, 2022

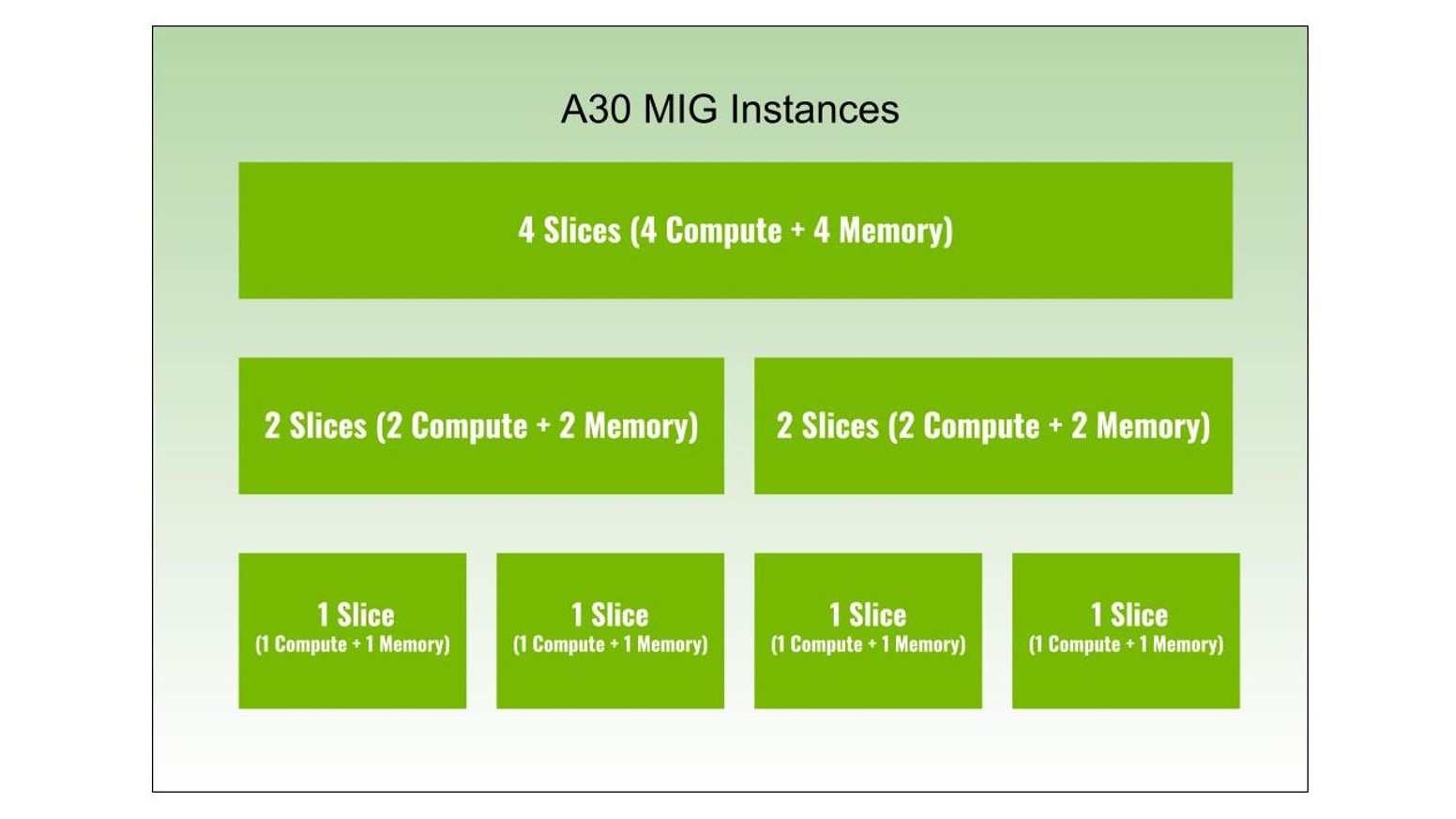

Dividing NVIDIA A30 GPUs and Conquering Multiple Workloads

Multi-Instance GPU (MIG) is an important feature of NVIDIA H100, A100, and A30 Tensor Core GPUs, as it can partition a GPU into multiple instances. Each...

9 MIN READ

Data Center / Cloud

May 11, 2022

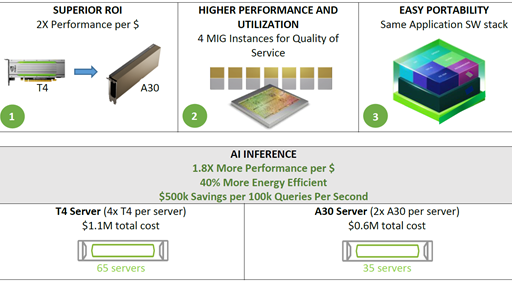

Accelerating AI Inference Workloads with NVIDIA A30 GPU

NVIDIA A30 GPU is built on the latest NVIDIA Ampere Architecture to accelerate diverse workloads like AI inference at scale, enterprise training, and HPC...

6 MIN READ