Posts by Ashwin Nanjappa

Data Center / Cloud

Sep 09, 2025

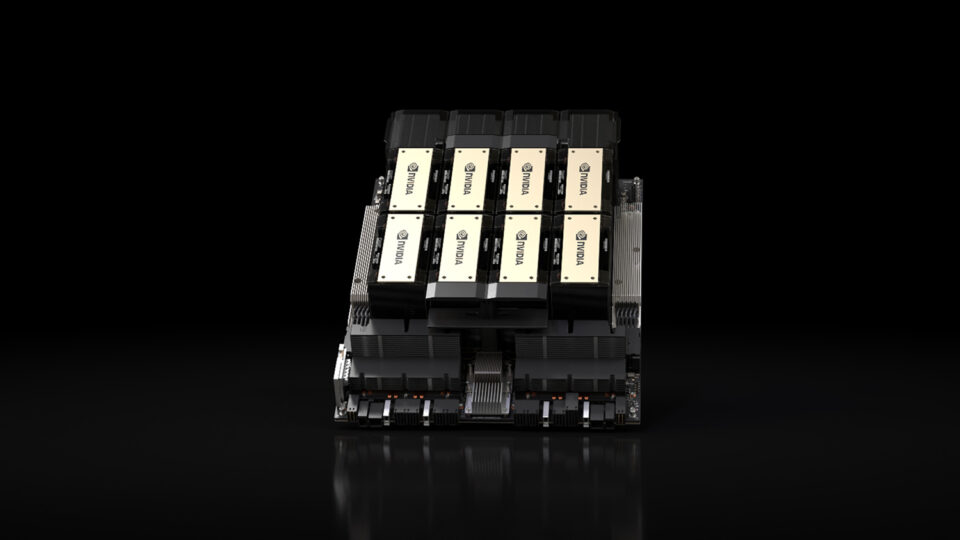

NVIDIA Blackwell Ultra Sets New Inference Records in MLPerf Debut

As large language models (LLMs) grow larger, they get smarter, with open models from leading developers now featuring hundreds of billions of parameters. At the...

10 MIN READ

Data Center / Cloud

Apr 02, 2025

NVIDIA Blackwell Delivers Massive Performance Leaps in MLPerf Inference v5.0

The compute demands for large language model (LLM) inference are growing rapidly, fueled by the combination of growing model sizes, real-time latency...

10 MIN READ

Data Center / Cloud

Aug 28, 2024

NVIDIA Blackwell Platform Sets New LLM Inference Records in MLPerf Inference v4.1

Large language model (LLM) inference is a full-stack challenge. Powerful GPUs, high-bandwidth GPU-to-GPU interconnects, efficient acceleration libraries, and a...

13 MIN READ

Agentic AI / Generative AI

Mar 27, 2024

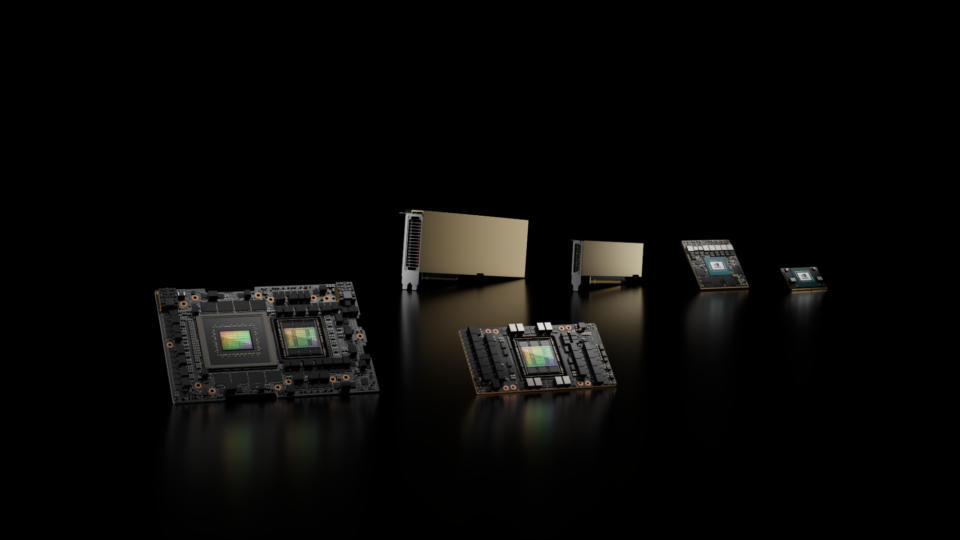

NVIDIA H200 Tensor Core GPUs and NVIDIA TensorRT-LLM Set MLPerf LLM Inference Records

Generative AI is unlocking new computing applications that greatly augment human capability, enabled by continued model innovation. Generative AI...

11 MIN READ

Data Center / Cloud

Sep 09, 2023

Leading MLPerf Inference v3.1 Results with NVIDIA GH200 Grace Hopper Superchip Debut

AI is transforming computing, and inference is how the capabilities of AI are deployed in the world’s applications. Intelligent chatbots, image and video...

13 MIN READ

Networking / Communications

Jul 06, 2023

New MLPerf Inference Network Division Showcases NVIDIA InfiniBand and GPUDirect RDMA Capabilities

In MLPerf Inference v3.0, NVIDIA made its first submissions to the newly introduced Network division, which is now part of the MLPerf Inference Datacenter...

9 MIN READ