JPEG 2000 (.jp2, .jpg2, .j2k) is an image compression standard defined by the Joint Photographic Experts Group (JPEG) as the more flexible successor to the still popular JPEG standard. Part 1 of the JPEG 2000 standard, which forms the core coding system, was first approved in August 2002. To date, the standard has expanded to 17 parts, covering areas such as Motion JPEG 2000 (Part 3), which extends the standard to video, and extensions for three-dimensional data (Part 10).

Features such as mathematically lossless compression, high precision, and higher dynamic range per component helped JPEG 2000 find adoption in digital cinema applications. JPEG 2000 is also widely used in digital pathology and geospatial imaging, where image dimensions exceed 4K but regions of interest (ROI) stay small.

GPU acceleration using the nvJPEG2000 library

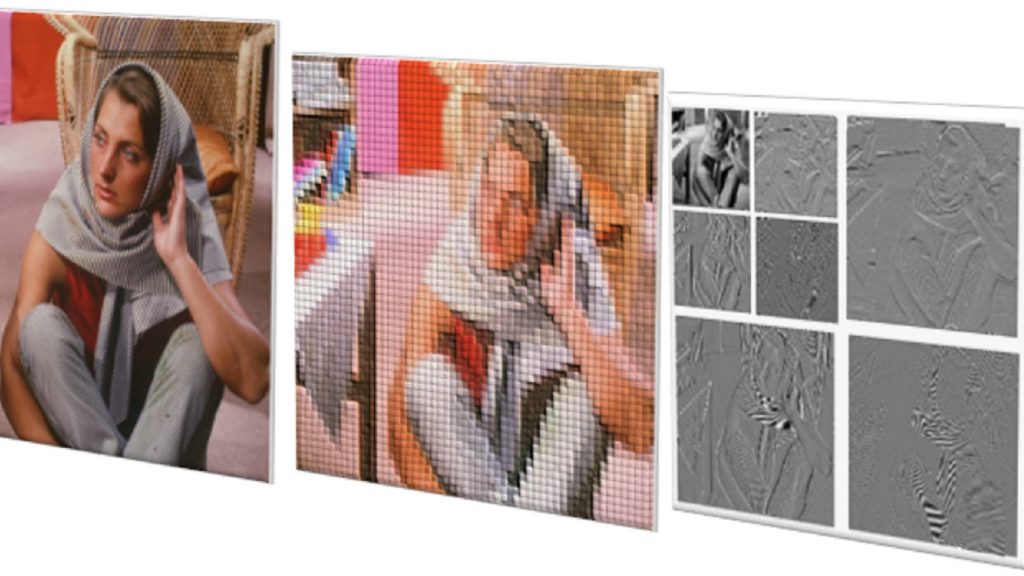

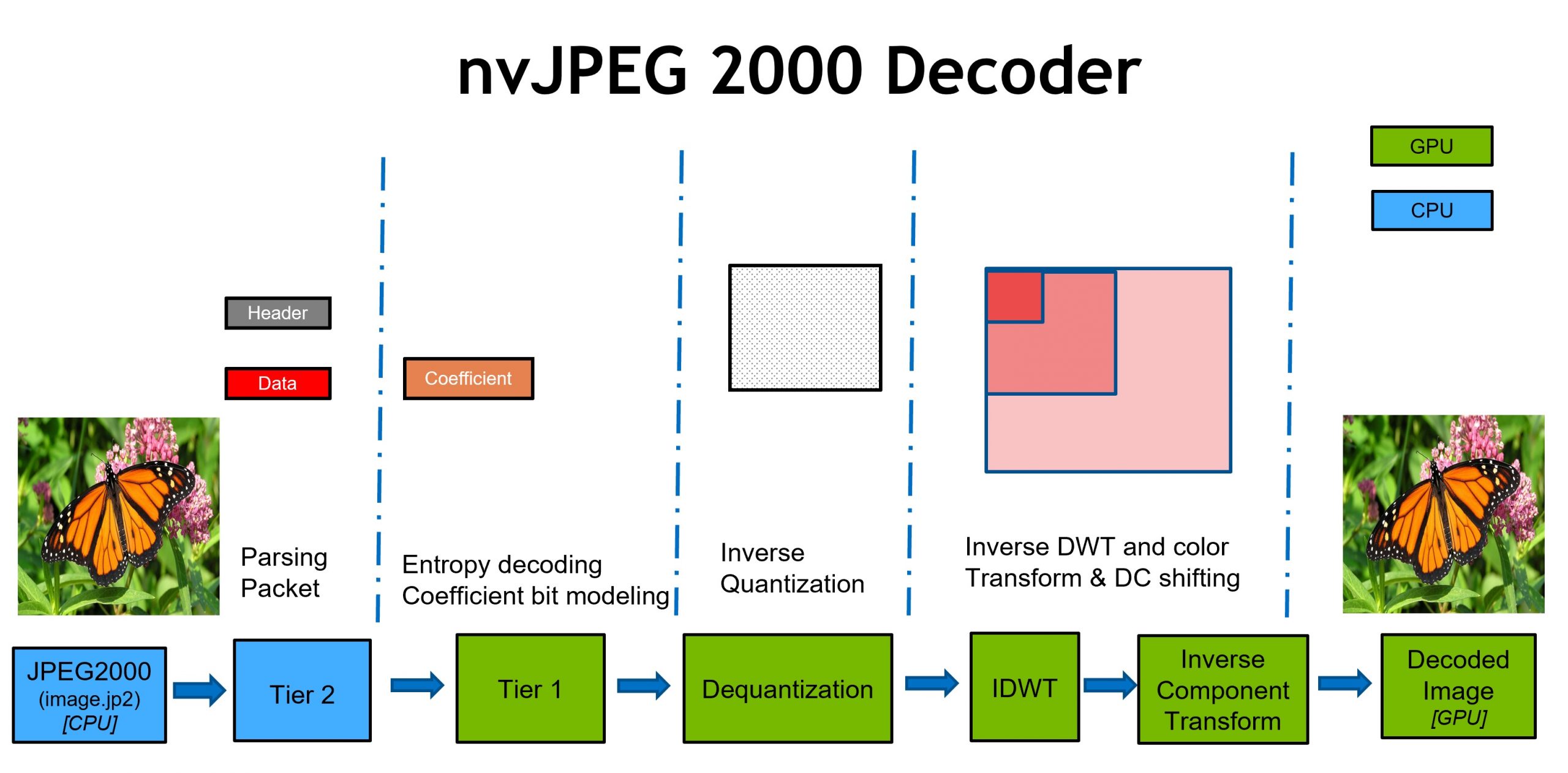

The JPEG 2000 feature set provides ample opportunities for GPU acceleration when compared to its predecessor, JPEG. Through GPU acceleration, images can be decoded in parallel and larger images can be processed more quickly. nvJPEG2000 is a new library that accelerates the decoding of JPEG 2000 images on NVIDIA GPUs. It supports codec features commonly used in geospatial imaging, remote sensing, and digital pathology. Figure 1 shows an overview of the decoding stages that nvJPEG2000 accelerates.

The Tier1 Decode (entropy decode) stage is the most compute-intensive stage of the entire decode process. The entropy decode algorithm used in the legacy JPEG codec was serial in nature and was hard to parallelize.

In JPEG 2000, the entropy decode stage is applied at a block-based granularity (typical block sizes are 64×64 and 32×32). Because each block is decoded independently, the entropy decode stage can be offloaded entirely to the GPU. For more information about the entropy decode process, see Annex C of the JPEG 2000 Core coding system specification.

The JPEG 2000 core coding system allows for two types of wavelet transforms (5-3 Reversible and 9-7 Irreversible), both of which benefit from GPU acceleration. For more information about the wavelet transforms, see Annex F of the JPEG 2000 Core coding system specification.
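To make the reversible transform more concrete, here is a minimal one-dimensional sketch of 5-3 integer lifting in C. It is an illustration only, not the nvJPEG2000 implementation: the function names are hypothetical, the signal length is assumed to be even, and boundaries are handled with simple mirroring. The point it shows is that integer lifting is exactly invertible, which is what makes lossless decoding possible.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Floor division by a positive divisor (C's '/' truncates toward zero). */
static int32_t floor_div(int32_t a, int32_t b)
{
    int32_t q = a / b;
    return (a % b != 0 && a < 0) ? q - 1 : q;
}

/* Forward 5-3 lifting on an even-length signal x[0..n-1].
 * low[0..n/2-1] receives the low-pass band, high[0..n/2-1] the high-pass band. */
void dwt53_forward(const int32_t *x, size_t n, int32_t *low, int32_t *high)
{
    assert(n >= 2 && n % 2 == 0);
    size_t half = n / 2;

    /* Predict: each odd sample minus the floored mean of its even neighbours. */
    for (size_t i = 0; i < half; ++i) {
        int32_t left = x[2 * i];
        int32_t right = (2 * i + 2 < n) ? x[2 * i + 2] : x[n - 2]; /* mirror */
        high[i] = x[2 * i + 1] - floor_div(left + right, 2);
    }
    /* Update: each even sample plus a rounded quarter of neighbouring details. */
    for (size_t i = 0; i < half; ++i) {
        int32_t prev = (i > 0) ? high[i - 1] : high[0]; /* mirror */
        low[i] = x[2 * i] + floor_div(prev + high[i] + 2, 4);
    }
}

/* Inverse 5-3 lifting: undoes the update step, then the predict step. */
void dwt53_inverse(const int32_t *low, const int32_t *high, size_t n, int32_t *x)
{
    assert(n >= 2 && n % 2 == 0);
    size_t half = n / 2;

    for (size_t i = 0; i < half; ++i) {
        int32_t prev = (i > 0) ? high[i - 1] : high[0];
        x[2 * i] = low[i] - floor_div(prev + high[i] + 2, 4);
    }
    for (size_t i = 0; i < half; ++i) {
        int32_t left = x[2 * i];
        int32_t right = (2 * i + 2 < n) ? x[2 * i + 2] : x[n - 2];
        x[2 * i + 1] = high[i] + floor_div(left + right, 2);
    }
}
```

Running `dwt53_forward` followed by `dwt53_inverse` returns the original samples bit for bit. In a 2D image, the same steps run along rows and then columns, and every row (or column) is independent of the others, which is one reason the transform maps well to a GPU.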

Decoding geospatial images

In this section, we concentrate on the new nvJPEG2000 API tailored for the geospatial domain, which enables decoding specific tiles within an image instead of decoding the full image.

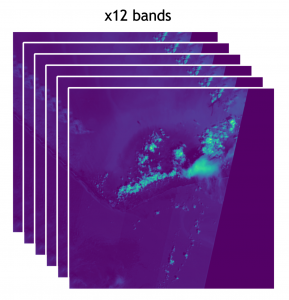

Imaging data captured by the European Space Agency’s Sentinel 2 satellites is stored as JPEG 2000 bitstreams. Sentinel 2 level 2A data downloaded from the Copernicus hub can be used with the nvJPEG2000 decoding examples. The imaging data has 12 bands or channels, and each band is stored as an independent JPEG 2000 bitstream. The image in Figure 2 is subdivided into 121 tiles. To speed up the decoding of multitile images, nvJPEG2000 v0.2 adds a new API called nvjpeg2kDecodeTile, which enables you to decode each tile independently.
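As a rough illustration of how a tile grid such as the 121 tiles above arises, the following C sketch computes the tile count and the pixel bounds of a given tile. The image size of 10980×10980 and tile size of 1024×1024 used in the usage note are assumptions for illustration, not values stated in this post, and the helper names are hypothetical. The sketch also assumes that the image and tile grid both start at the origin and that tiles are numbered in raster order.

```c
#include <assert.h>
#include <stdint.h>

/* Pixel rectangle covered by a tile; x1 and y1 are exclusive. */
typedef struct {
    uint32_t x0, y0, x1, y1;
} tile_rect_t;

static uint32_t ceil_div_u32(uint32_t a, uint32_t b)
{
    return (a + b - 1) / b;
}

/* Total number of tiles in the grid. */
uint32_t tile_count(uint32_t image_w, uint32_t image_h,
                    uint32_t tile_w, uint32_t tile_h)
{
    assert(tile_w > 0 && tile_h > 0);
    return ceil_div_u32(image_w, tile_w) * ceil_div_u32(image_h, tile_h);
}

/* Bounds of tile tile_id, with tiles numbered left to right, top to bottom. */
tile_rect_t tile_bounds(uint32_t image_w, uint32_t image_h,
                        uint32_t tile_w, uint32_t tile_h, uint32_t tile_id)
{
    assert(tile_id < tile_count(image_w, image_h, tile_w, tile_h));
    uint32_t cols = ceil_div_u32(image_w, tile_w);
    uint32_t col = tile_id % cols;
    uint32_t row = tile_id / cols;

    tile_rect_t r;
    r.x0 = col * tile_w;
    r.y0 = row * tile_h;
    /* Tiles on the right and bottom edges are clipped to the image. */
    r.x1 = (r.x0 + tile_w < image_w) ? r.x0 + tile_w : image_w;
    r.y1 = (r.y0 + tile_h < image_h) ? r.y0 + tile_h : image_h;
    return r;
}
```

With the assumed sizes, `tile_count(10980, 10980, 1024, 1024)` gives an 11×11 grid, and the tiles in the last row and column are narrower than 1024 pixels because the image size is not a multiple of the tile size.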

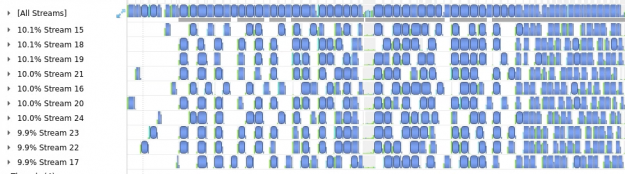

For multitile images, decoding each tile sequentially would be suboptimal. The GitHub multitile decode sample demonstrates how to decode each tile on a separate cudaStream_t. By taking this approach, you can decode multiple tiles on the GPU simultaneously. The Nsight Systems trace in Figure 3 shows the decoding of a Sentinel 2 dataset consisting of 12 bands. With 10 CUDA streams, up to 10 tiles are decoded in parallel at any point during the decode process.
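One simple way to spread tiles across a fixed pool of streams is round-robin assignment. The C sketch below shows only the scheduling arithmetic; it is an assumption about how such a pool could be organized, not a description of what the GitHub sample does, and the function names are hypothetical. In a real decoder, the returned index would select which cudaStream_t to pass to nvjpeg2kDecodeTile for that tile.

```c
#include <assert.h>
#include <stdint.h>

/* Index of the stream that decodes tile_id under round-robin assignment. */
uint32_t stream_for_tile(uint32_t tile_id, uint32_t num_streams)
{
    assert(num_streams > 0);
    return tile_id % num_streams;
}

/* Number of tiles that land on stream_id when num_tiles tiles are
 * distributed round-robin over num_streams streams. */
uint32_t tiles_on_stream(uint32_t num_tiles, uint32_t num_streams,
                         uint32_t stream_id)
{
    assert(num_streams > 0 && stream_id < num_streams);
    uint32_t base = num_tiles / num_streams;
    uint32_t extra = num_tiles % num_streams;
    /* The first 'extra' streams each take one additional tile. */
    return base + (stream_id < extra ? 1u : 0u);
}
```

For a 121-tile image and 10 streams, the load differs by at most one tile between streams, so no stream sits idle for long while the others finish.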

Table 1 shows performance data comparing a single stream and multiple streams on a GV100 GPU.

| # of CUDA streams | Average decode time (ms) | Speedup in % over single CUDA stream decode |
| --- | --- | --- |
| 1 | 0.888854 | N/A |
| 10 | 0.227408 | 75% |

Using 10 CUDA streams reduces the total decode time of the entire dataset by about 75% on a Quadro GV100 GPU. For more information, see the Accelerating Geospatial Remote Sensing Workflows Using NVIDIA SDKs [S32150] GTC’21 talk. It discusses geospatial image-processing workflows in more detail and the role nvJPEG2000 plays there.
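The percentage in Table 1 is a reduction in decode time, which is a different quantity from a speedup factor. The following C sketch computes both from the two measured averages; the helper names are hypothetical and the inputs are simply the Table 1 values.

```c
#include <assert.h>

/* Percentage by which the decode time shrinks relative to the baseline. */
double time_reduction_percent(double baseline_ms, double improved_ms)
{
    assert(baseline_ms > 0.0);
    return 100.0 * (baseline_ms - improved_ms) / baseline_ms;
}

/* How many times faster the improved configuration is than the baseline. */
double speedup_factor(double baseline_ms, double improved_ms)
{
    assert(improved_ms > 0.0);
    return baseline_ms / improved_ms;
}
```

Calling `time_reduction_percent(0.888854, 0.227408)` gives roughly 74.4, which rounds to the figure in the table, and `speedup_factor(0.888854, 0.227408)` gives roughly 3.9. In other words, 10 streams make the decode almost four times faster, not 75 times faster.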

Decoding digital pathology images

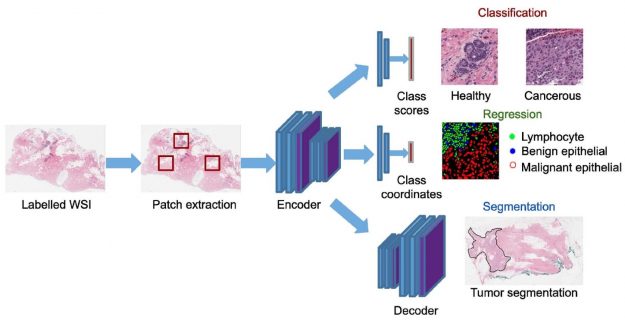

JPEG 2000 is used in digital pathology to store whole slide images (WSI). Figure 4 gives an overview of various deep learning techniques that can be applied to WSI. Deep learning models can be used to distinguish between cancerous and healthy cells. Image segmentation methods can be used to identify a tumor location in the WSI. For more information, see Deep neural network models for computational histopathology: A survey.

Table 2 lists the key parameters and their commonly used values of a whole slide image (WSI) compressed using JPEG 2000.

| Parameter | Value |
| --- | --- |
| Image size | 92000×201712 |
| Tile size | 92000×201712 |
| # of tiles | 1 |
| # of components | 3 |
| Precision | 8 |

The image is too large to decode in a single pass because of the amount of memory required. The decode output alone is about 55.7 GB (92000 × 201712 pixels × 3 components × 1 byte per sample), excluding the memory that the decoder itself requires.
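The output size follows directly from the parameters in Table 2. The C sketch below spells out that arithmetic with a hypothetical helper. It assumes that each sample is stored unpadded in whole bytes (one byte for up to 8 bits of precision, two bytes for 9 to 16 bits) and uses 64-bit arithmetic, because the product overflows 32 bits for an image of this size.

```c
#include <assert.h>
#include <stdint.h>

/* Bytes needed to hold the fully decoded image, assuming tightly packed
 * planes and whole-byte samples (an assumption, not a library guarantee). */
uint64_t decoded_size_bytes(uint64_t width, uint64_t height,
                            uint32_t num_components, uint32_t precision_bits)
{
    assert(precision_bits >= 1 && precision_bits <= 16);
    uint64_t bytes_per_sample = (precision_bits + 7) / 8;
    return width * height * num_components * bytes_per_sample;
}
```

Calling `decoded_size_bytes(92000, 201712, 3, 8)` gives the raw output size in bytes, which you can compare against the free memory on the target GPU before deciding whether to decode a region or a lower resolution instead.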

There are several approaches to handling such large images. In this post, we describe two of them:

- Decoding an area of interest

- Decoding the image at lower resolution

Both approaches can be easily performed using specific nvJPEG2000 APIs.

Decoding an area of interest in an image

The nvJPEG2000 library supports decoding a specific area of interest in an image through the nvjpeg2kDecodeTile API. The nvjpeg2kDecodeParams_t type enables you to control the decode output settings, such as the area of interest to decode. The following code example shows how to set the area of interest in terms of image coordinates.

    nvjpeg2kDecodeParams_t decode_params;
    nvjpeg2kDecodeParamsCreate(&decode_params);
    // all coordinate values are relative to the top-left corner of the image
    uint32_t top_coordinate, bottom_coordinate, left_coordinate, right_coordinate;
    uint32_t tile_id;
    nvjpeg2kDecodeParamsSetDecodeArea(decode_params, left_coordinate, right_coordinate, top_coordinate, bottom_coordinate);
    nvjpeg2kDecodeTile(nvjpeg2k_handle, nvjpeg2k_decode_state, jpeg2k_stream, decode_params, tile_id, 0, &nvjpeg2k_out, cuda_stream);

For more information about how to partially decode an image with multiple tiles, see the Decode Tile Decode GitHub sample.
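When an image has multiple tiles, only the tiles that overlap the area of interest need to be decoded. The C sketch below finds that range of tile columns and rows. It is a hedged illustration with hypothetical names: it assumes the right and bottom coordinates are exclusive, and that the tile grid starts at the image origin, neither of which is stated in this post.

```c
#include <assert.h>
#include <stdint.h>

/* Inclusive range of tile columns and rows that overlap a decode area. */
typedef struct {
    uint32_t first_col, last_col;
    uint32_t first_row, last_row;
} tile_range_t;

/* left/top are inclusive and right/bottom are exclusive image coordinates
 * (an assumption made for this sketch). */
tile_range_t tiles_covering_area(uint32_t left, uint32_t right,
                                 uint32_t top, uint32_t bottom,
                                 uint32_t tile_w, uint32_t tile_h)
{
    assert(left < right && top < bottom);
    assert(tile_w > 0 && tile_h > 0);

    tile_range_t r;
    r.first_col = left / tile_w;
    r.last_col = (right - 1) / tile_w;  /* last pixel actually covered */
    r.first_row = top / tile_h;
    r.last_row = (bottom - 1) / tile_h;
    return r;
}
```

For example, with 1024×1024 tiles, an area spanning x from 1000 to 3000 and y from 0 to 1024 touches tile columns 0 through 2 in row 0 only, so three tiles are decoded instead of the whole image.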

Decoding lower resolutions of an image

The second approach to decoding a large image is to decode it at a lower resolution. The ability to decode only the lower resolutions is a benefit of the wavelet transforms that JPEG 2000 uses. In Figure 5, the wavelet transform is applied up to two levels, which gives you access to the image at three resolutions. By controlling how the inverse wavelet transform is applied, you can decode only the lower resolutions of an image.

The digital pathology image described in Table 2 has 12 resolutions. This information can be retrieved on a per-tile basis:

    uint32_t num_res;
    uint32_t tile_id = 0;
    nvjpeg2kStreamGetResolutionsInTile(jpeg2k_stream, tile_id, &num_res);

The image has a size of 92000×201712 with 12 resolutions. If you discard the four highest resolutions and decode only the first eight, you extract an image of size 5750×12607. Each dropped resolution halves both dimensions, so dropping four of them scales the width and height down by a factor of 16 each.

    uint32_t num_res_to_decode = 8;
    // if num_res_to_decode > num_res, nvjpeg2kDecodeTile returns an
    // INVALID PARAMETER error
    nvjpeg2kDecodeTile(nvjpeg2k_handle, nvjpeg2k_decode_state, jpeg2k_stream, decode_params, tile_id, num_res_to_decode, &nvjpeg2k_out, cuda_stream);
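To size the output buffer for a reduced-resolution decode, you need the image dimensions at the chosen resolution. The C sketch below computes them with a hypothetical helper. It assumes that the image origin is at (0, 0) and that each dropped resolution halves a dimension with rounding up, which matches the usual JPEG 2000 convention but is not taken from the nvJPEG2000 documentation.

```c
#include <assert.h>
#include <stdint.h>

/* Size of one image dimension when only the lowest num_res_to_decode of
 * num_res resolutions are decoded. Unlike the nvjpeg2kDecodeTile call in
 * the examples above, this helper does not treat 0 as "decode everything". */
uint32_t dim_at_resolution(uint32_t full_dim, uint32_t num_res,
                           uint32_t num_res_to_decode)
{
    assert(num_res_to_decode >= 1 && num_res_to_decode <= num_res);
    uint32_t dropped = num_res - num_res_to_decode;
    assert(dropped < 32);

    /* Each dropped resolution divides the dimension by two, rounding up. */
    uint64_t divisor = 1ull << dropped;
    return (uint32_t)(((uint64_t)full_dim + divisor - 1) / divisor);
}
```

You would call the helper once for the width and once for the height, for example `dim_at_resolution(92000, 12, 8)` and `dim_at_resolution(201712, 12, 8)` for the image in Table 2, and then multiply the results by the number of components and bytes per sample to get the buffer size.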

Performance benchmarks

To show the performance improvement that decoding JPEG 2000 on the GPU brings, we compare GPU-based nvJPEG2000 with CPU-based OpenJPEG.

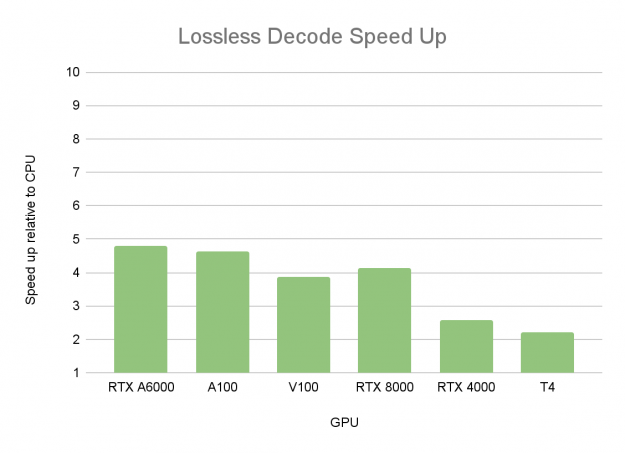

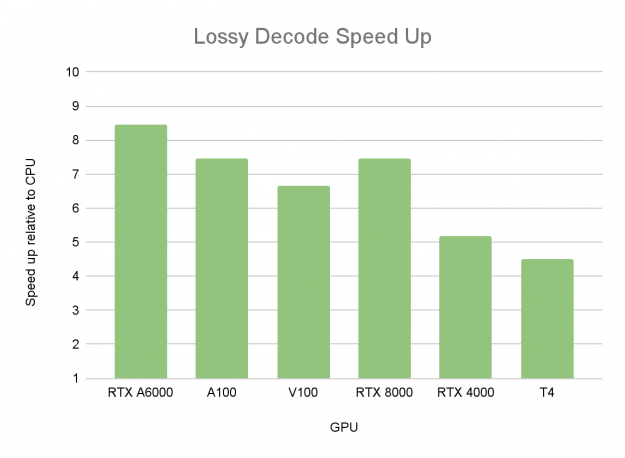

Figures 6 and 7 show the average speedup when decoding one image at a time. The following images are used in the measurements:

- 1920×1080 8-bit image with 4:4:4 chroma subsampling

- 3840×2160 8-bit image with 4:4:4 chroma subsampling

- 3328×4096 12-bit grayscale

The OpenJPEG CPU performance in the figures was measured on an Intel Xeon Gold 6240 at 2 GHz (3.9 GHz Turbo, Cascade Lake) with hyperthreading on and 16 CPU threads per image.

On NVIDIA Ampere Architecture GPUs such as NVIDIA RTX A6000, the speedup factor is more than 8x for decoding. This speedup is measured for single-image latency.

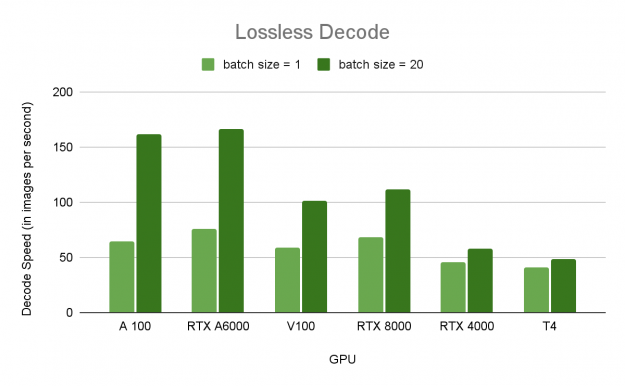

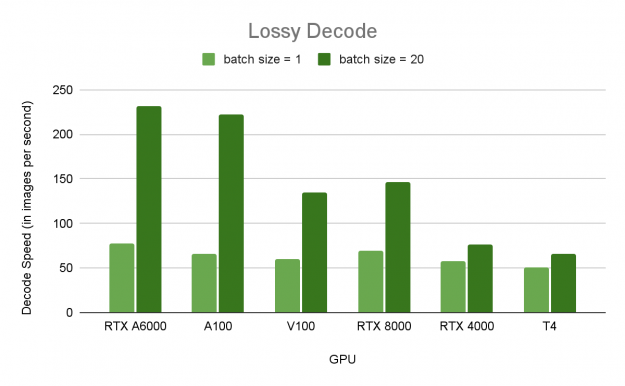

Even higher speedups can be achieved by batching the decode of multiple images. Figures 8 and 9 compare the speed of decoding a 1920×1080 8-bit image with 4:4:4 chroma subsampling (Full HD) in lossless and lossy modes, respectively, across multiple GPUs.

Figures 8 and 9 demonstrate the benefits of batched decode using the nvJPEG2000 library. The performance increase is more significant on GPUs with a large number of streaming multiprocessors (SMs), such as A100 and NVIDIA RTX A6000, than on GPUs with fewer SMs, such as NVIDIA RTX 4000 and T4. Batching makes sure that the available compute resources are used efficiently.

As Figure 8 shows, the decode speed on an NVIDIA RTX A6000 is 232 images per second for a batch size of 20. This is roughly a further 3x speedup over a batch size of 1. The benchmark image has a low compression ratio: the compressed bitstream is only about 3x smaller than the uncompressed image. At higher compression ratios, the speedup is greater.

The following GitHub samples show how to achieve this speedup both at image and tile granularity:

- Image-based granularity: nvJPEG2000 decoding Pipelined

- Tile-based granularity: nvJPEG2000 Decoding Tile Partial

Conclusion

The nvJPEG2000 library uses NVIDIA GPUs to accelerate the decoding of JPEG 2000 images, both very large individual images and large volumes of images, by targeting specific image-processing tasks of interest. Decoding JPEG 2000 images using the nvJPEG2000 library can be as much as 8x faster on GPU (NVIDIA RTX A6000) than on CPU. A further speedup of 3x (24x faster than CPU) is achieved by batching the decode of multiple images.

The simple nvJPEG2000 APIs make the library easy to include in your applications and workflows. It is also integrated into the NVIDIA Data Loading Library (DALI), a data loading and preprocessing library that accelerates deep learning applications. Using nvJPEG2000 and DALI together makes it easy to use JPEG 2000 images as part of deep learning training workflows.

For more information, see the following resources:

- Download nvJPEG2000 for Linux and Windows

- nvJPEG2000 documentation

- nvJPEG2000 Examples on GitHub

- Accelerating Geospatial Remote Sensing Workflows Using NVIDIA SDKs [S32150] GTC’2021 session

- Fast Data Preprocessing (Image, Video, Audio and Signal) with DALI, NPP and nvJPEG [CWES1081] GTC’2021 session

- Recent Developments in NVIDIA Math Libraries [S31754] GTC’2021 session