Posts by Hao Wu

Developer Tools & Techniques

Apr 22, 2026

Advancing Emerging Optimizers for Accelerated LLM Training with NVIDIA Megatron

Higher-order optimization algorithms such as Shampoo have been effectively applied in neural network training for at least a decade. These methods have achieved...

9 MIN READ

Data Center / Cloud

May 08, 2025

Turbocharge LLM Training Across Long-Haul Data Center Networks with NVIDIA Nemo Framework

Multi-data center training is becoming essential for AI factories as pretraining scaling fuels the creation of even larger models, leading the demand for...

6 MIN READ

Data Science

Aug 31, 2022

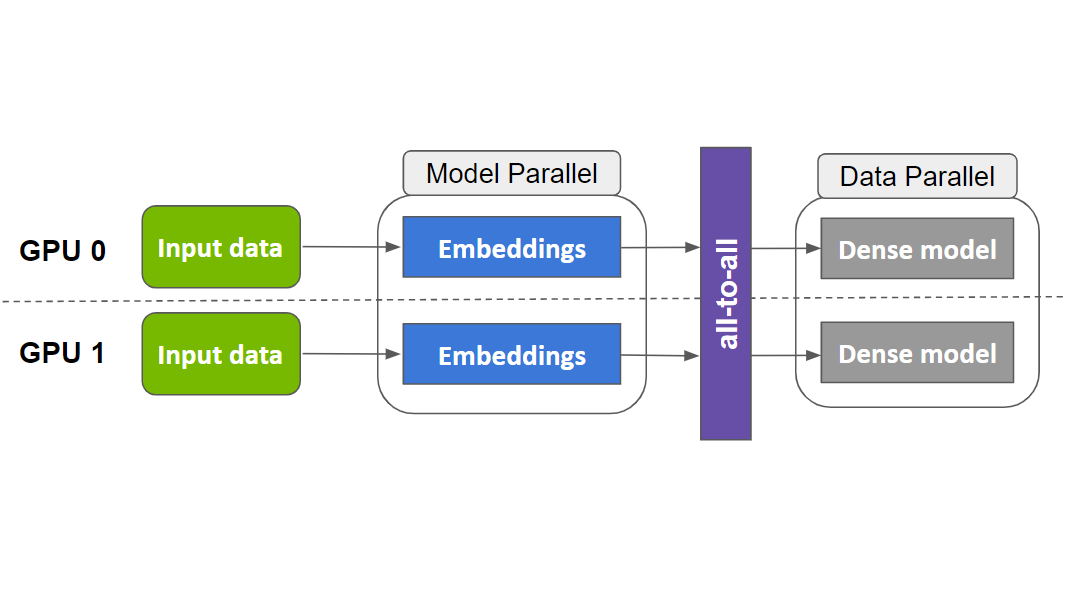

Fast, Terabyte-Scale Recommender Training Made Easy with NVIDIA Merlin Distributed-Embeddings

Embeddings play a key role in deep learning recommender models. They are used to map encoded categorical inputs in data to numerical values that can be...

8 MIN READ

Computer Vision / Video Analytics

Jul 20, 2021

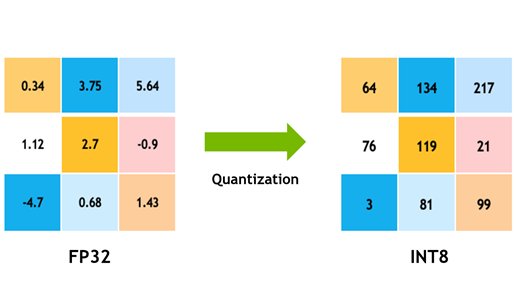

Achieving FP32 Accuracy for INT8 Inference Using Quantization Aware Training with NVIDIA TensorRT

Deep learning is revolutionizing the way that industries are delivering products and services. These services include object detection, classification, and...

17 MIN READ

Simulation / Modeling / Design

Nov 06, 2019

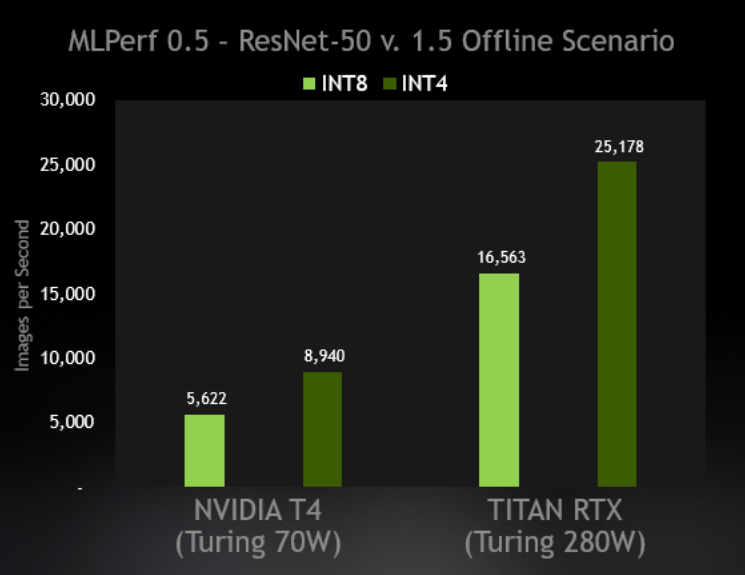

Int4 Precision for AI Inference

INT4 Precision Can Bring an Additional 59% Speedup Compared to INT8. If there’s one constant in AI and deep learning, it’s never-ending optimization to wring...

5 MIN READ