XDP (eXpress Data Path) is a programmable data path in the Linux kernel network stack. It provides a framework for BPF programs and enables high-performance packet processing at runtime. XDP works in concert with the Linux network stack and is not a kernel bypass.

Because XDP runs in the kernel network driver, it can read Ethernet frames from the RX ring of the NIC and act on them immediately. XDP plugs into the eBPF infrastructure through an RX hook implemented in the driver. As an application of eBPF, an XDP program can trigger actions using return codes, modify packet contents, and push or pull headers.

XDP has various use cases, such as packet filtering, packet forwarding, load balancing, and DDoS mitigation. A common use case is dropping packets with XDP_DROP, a return code that instructs the driver to drop a packet. A custom BPF program parses the incoming packets received in the driver and returns a verdict (here, XDP_DROP). The packet is then dropped right at the driver level without wasting any further resources. Ethtool counters can be used to verify the XDP program’s actions.

Running XDP_DROP

The XDP program runs as soon as a packet enters the network driver, resulting in higher network performance. It also improves CPU efficiency, because less CPU time is spent on each packet. The Mellanox ConnectX NIC family allows metadata to be prepared by the NIC hardware. This metadata can be used to perform hardware acceleration for applications that use XDP.

Here’s an example of how to run XDP_DROP using Mellanox ConnectX-5.

Check whether the current kernel supports BPF and XDP:

sysctl net/core/bpf_jit_enable

If the key is not found, compile and run a kernel with BPF enabled. You can use any upstream kernel later than 5.0.

Enable the following kconfig flags:

- BPF

- BPF_SYSCALL

- BPF_JIT

- HAVE_BPF_JIT

- BPF_EVENTS

Then, reboot to the new kernel.
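After the reboot, the flags can be confirmed against the running kernel's config file. The following is a minimal sketch, not part of the original procedure: the function name `check_kconfig` is made up for illustration, and the config file location is an assumption (many distributions install it as /boot/config-<version>, but yours may differ).

```shell
# check_kconfig CONFIG_FILE OPTION...
# Hypothetical helper: reports whether each kconfig option is set to y or m
# in CONFIG_FILE. Returns non-zero if at least one option is missing.
check_kconfig() {
    local config_file=$1 opt missing=0
    shift
    for opt in "$@"; do
        # Enabled options appear as CONFIG_<NAME>=y or CONFIG_<NAME>=m;
        # disabled ones appear as "# CONFIG_<NAME> is not set" or not at all.
        if grep -q "^CONFIG_${opt}=[ym]\$" "$config_file"; then
            echo "CONFIG_${opt}: enabled"
        else
            echo "CONFIG_${opt}: missing"
            missing=1
        fi
    done
    return "$missing"
}
```

A typical call would be `check_kconfig "/boot/config-$(uname -r)" BPF BPF_SYSCALL BPF_JIT HAVE_BPF_JIT BPF_EVENTS`. Symbol names change between kernel releases (HAVE_BPF_JIT, for example, may appear as HAVE_EBPF_JIT on recent kernels), so treat a "missing" line as a prompt to look at the config file rather than as proof that the feature is absent.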

Install clang and llvm:

yum install -y llvm clang libcap-devel

Compile samples with the following steps:

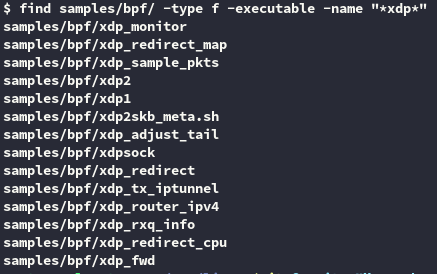

cd <linux src code>

make samples/bpf/

This compiles all available XDP applications. After compilation finishes, all XDP applications appear under samples/bpf/ in the kernel source tree (Figure 1).

With these prerequisites installed, you are ready to run XDP applications. They can run in two modes:

- Driver path: Requires an XDP implementation in the driver. Works at page granularity, and no SKBs are created. Performance is significantly better. Mellanox NICs support this mode.

- Generic path: Works with any network device. Works with SKBs, but the performance is worse.
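Outside the kernel samples, the same two modes can be selected when attaching a program with iproute2, which uses the keywords `xdpdrv` (driver path) and `xdpgeneric` (generic path) in `ip link set`. The sketch below only builds the command line and does not run it. The helper names, the object file name, and the default section name `xdp` are assumptions for illustration, and the section must match whatever your compiled object actually contains.

```shell
# xdp_link_keyword MODE
# Hypothetical helper: maps the mode names used in this article to the
# corresponding iproute2 keyword.
xdp_link_keyword() {
    case "$1" in
        driver)  echo xdpdrv ;;
        generic) echo xdpgeneric ;;
        *)       echo "unknown mode: $1" >&2; return 1 ;;
    esac
}

# xdp_attach_cmd MODE INTERFACE OBJECT [SECTION]
# Prints the ip(8) command that would attach OBJECT to INTERFACE.
# Nothing is executed; SECTION defaults to "xdp" (an assumption).
xdp_attach_cmd() {
    local keyword
    keyword=$(xdp_link_keyword "$1") || return 1
    echo "ip link set dev $2 $keyword object $3 section ${4:-xdp}"
}
```

For example, `xdp_attach_cmd driver <INTERFACE> my_prog.o` prints a command that can be reviewed and then run as root. Requesting the driver path explicitly is useful when benchmarking, because the attach fails on a driver without native XDP support instead of silently taking the slower generic path.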

Run XDP_DROP in the driver path. XDP_DROP is one of the simplest and fastest ways to drop a packet in Linux. Here, it instructs the driver to drop the packet at the earliest RX stage in the driver. This means that the packet is recycled back into the RX ring queue from which it just arrived.

The xdp1 application located at <linux_source>/samples/bpf/ implements XDP_DROP.

Use a traffic generator of your choice. We use Cisco TRex.

On the RX side, launch xdp1 in the driver path using the following command:

<PATH_TO_LINUX_SOURCE>/samples/bpf/xdp1 -N <INTERFACE> # -N can be omitted

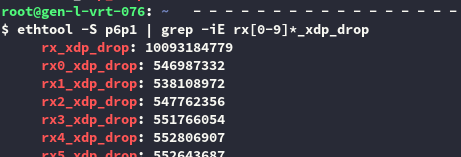

The XDP drop rate can be shown using the application output as well as ethtool counters:

ethtool -S <intf> | grep -iE 'rx[0-9]*_xdp_drop'
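To turn the per-ring counters into a single number, the ethtool output can be summed. This is a rough sketch that assumes the counters are printed as `rx<N>_xdp_drop: <value>` lines, as the pattern above implies; the function name `sum_xdp_drops` is made up here. It deliberately skips an aggregate `rx_xdp_drop` line, if the driver prints one, so that drops are not counted twice.

```shell
# sum_xdp_drops
# Hypothetical helper: reads `ethtool -S` output on stdin and prints the
# sum of all per-ring rx<N>_xdp_drop counters.
sum_xdp_drops() {
    awk -F': *' '
        # Match only per-ring counters (at least one digit after "rx").
        $1 ~ /^[ \t]*rx[0-9]+_xdp_drop$/ { total += $2 }
        END { printf "%.0f\n", total }
    '
}
```

Use it as `ethtool -S <intf> | sum_xdp_drops`. Sampling the total twice, a known number of seconds apart, and dividing the difference by the interval gives an approximate drop rate to compare with the application output.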