The development of socially acceptable nuclear reactors requires that they be safe, clean, efficient, economical, and sustainable. Meeting these requirements calls for new approaches, driving growing interest in Small Modular Reactors (SMRs) and in Generation IV (Gen IV) designs.

SMRs aim to improve project economics by standardising designs and shifting construction to controlled manufacturing environments, while Gen IV reactors target fundamental fuel-cycle challenges by better managing transuranics and reducing the radiotoxicity and longevity of waste. Together, these approaches offer a credible roadmap toward safer, cleaner, and more sustainable nuclear energy.

However, validating new designs presents significant challenges. Due to the expense, time constraints, and inherent complexities of physical experiments, numerical simulations are fundamental to the design of nuclear reactors. Yet, the high computational cost of these simulations often creates a major bottleneck in the design process, slowing the pace of innovation.

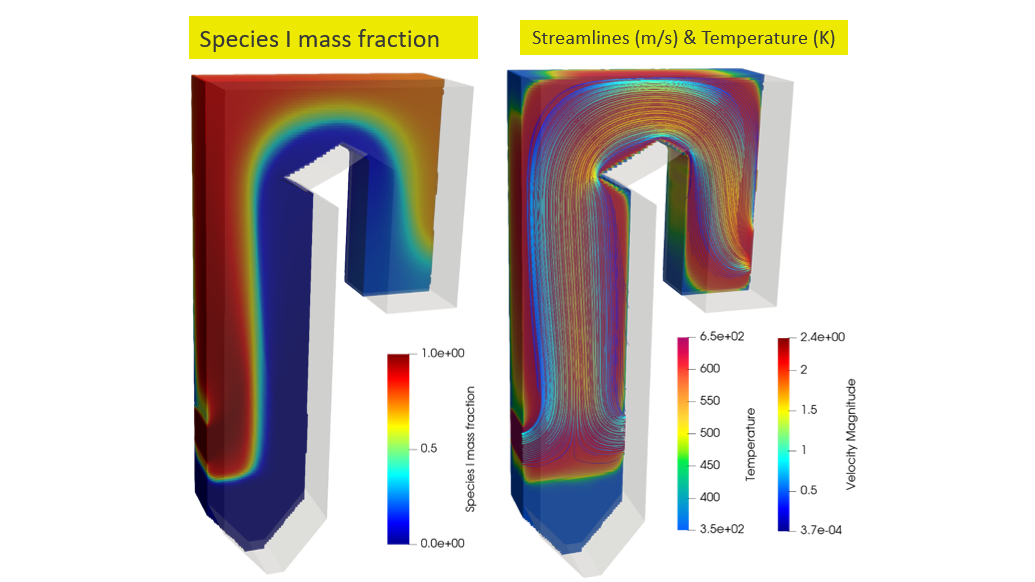

To address this, nuclear engineers are developing digital twins that enable the simulation, testing, and optimization of complex reactor systems and fuel cycles at a fraction of the cost and time required for full-scale prototypes. NVIDIA CUDA-X libraries, the PhysicsNeMo AI Physics framework, and the Omniverse libraries help developers in the nuclear industry address these challenges by delivering GPU-accelerated, AI-augmented simulation solutions for real-time digital twins. These capabilities enable engineers to explore innovative designs, rigorously assess safety, and accelerate the transition toward cleaner and more efficient nuclear technologies.

AI-augmented nuclear reactor simulation and design

This section outlines a modular reference workflow for building interactive digital twins of nuclear reactors that leverage the speed of AI surrogate models. Building interactive digital twins requires a full-stack approach, and the reference workflow below utilises elements of the NVIDIA accelerated computing stack at every stage.

- Data generation: Run high-fidelity reactor/multiphysics simulations (ideally GPU-accelerated) to produce training data.

- Data preprocessing: Use PhysicsNeMo Curator to curate/transform geometry and fields into GPU-ready training datasets.

- Model training: Use PhysicsNeMo to train surrogate models on multiple GPUs.

- Inference & deployment: Serve the surrogate model via an API to enable integration into interactive digital twins.

- Downstream workflows: Employ the surrogate model in downstream design tasks (for example, optimisation and uncertainty quantification workflows).

While the workflow above provides important context, the remainder of this guide focuses primarily on the model training stage and the need for surrogate models that predict full spatial fields, with an emphasis on reactor physics. The same workflow can be easily adapted to other domains that are important in the design of a nuclear reactor, such as CFD and structural analysis.

Building an AI surrogate model of a fuel pin cell

A fuel pin cell (or simply a pin cell) is the fundamental repeating unit employed in the modelling and simulation of a nuclear reactor core (see Figure 1, below).

A standard pin cell comprises a fuel pellet (typically uranium dioxide), a cladding layer providing mechanical and chemical protection, and the surrounding moderator (see Figure 2, below). It provides a simplified yet physically representative model for resolving local neutron transport and flux distributions prior to subsequent assembly-level and full-core analyses.

A typical reactor core contains on the order of 50,000 fuel pins, making full-core simulation at explicit pin cell resolution computationally impracticable. Consequently, reactor analysis relies on multi-scale methods in which fine-scale transport physics is condensed into effective parameters (notably homogenised cross-sections) that are utilized in coarse-mesh core simulators while preserving reaction rates.

The homogenised cross-section, \(\Sigma_{homog}\), of a coarse-mesh element of volume \(V\) must preserve the reaction rates within that element. It is calculated via flux-weighted averaging:

\(\large \Sigma_{homog} = \frac{\int_{V} \Sigma(\mathbf{r}) \phi(\mathbf{r}) \, dV}{\int_{V} \phi(\mathbf{r}) \, dV} = \frac{\text{Total Reaction Rate}}{\text{Total Flux}}\)

where \(\Sigma(\mathbf{r})\) represents the spatial macroscopic cross-section distribution, which depends on the local material composition and neutron energy spectrum, and \(\phi(\mathbf{r})\) represents the neutron flux distribution, which acts as the spatial weighting function.

Standard volume-weighted averaging is insufficient because it neglects spatial self-shielding – the phenomenon where the neutron population is depressed within highly absorbing fuel regions. Consequently, determining the correct \(\Sigma_{homog}\) requires knowledge of both the precise neutron flux field \(\phi(\mathbf{r})\) and the macroscopic cross-section field \(\Sigma(\mathbf{r})\).
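The difference between flux-weighted and volume-weighted averaging can be illustrated with a minimal NumPy sketch. The function name `homogenise` and the four-cell toy values are assumptions made for illustration (they are not taken from the dataset), and the mesh is assumed to be uniform so that the cell volumes cancel.

```python
import numpy as np


def homogenise(sigma, flux):
    """Flux-weighted average of a cross-section field on a uniform mesh.

    Discrete form of: integral(Sigma * phi dV) / integral(phi dV).
    On a uniform mesh the cell volume dV cancels between numerator and denominator.
    """
    sigma = np.asarray(sigma, dtype=float)
    flux = np.asarray(flux, dtype=float)
    return np.sum(sigma * flux) / np.sum(flux)


# Illustrative toy values (not physical data): two strongly absorbing "fuel" cells
# where the flux is depressed, and two weakly absorbing "moderator" cells.
sigma = np.array([0.50, 0.50, 0.02, 0.02])  # macroscopic absorption cross-section
flux = np.array([0.50, 0.50, 1.00, 1.00])   # neutron flux, depressed in the fuel

sigma_flux_weighted = homogenise(sigma, flux)
sigma_volume_weighted = sigma.mean()

print(f"flux-weighted:   {sigma_flux_weighted:.2f}")
print(f"volume-weighted: {sigma_volume_weighted:.2f}")
```

In this toy case the volume-weighted average overestimates absorption, because it gives the fuel cells the same weight as the moderator cells even though fewer neutrons are present in the fuel.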

Conventionally, obtaining \(\phi(\mathbf{r})\) and \(\Sigma(\mathbf{r})\) requires solving the neutron transport equation using high-fidelity Monte Carlo methods. This process is computationally expensive and prohibits deep design space exploration. We bypass the expensive transport solve by training a surrogate model to jointly predict \(\phi(\mathbf{r})\) and \(\Sigma(\mathbf{r})\) directly from the geometry and fuel enrichment.

We demonstrate that jointly predicting the spatially resolved flux and cross-section fields, and then computing the homogenised cross-section from these predicted fields, achieves substantially higher accuracy than standard regression models that map a set of scalar inputs directly to the homogenised cross-section. This physics-aligned approach captures important spatial effects, notably self-shielding, resulting in much better generalisability.

All code used to generate the dataset and train the baseline feature-based regression model and the surrogate model is available here.

Dataset generation: Efficient sampling

To build a dataset efficiently, we combine accelerated solvers with smart sampling techniques.

The first step is to parameterise a representative pin cell (see Figure 2, above). We vary the fuel enrichment (wt% fissile content) and key geometric inputs: p (pin pitch), rf (fuel rod radius), rg (gap radius) and rc (cladding radius). The outputs are the neutron flux field and the spatially resolved absorption cross-section map.

To minimise the number of samples (each a computationally expensive simulation) required to train an accurate surrogate model, we employ Latin Hypercube Sampling (LHS). LHS divides the range of each input parameter into equally probable intervals and draws exactly one sample from each interval, which provides good coverage of the design space while minimising redundancy. Combining efficient sampling techniques with accelerated solvers allows a suitable dataset to be generated in practical timeframes.
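The sampling step can be sketched with NumPy alone. Everything specific here is an assumption made for illustration: the helper `latin_hypercube`, the parameter bounds, and the choice to sample the gap thickness, cladding thickness, and pitch ratio (rather than the radii directly) so that every sample satisfies rf < rg < rc < p/2 by construction. The sampling code released with this guide may differ.

```python
import numpy as np


def latin_hypercube(n_samples, n_dims, rng):
    """Return an (n_samples, n_dims) array in [0, 1).

    Each dimension is split into n_samples equal strata and every stratum
    receives exactly one point, placed at a random position inside it.
    """
    strata = np.arange(n_samples)[:, None]
    unit = (strata + rng.random((n_samples, n_dims))) / n_samples
    # Shuffle the strata independently in each dimension.
    for j in range(n_dims):
        unit[:, j] = rng.permutation(unit[:, j])
    return unit


# Hypothetical design-space bounds, for illustration only.
names = ["enrichment_wt", "r_fuel_cm", "gap_cm", "clad_cm", "pitch_ratio"]
lower = np.array([1.0, 0.35, 0.005, 0.04, 1.10])
upper = np.array([5.0, 0.50, 0.020, 0.08, 1.50])

rng = np.random.default_rng(0)
unit = latin_hypercube(64, len(names), rng)
scaled = lower + unit * (upper - lower)

enrichment, r_fuel, gap, clad, pitch_ratio = scaled.T
r_gap = r_fuel + gap                 # rg: outer radius of the gap
r_clad = r_gap + clad                # rc: outer radius of the cladding
pitch = 2.0 * r_clad * pitch_ratio   # p: ratio > 1 keeps the pin inside the cell
```

SciPy users can obtain the unit-cube samples from `scipy.stats.qmc.LatinHypercube` instead of the hand-written helper.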

Figure 3, below, illustrates the neutron flux field in a subcritical and a supercritical configuration. When a reactor is subcritical, the effective multiplication factor \(k_{\text{eff}}\) (the ratio of the number of neutrons in one generation to the number in the previous generation) is less than 1, and the chain reaction isn’t self-sustaining. When a reactor is supercritical, \(k_{\text{eff}} > 1\) and the neutron population increases from one generation to the next. By sampling enrichment and geometry across a wide but realistic range, the dataset naturally includes both regimes, exposing the surrogate to flux fields associated with subcritical and supercritical conditions.

Model training with PhysicsNeMo

NVIDIA PhysicsNeMo is an open-source Python framework for building, training, and fine-tuning AI surrogate models that emulate complex numerical simulations. It is purpose-built for AI physics workloads and enables developers to focus on building domain-specific AI-augmented applications rather than the underlying AI software stack.

Importantly, PhysicsNeMo provides model architectures and training pipelines for developing surrogate models that predict spatially resolved fields (for example, pressure, temperature, neutron flux), rather than only scalar quantities.

Unlike general-purpose machine learning libraries, PhysicsNeMo provides modular, physics-aware components—from neural operators and graph neural networks to diffusion and transformer-based models—that are designed to capture complex, continuous physical phenomena using state-of-the-art architectures. By integrating these architectures with optimized data pipelines and distributed training utilities, the framework enables researchers and engineers to train high-fidelity surrogate models efficiently on multi-GPU and multi-node platforms, significantly reducing both development time and computational overhead compared with building custom solutions from scratch.

Moreover, PhysicsNeMo integrates with PyTorch, so domain experts can leverage familiar deep learning tools while extending them with capabilities tailored to Computer-Aided Engineering (CAE) problems. The framework also includes curated examples and reference pipelines for different domains, from turbulent fluid dynamics to electromagnetics, making it easy to start developing new applications.

The combination of scalability, extensibility, and optimized performance provided by PhysicsNeMo enables the development of surrogate models that deliver near real-time predictions without sacrificing fidelity.

Training Fourier Neural Operators

In this guide, we train a Fourier Neural Operator (FNO) using PhysicsNeMo. FNOs are well-suited to the task of predicting neutron flux distributions because the flux field exhibits relatively smooth spatial variations within material regions.

FNOs learn a field-to-field operator in the spectral domain, where a truncated set of low-frequency Fourier modes provides a compact representation of smooth solutions and naturally yields a global receptive field. This allows the model to capture long-range spatial coupling, significantly reducing the computational overhead compared to deep stacks of local convolutions.
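To make the spectral-domain idea concrete, the sketch below implements a single truncated Fourier convolution in plain NumPy. It is not the PhysicsNeMo implementation: the function `spectral_conv2d`, the weight layout, and the random weights are assumptions for illustration, and a full FNO layer adds a pointwise linear path, a nonlinearity, and lifting and projection layers around this operation.

```python
import numpy as np


def spectral_conv2d(x, weights, modes):
    """Apply one truncated spectral convolution.

    x:       real array of shape (C_in, H, W)
    weights: complex array of shape (2, C_in, C_out, modes, modes)
    modes:   number of low-frequency modes kept per axis (modes <= H // 2)
    """
    c_in, h, w = x.shape
    c_out = weights.shape[2]
    x_hat = np.fft.rfft2(x, axes=(-2, -1))  # (C_in, H, W // 2 + 1)
    out_hat = np.zeros((c_out, h, w // 2 + 1), dtype=complex)
    # Mix channels on the retained low-frequency modes only; all other modes stay zero.
    out_hat[:, :modes, :modes] = np.einsum(
        "ixy,ioxy->oxy", x_hat[:, :modes, :modes], weights[0]
    )
    out_hat[:, -modes:, :modes] = np.einsum(
        "ixy,ioxy->oxy", x_hat[:, -modes:, :modes], weights[1]
    )
    return np.fft.irfft2(out_hat, s=(h, w), axes=(-2, -1))


rng = np.random.default_rng(0)
c_in, c_out, modes, size = 4, 2, 4, 32
shape = (2, c_in, c_out, modes, modes)
weights = rng.standard_normal(shape) + 1j * rng.standard_normal(shape)

x = rng.standard_normal((c_in, size, size))
y = spectral_conv2d(x, weights, modes)

# Perturb a single input pixel and measure how much of the output changes.
x_perturbed = x.copy()
x_perturbed[0, 0, 0] += 1.0
y_perturbed = spectral_conv2d(x_perturbed, weights, modes)
changed_fraction = np.mean(np.abs(y_perturbed - y) > 1e-9)
```

Because every retained Fourier mode spans the whole domain, changing one input pixel affects essentially the entire output field after a single layer, which is the global receptive field described above. A local 3×3 convolution would need many stacked layers to achieve the same reach.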

1. Input format: Fuel, cladding, and moderator are encoded as three binary mask channels via one-hot encoding. Fuel enrichment is a scalar value broadcast over the domain and appended as a fourth channel. See Figure 4, below:

2. Preprocessing: Target data (neutron flux and macroscopic absorption cross-section) is normalised in log-space to handle the large dynamic range.

3. Training:

- The input to the FNO is a 4-channel tensor of shape (B, 4, H, W), where B is the batch size and H × W is the grid resolution.

- Each pixel is a 4D feature vector: (fuel, cladding, moderator, enrichment).

- The model jointly predicts the neutron flux field and the absorption cross-section field as a 2-channel output tensor (B, 2, H, W) in normalised log-space and is trained with MSE against the normalised target.

4. Inference: Input is the same 4-channel representation. The model predicts normalised log-space flux and absorption cross-section fields, which are then denormalised to recover physical values. See Figure 5, below:
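The input encoding and the log-space normalisation described in the steps above can be sketched as follows. The helper names, the 64 × 64 grid, the pin dimensions, and the synthetic flux values are assumptions for illustration. The sketch also assumes that the gap is folded into the cladding annulus and that normalisation is a z-score of the natural logarithm with one mean and standard deviation per channel; the released code may make different choices.

```python
import numpy as np


def encode_pin_cell(pitch, r_fuel, r_clad, enrichment, n=64):
    """Return a (4, n, n) array: fuel, cladding and moderator masks plus enrichment."""
    # Pixel-centre coordinates, with the pin at the centre of the square cell.
    coords = (np.arange(n) + 0.5) / n * pitch - pitch / 2.0
    x, y = np.meshgrid(coords, coords)
    r = np.hypot(x, y)
    fuel = r <= r_fuel
    cladding = (r > r_fuel) & (r <= r_clad)  # assumption: gap lumped with cladding
    moderator = r > r_clad
    enrich = np.full((n, n), enrichment)     # scalar broadcast over the domain
    return np.stack([fuel, cladding, moderator, enrich]).astype(np.float32)


def log_normalise(field, mean, std):
    """Map a strictly positive field to normalised log-space."""
    return (np.log(field) - mean) / std


def log_denormalise(z, mean, std):
    """Invert log_normalise to recover physical values."""
    return np.exp(z * std + mean)


# Illustrative pin cell (dimensions in cm, enrichment in wt%); add a batch axis.
inputs = encode_pin_cell(pitch=1.26, r_fuel=0.41, r_clad=0.475, enrichment=3.5)[None]

# Synthetic stand-in for a flux target spanning several orders of magnitude.
rng = np.random.default_rng(0)
flux = 10.0 ** rng.uniform(-3.0, 2.0, size=(8, 64, 64))
log_mean, log_std = np.log(flux).mean(), np.log(flux).std()

z = log_normalise(flux, log_mean, log_std)         # what the model is trained on
recovered = log_denormalise(z, log_mean, log_std)  # applied to model outputs
```

The statistics `log_mean` and `log_std` would be computed once on the training set and reused unchanged at inference time, so that predictions are denormalised consistently.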

Baseline model results

To demonstrate the advantages of predicting the full neutron flux field, we first establish a baseline: a feature-based regression model—specifically, a gradient boosting regressor. The baseline model directly predicts the scalar homogenised cross-section from a set of scalar descriptors capturing the key geometric features and material parameters of a pin cell (see Figure 2, above).

Results obtained using the baseline gradient boosting regressor model are illustrated in Figure 6, below. The baseline model demonstrates reasonably good predictive accuracy, with an \(R^2\) score of 0.80. However, because the input representation compresses the pin cell geometry into a small set of scalars, it does not capture the full spatial effects that define the neutron flux and, consequently, the homogenised cross-section.

We can improve substantially on the baseline model by employing a two-step physics-aligned approach: jointly predict the neutron flux field and the absorption cross-section field using a multi-output FNO, then compute the homogenised cross-section from these predicted fields. Figure 5 illustrates the predicted flux field for a representative pin cell. This approach achieves an \(R^2\) score of 0.97 (see Figure 7, below), compared with 0.80 for the baseline.
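For reference, the \(R^2\) score (coefficient of determination) used to compare the two models can be computed as in the sketch below. The function name and the four sample values are hypothetical and chosen only to make the arithmetic easy to follow; they are not results from this study.

```python
import numpy as np


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)         # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
    return 1.0 - ss_res / ss_tot


# Hypothetical homogenised cross-sections: reference values versus predictions.
sigma_reference = np.array([0.10, 0.12, 0.14, 0.16])
sigma_predicted = np.array([0.11, 0.12, 0.13, 0.16])

score = r2_score(sigma_reference, sigma_predicted)
print(f"R^2 = {score:.2f}")
```

A score of 1 corresponds to perfect predictions, while a model that always predicts the mean of the reference values scores 0.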

These results illustrate the effect of a non-injective feature representation, in which multiple distinct pin cells have similar scalar descriptors but distinct outputs, limiting what a feature-based regression model can learn.

The two-step physics-aligned approach preserves the information required to distinguish between these cases and generalises better. Across engineering and scientific domains, there are numerous problems where retaining spatial information, rather than compressing it into scalars, leads to substantially better generalisability.

Integrating AI surrogates

This guide provides a practical workflow for developers and engineers in the nuclear industry to build AI surrogates using PhysicsNeMo and integrate them into their design processes.

We have focused on a relatively simple pin cell example, where jointly predicting the neutron flux field and the absorption cross-section field—and then computing the homogenised cross-section—yields substantially higher accuracy than directly predicting the homogenised cross-section from a set of scalar descriptors.

A feature-based regression model that maps scalar descriptors directly to the homogenised cross-section suffers from a non-injective feature representation: distinct geometries can share similar scalar summaries while producing meaningfully different flux distributions and hence different flux-weighted homogenised values.

In contrast, an FNO learns the operator mapping from geometry/material fields to both the flux field and the absorption cross-section field, preserving the spatial information that actually determines the flux weighting. Computing the homogenised cross-section from the predicted fields then enforces the correct physics-based aggregation, which substantially improves predictive accuracy and generalisation.

Going further

NVIDIA PhysicsNeMo significantly eases the process of training industry-scale surrogate models, providing a collection of optimized model architectures and utilities that simplify the implementation of distributed training (both data parallel and domain parallel).

By abstracting away the details of training models at scale, PhysicsNeMo lets developers and engineers focus on outcomes, and the resulting fast surrogate models reduce the time and computational cost of design exploration. Additionally, developers can leverage NVIDIA Omniverse libraries to create real-time digital twins.

We hope that this guide will serve as motivation for domain experts to utilize PhysicsNeMo in their design workflows, extending this approach to the most computationally demanding problems in the nuclear industry.

Getting started

If you are a SciML developer or an AI Physics practitioner, PhysicsNeMo is a powerful tool for extending your PyTorch stack. Instead of building everything from scratch, you can import PhysicsNeMo modules to develop AI Physics surrogates quickly and simply. The following resources provide a step-by-step starting point:

- Using PhysicsNeMo for building fuel pin cell surrogate

- PhysicsNeMo Getting Started Guide

- PhysicsNeMo Reference Samples