Like so many schools and universities during the COVID-19 pandemic, the University of Pisa, one of Italy’s oldest universities, had to find ways to keep research going while many students attended classes remotely.

The university’s Research Computing department focuses on deep learning and machine learning applications, which it normally runs on on-premises bare-metal systems. This bare-metal infrastructure is typically kept separate from the virtualized infrastructure that runs the traditional applications students and faculty also need. The result is a siloed environment that adds management complexity.

During the pandemic, the department was an early-access user of the new NVIDIA AI Enterprise software suite, which is optimized for VMware vSphere environments and delivers computational performance comparable to bare-metal systems.

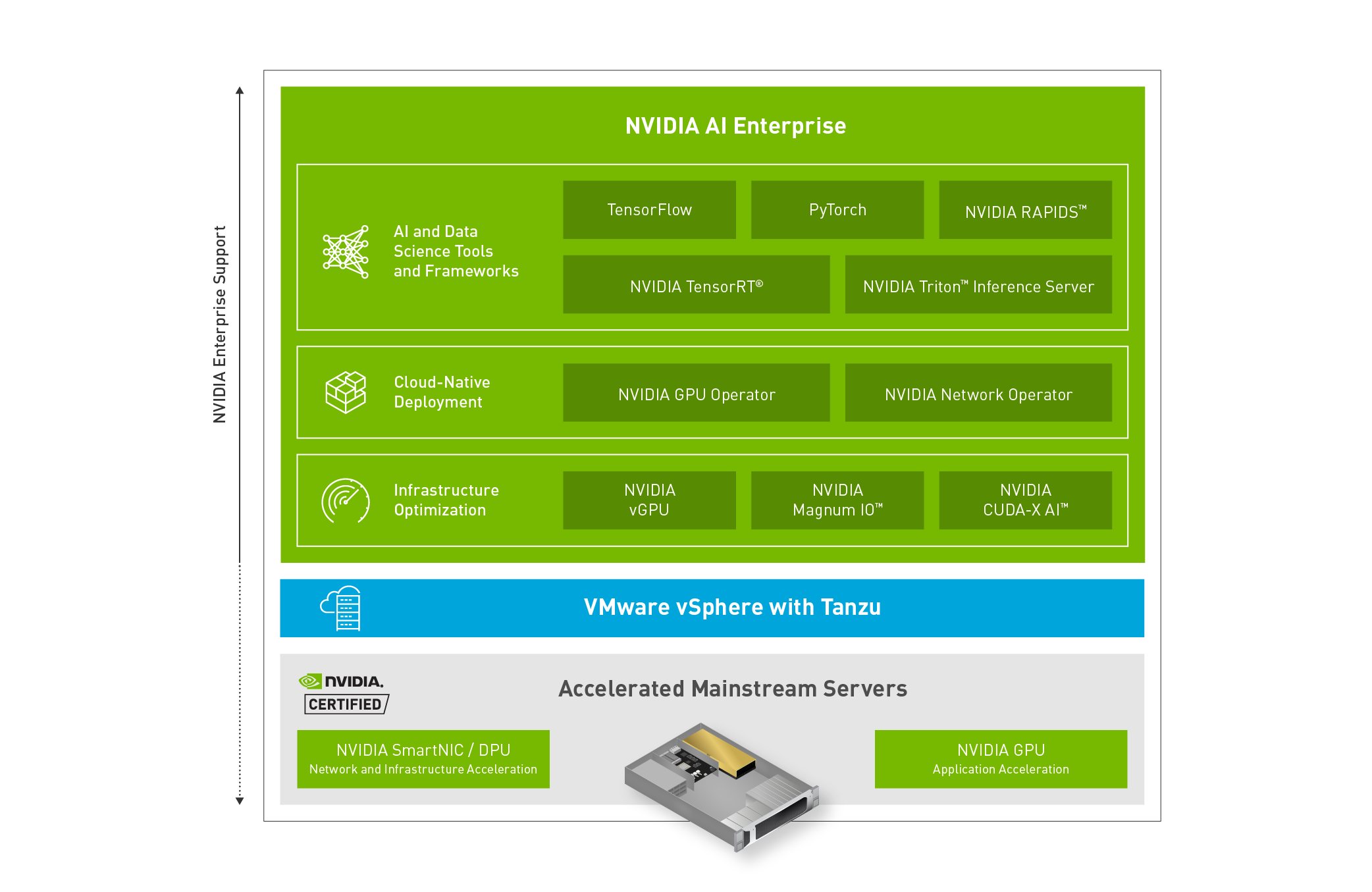

NVIDIA AI Enterprise is an end-to-end, cloud-native suite of AI software that is certified to run on VMware vSphere 7 Update 2 or later and on NVIDIA-Certified accelerated mainstream servers, such as Dell EMC PowerEdge. The suite includes key enabling technologies and software from NVIDIA for rapidly deploying, managing, and scaling AI workloads in the virtualized data center. NVIDIA AI Enterprise is certified, licensed, and supported by NVIDIA.

Maurizio Davini, CTO for the University of Pisa, and Prof. Davide Bacciu’s team knew that their on-premises infrastructure not only met the computational needs of many groups at the university but was also backed by an experienced IT support team.

To build a scalable, more manageable environment that integrates better with existing infrastructure, the university looked to NVIDIA AI Enterprise running on VMware vSphere with NVIDIA-Certified Dell EMC VxRail hyperconverged servers. First, however, the university needed to confirm that the virtualized AI infrastructure could match the performance of its local bare-metal systems.

Bare-metal performance in a virtualized environment

To conduct an “apples-to-apples” comparison, CTO Davini’s bare-metal setup used a single NVIDIA A100 Tensor Core GPU in a Dell EMC PowerEdge XE8545 server running NVIDIA DGX OS. For the virtualized environment, an NVIDIA A100 GPU and NVIDIA BlueField data processing units (DPUs) were installed in a Dell EMC VxRail server running VMware vSphere and the NVIDIA AI Enterprise software suite. The Research Computing department then ran two AI workloads: one on graphs of specific biological data and another using MIDI files for music generation.

With data on hand as coordinators of the CLAIRE-COVID-19 bioinformatics groups, CTO Davini and Prof. Bacciu’s team ran a deep learning workload on graphs representing biological interaction networks of proteins, diseases, genes, and cellular information. Applying deep learning to graphs is one of the fastest-growing research topics in AI, and it is advancing hybrid symbolic and subsymbolic systems in several application fields.

Each dataset contained over 6,000 molecular graphs, comprising more than 45,000 nodes and 74,000 edges. Drawing on the Graph Isomorphism Network (GIN) architecture, the team trained 36 different graph network configurations with 2 to 3 layers and a total of 350 to 700 hidden units, each combined with a multilayer perceptron for classification.
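
To make the GIN building block concrete, here is a minimal pure-Python sketch of one GIN layer, which updates each node as \(h_v' = \mathrm{MLP}((1+\epsilon)\,h_v + \sum_{u \in N(v)} h_u)\), followed by a sum readout that turns node embeddings into a graph embedding for a downstream classifier. It is an illustration under simplifying assumptions, not the team’s PyDGN code: the helper names (`make_mlp`, `gin_layer`, `sum_readout`) are hypothetical, the "MLP" is a single linear layer with ReLU, and the weights are fixed rather than learned.

```python
from typing import Callable, Dict, List

Vector = List[float]


def make_mlp(weights: List[Vector], bias: Vector) -> Callable[[Vector], Vector]:
    """Single linear layer + ReLU (a simplified stand-in for GIN's deeper MLP)."""
    def mlp(x: Vector) -> Vector:
        return [
            max(0.0, sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, bias)
        ]
    return mlp


def gin_layer(
    features: Dict[int, Vector],
    adjacency: Dict[int, List[int]],
    mlp: Callable[[Vector], Vector],
    eps: float = 0.0,
) -> Dict[int, Vector]:
    """One GIN update: h_v' = MLP((1 + eps) * h_v + sum of neighbor features)."""
    updated = {}
    for v, h_v in features.items():
        agg = [(1.0 + eps) * x for x in h_v]  # weighted self term
        for u in adjacency.get(v, []):
            agg = [a + b for a, b in zip(agg, features[u])]  # sum over neighbors
        updated[v] = mlp(agg)
    return updated


def sum_readout(features: Dict[int, Vector]) -> Vector:
    """Graph-level embedding: sum the node embeddings dimension by dimension."""
    return [sum(dims) for dims in zip(*features.values())]


# Toy 3-node path graph 0-1-2 with 2-dimensional node features.
features = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
adjacency = {0: [1], 1: [0, 2], 2: [1]}
identity_mlp = make_mlp(weights=[[1.0, 0.0], [0.0, 1.0]], bias=[0.0, 0.0])

hidden = gin_layer(features, adjacency, identity_mlp)
graph_embedding = sum_readout(hidden)
```

Stacking 2 or 3 such layers and feeding the graph embedding into a classifier MLP mirrors, in miniature, the kind of configuration described above; the sum aggregation is what distinguishes GIN from mean- or max-pooling GNNs.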

This experiment used PyDGN, a publicly available Python library on GitHub designed specifically for graph neural networks. Every graph neural network configuration was trained for 10,000 epochs, where an epoch is one complete pass of the machine learning algorithm through the entire training dataset.
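
The difference between an epoch and an individual optimizer step is easy to blur, so the sketch below shows a generic mini-batch training loop in which each epoch visits every training example exactly once. It is deliberately toy-sized and is not how PyDGN structures training: the `train` function, the one-parameter linear model, and the learning rate are all hypothetical choices made for illustration.

```python
import random
from typing import List, Tuple


def train(
    data: List[Tuple[float, float]],
    epochs: int,
    batch_size: int,
    lr: float = 0.1,
    seed: int = 0,
) -> Tuple[float, int, int]:
    """Fit y = w * x by mini-batch gradient descent on mean squared error.

    One epoch is one complete pass over every example in `data`.
    Returns the learned weight, the number of optimizer steps taken,
    and the total number of examples processed.
    """
    rng = random.Random(seed)
    w = 0.0
    steps = 0
    examples_seen = 0
    for _ in range(epochs):
        order = list(range(len(data)))
        rng.shuffle(order)  # visit the examples in a new order each epoch
        for start in range(0, len(order), batch_size):
            batch = [data[i] for i in order[start:start + batch_size]]
            # Gradient of mean((w*x - y)^2) over the batch with respect to w.
            grad = sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)
            w -= lr * grad
            steps += 1
            examples_seen += len(batch)
    return w, steps, examples_seen


# Ten noise-free points on y = 3x; 200 epochs with batches of 4.
data = [(i / 10, 3 * i / 10) for i in range(10)]
w, steps, examples_seen = train(data, epochs=200, batch_size=4)
```

With 10 examples and a batch size of 4, each epoch takes three steps (batches of 4, 4, and 2), so 200 epochs amount to 600 steps and 2,000 processed examples. The same arithmetic explains why 10,000 epochs per configuration adds up quickly across 36 configurations.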

Professor Bacciu’s team also developed a transformer-based adversarial autoencoder for music generation, which they dubbed MusAE 2.0. Transformer-based deep learning relies on an attention mechanism, a method for weighting and prioritizing the relationships between variables in a dataset. Attention is traditionally used in natural language processing, where it identifies the context of a word or sound within the input data in order to predict and generate the desired output.
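
The weighting described above is, in the standard Transformer, scaled dot-product attention: \(\mathrm{softmax}(QK^\top / \sqrt{d_k})\,V\). The pure-Python sketch below implements that formula on small lists so the mechanics are visible; it is the textbook formulation, not code taken from MusAE 2.0, and the helper names are hypothetical.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Tuple[Matrix, Matrix]:
    """Return softmax(Q K^T / sqrt(d_k)) V together with the attention weights."""
    d_k = len(q[0])
    scores = matmul(q, transpose(k))  # similarity of each query to each key
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights


# Two identical keys: the single query attends to both equally,
# so the output is the average of the two value vectors.
out, weights = scaled_dot_product_attention(
    [[1.0, 0.0]], [[1.0, 0.0], [1.0, 0.0]], [[2.0, 0.0], [0.0, 2.0]]
)
```

Each row of `weights` sums to 1, so every output row is a weighted average of the value vectors, with more weight on keys that are more similar to the query.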

The team’s model used an embedding size of 256. An embedding represents data, in this case songs, as vectors of numbers that the machine learning algorithm can compute on. Each layer has 512 hidden neurons and four attention heads; each head computes its own set of attention weights over the relationships in the data.
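
In a standard multi-head Transformer, the model dimension is divided evenly among the heads, so an embedding size of 256 with four heads gives each head a 64-dimensional slice. The sketch below shows only that reshaping step (after the learned query, key, and value projections, which are omitted); `split_heads` and `merge_heads` are hypothetical helper names, and the assumption that MusAE 2.0 follows this standard layout is ours.

```python
from typing import List

Matrix = List[List[float]]


def split_heads(x: Matrix, num_heads: int) -> List[Matrix]:
    """Split each token's d_model-wide vector into num_heads slices of width d_model // num_heads."""
    d_model = len(x[0])
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    head_dim = d_model // num_heads
    return [[row[h * head_dim:(h + 1) * head_dim] for row in x] for h in range(num_heads)]


def merge_heads(heads: List[Matrix]) -> Matrix:
    """Concatenate the per-head slices back into d_model-wide token vectors."""
    num_tokens = len(heads[0])
    return [[value for head in heads for value in head[t]] for t in range(num_tokens)]


d_model, num_heads, seq_len = 256, 4, 3  # 256 and 4 come from the text; seq_len is arbitrary
tokens = [[float(t * d_model + i) for i in range(d_model)] for t in range(seq_len)]
heads = split_heads(tokens, num_heads)
```

Each of the four heads then runs attention independently on its 64-dimensional slice, and `merge_heads` concatenates the results before the layer’s output projection.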

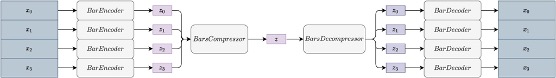

Prof. Bacciu’s team used six layers in both the encoder and the decoder: the encoder converts the input into a vector representation, and the decoder converts that representation back into music. The model was trained on 100,000 MIDI files from the Lakh MIDI dataset.

In Figure 2, the inputs \(x_{n}\) are encoded into intermediate representations \(Z_{ns}\), which are then compressed into a smaller representation \(Z\) that captures the relevant information from the inputs. \(Z\) is then decompressed and decoded back into MIDI format with the desired output features.
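
For readers unfamiliar with adversarial autoencoders, the generic objective from Makhzani et al.’s original formulation combines a reconstruction loss with an adversarial game on the latent code. Here \(q_\phi\) is a deterministic encoder producing \(Z = q_\phi(x)\), \(p_\theta\) is the decoder, \(D_\psi\) is a discriminator, and \(p(Z)\) is a chosen prior. This is the textbook form under those assumptions; the exact losses used in MusAE 2.0 are not described here and may differ.

\[
\mathcal{L}_{\text{rec}}(\phi, \theta) = \mathbb{E}_{x \sim p_{\text{data}}}\!\left[-\log p_\theta\!\left(x \mid q_\phi(x)\right)\right]
\]

\[
\min_{\phi}\,\max_{\psi}\; \mathbb{E}_{Z \sim p(Z)}\!\left[\log D_\psi(Z)\right] + \mathbb{E}_{x \sim p_{\text{data}}}\!\left[\log\!\left(1 - D_\psi\!\left(q_\phi(x)\right)\right)\right]
\]

Training alternates between minimizing \(\mathcal{L}_{\text{rec}}\) and playing the adversarial game, which pushes the encoded representations \(Z\) toward the prior so that sampling from \(p(Z)\) and decoding yields plausible new music.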

After running the AI experiments, with training runs averaging 25 minutes each, CTO Davini and the testing teams concluded that there is a negligible performance difference between a GPU-accelerated bare-metal setup and a virtualized environment running NVIDIA AI Enterprise on NVIDIA-Certified accelerated servers. These findings mean that researchers and scientists at the University of Pisa can choose either GPU-accelerated option without sacrificing performance.

Bare-metal systems will remain a vital component of the school’s AI research infrastructure. Going forward, however, NVIDIA-Certified Dell EMC VxRail hyperconverged servers running VMware vSphere and NVIDIA AI Enterprise offer the same compute performance, with the added benefit of a flexible platform that is easy to deploy and manage. Because NVIDIA AI Enterprise runs on mainstream servers, those servers can also run other enterprise applications alongside AI workloads, keeping them well utilized as the university’s needs change.

Making accelerated AI accessible to all

One of the most notable benefits the department has seen from NVIDIA AI Enterprise is how quickly and easily it can deploy computational resources. Using DeepOps, the department automates the deployment of Kubernetes across clusters of worker nodes. DeepOps also installs the necessary GPU drivers, the NVIDIA Container Toolkit for Docker, and other components needed for GPU-accelerated workloads.

Kubernetes, in turn, automates the deployment, scaling, and management of containerized applications. The department also takes advantage of the NVIDIA NGC Catalog, a repository of standardized packages, including containers, pretrained models, and AI SDKs, that is included in the NVIDIA AI Enterprise suite, making it easy for any student to install the software they need.
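
Once DeepOps has set up Kubernetes with GPU support, a student workload is typically just a container from NGC that requests a GPU. The sketch below builds such a Pod manifest in Python and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. It is a hypothetical example, not the department’s configuration: the `gpu_pod_manifest` helper, the pod name, and the image tag are illustrative, and it assumes the NVIDIA device plugin is running so that GPUs are schedulable as the `nvidia.com/gpu` resource.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a minimal Kubernetes Pod that requests `gpus` NVIDIA GPUs.

    Assumes the NVIDIA device plugin advertises GPUs as `nvidia.com/gpu`.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Print the GPUs visible inside the container, then exit.
                    "command": ["nvidia-smi"],
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


# Illustrative NGC image reference; substitute whichever container and tag you need.
manifest = gpu_pod_manifest("gpu-smoke-test", "nvcr.io/nvidia/pytorch:21.07-py3")
print(json.dumps(manifest, indent=2))
```

Saving the printed JSON to a file and running `kubectl apply -f` on it would schedule the pod onto a node with a free GPU; the same pattern extends to Jobs or Deployments for longer training runs.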

CTO Davini and the University of Pisa plan to keep their mixed environment of bare-metal systems and the NVIDIA AI Enterprise virtualized environment, including the Dell EMC PowerEdge XE8545 and VxRail servers, into the future. The department traditionally focuses on deep learning experiments and quantum computing simulators, but it is also seeing benefits for high-performance computing (HPC) workloads from running NVIDIA AI Enterprise on NVIDIA-Certified servers. The university views the solution’s ease of management, scalability, and performance as key factors in building its next-generation computing infrastructure.

For more information, watch the upcoming Making AI Accessible from Anywhere to Students, Faculty, and Researchers GTC session, hosted by Maurizio Davini, the university’s CTO.