Mixture of Experts (MoE)

Mar 12, 2026

Build Next-Gen Physical AI with Edge‑First LLMs for Autonomous Vehicles and Robotics

Physical AI is rapidly evolving, from next-generation software-defined autonomous vehicles (AVs) to humanoid robots. The challenge is no longer how to run a...

7 MIN READ

Mar 11, 2026

Introducing Nemotron 3 Super: An Open Hybrid Mamba-Transformer MoE for Agentic Reasoning

Agentic AI systems need models with the specialized depth to solve dense technical problems autonomously. They must excel at reasoning, coding, and long-context...

12 MIN READ

Mar 09, 2026

Removing the Guesswork from Disaggregated Serving

Deploying and optimizing large language models (LLMs) for high-performance, cost-effective serving can be an overwhelming engineering problem. The ideal...

10 MIN READ

Feb 27, 2026

Develop Native Multimodal Agents with Qwen3.5 VLM Using NVIDIA GPU-Accelerated Endpoints

Alibaba has introduced the new open source Qwen3.5 series built for native multimodal agents. The first model in this series is a ~400B parameter native...

3 MIN READ

Feb 02, 2026

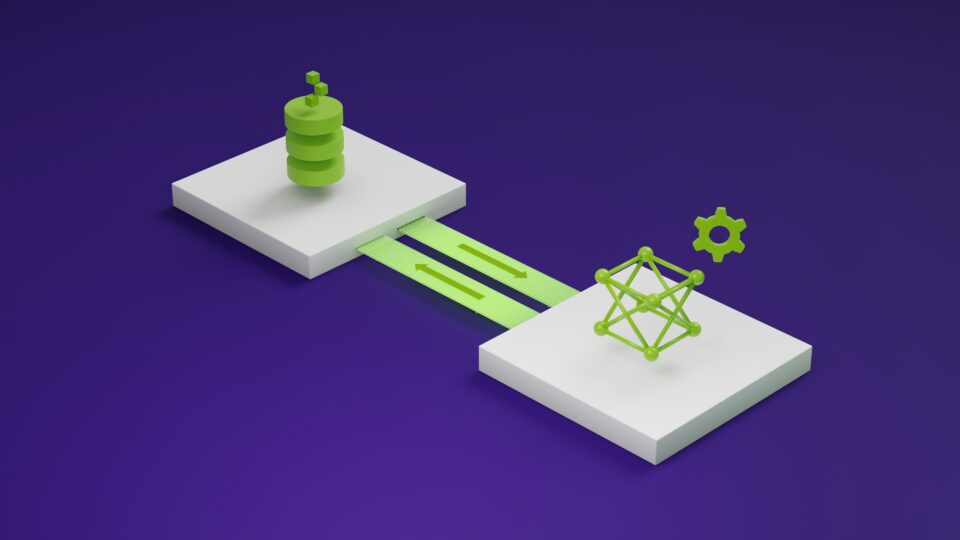

Optimizing Communication for Mixture-of-Experts Training with Hybrid Expert Parallel

In LLM training, Expert Parallel (EP) communication for hyperscale mixture-of-experts (MoE) models is challenging. EP communication is essentially all-to-all,...

11 MIN READ

Jan 08, 2026

Delivering Massive Performance Leaps for Mixture of Experts Inference on NVIDIA Blackwell

As AI models continue to get smarter, people can rely on them for an expanding set of tasks. This leads users—from consumers to enterprises—to interact with...

6 MIN READ

Nov 06, 2025

Democratizing Large-Scale Mixture-of-Experts Training with NVIDIA PyTorch Parallelism

Training massive mixture-of-experts (MoE) models has long been the domain of a few advanced users with deep infrastructure and distributed-systems expertise....

7 MIN READ

Apr 30, 2024

Leverage Mixture of Experts-Based DBRX for Superior LLM Performance on Diverse Tasks

This week’s model release features DBRX, a state-of-the-art large language model (LLM) developed by Databricks. With demonstrated strength in programming and...

3 MIN READ

Mar 14, 2024

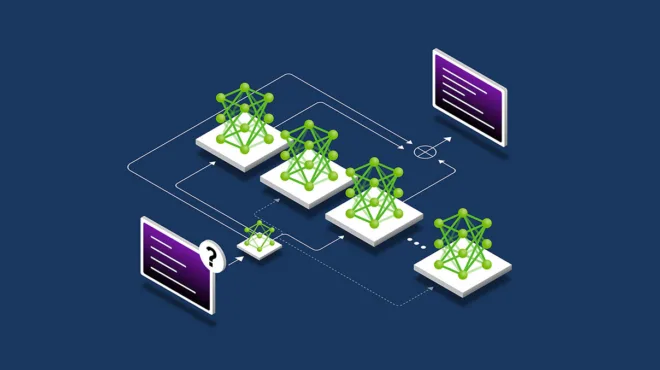

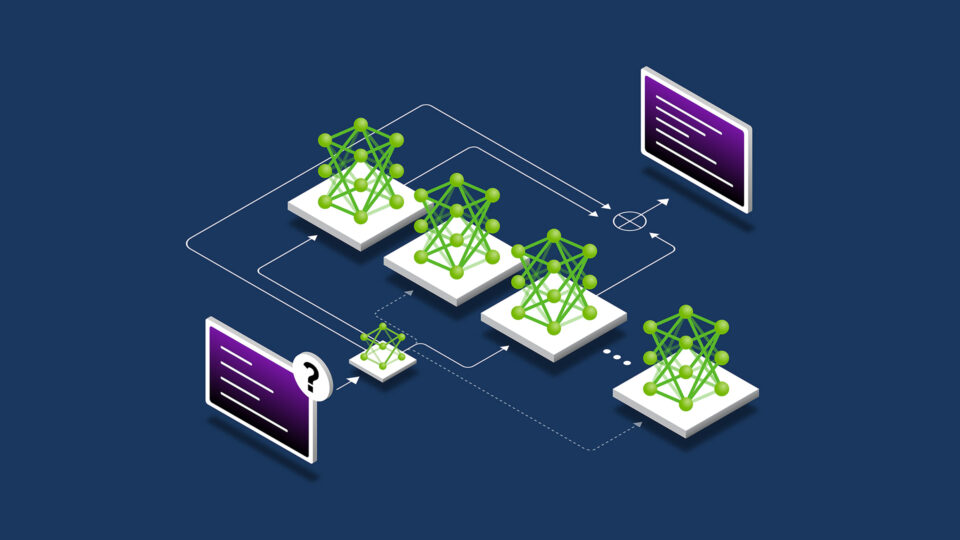

Applying Mixture of Experts in LLM Architectures

Mixture of experts (MoE) large language model (LLM) architectures have recently emerged, both in proprietary LLMs such as GPT-4 and in community models...

12 MIN READ