Posts by Mohammad Shoeybi

Agentic AI / Generative AI

Aug 08, 2023

Curating Trillion-Token Datasets: Introducing NVIDIA NeMo Data Curator

The latest developments in large language model (LLM) scaling laws have shown that when scaling the number of model parameters, the number of tokens used for...

8 MIN READ

AI / Deep Learning

Apr 12, 2021

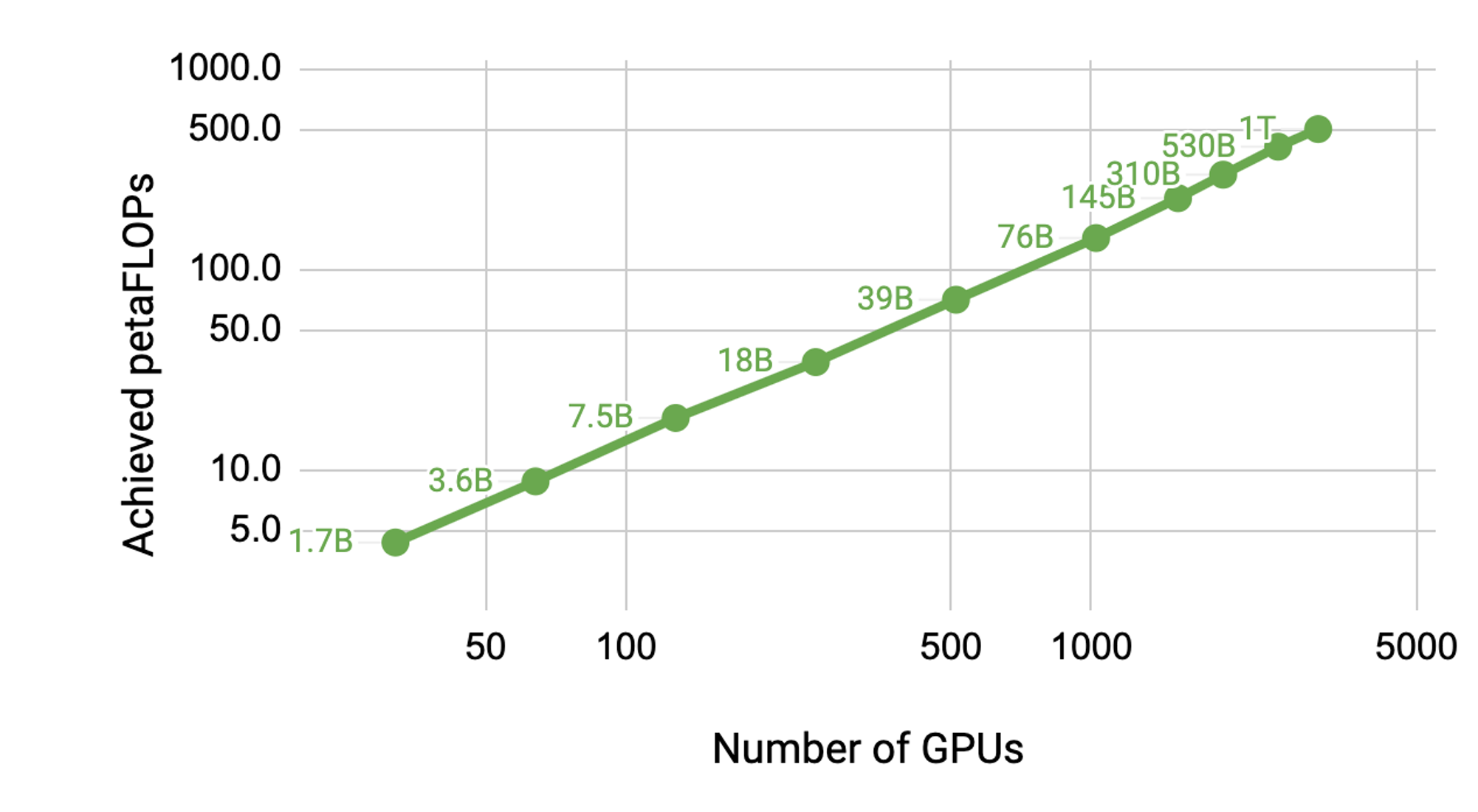

Scaling Language Model Training to a Trillion Parameters Using Megatron

Natural Language Processing (NLP) has seen rapid progress in recent years as computation at scale has become more available and datasets have become larger. At...

17 MIN READ

Simulation / Modeling / Design

Oct 06, 2020

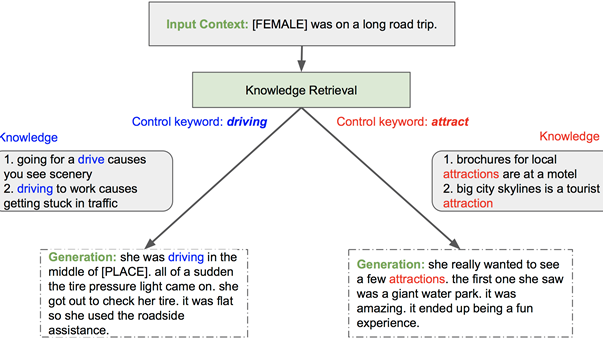

Adding External Knowledge and Controllability to Language Models with Megatron-CNTRL

Large language models such as Megatron and GPT-3 are transforming AI. We are excited about applications that can take advantage of these models to create better...

8 MIN READ

Conversational AI / NLP

May 14, 2020

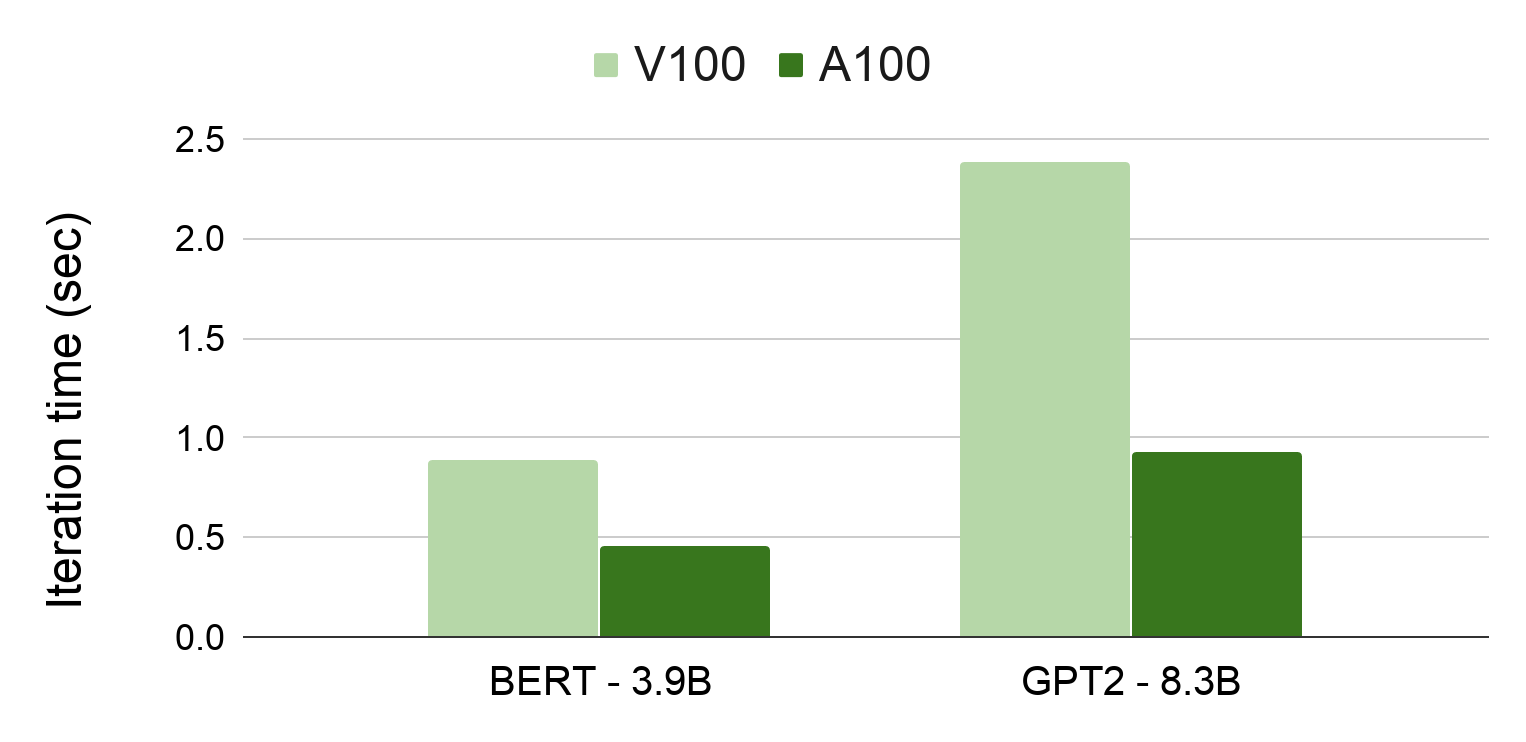

State-of-the-Art Language Modeling Using Megatron on the NVIDIA A100 GPU

Recent work has demonstrated that larger language models dramatically advance the state of the art in natural language processing (NLP) applications such as...

9 MIN READ