Posts by Jeff Pool

Computer Vision / Video Analytics

Jul 20, 2021

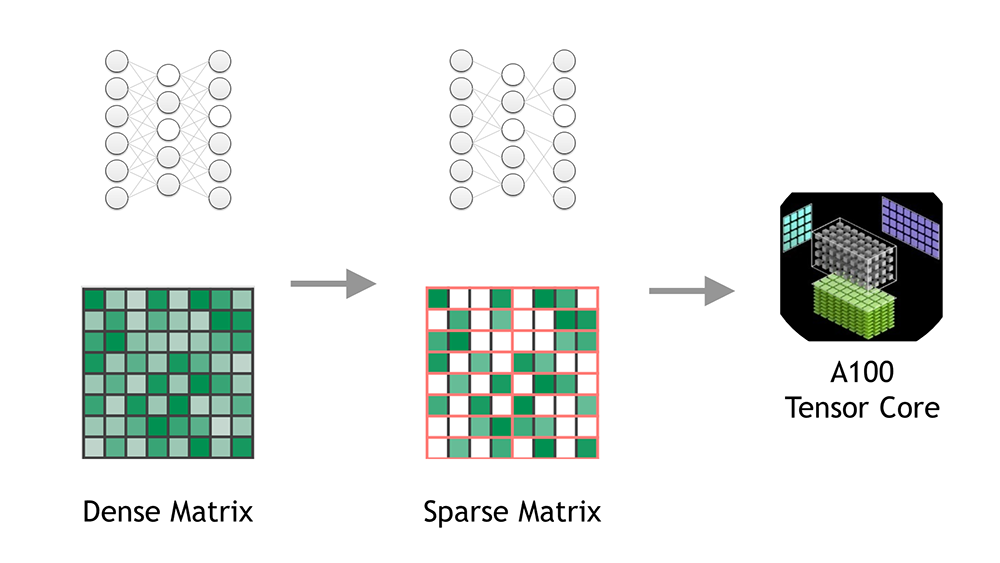

Accelerating Inference with Sparsity Using the NVIDIA Ampere Architecture and NVIDIA TensorRT

This post was updated July 20, 2021 to reflect NVIDIA TensorRT 8.0 updates. When deploying a neural network, it's useful to think about how the network could be...

8 MIN READ

Simulation / Modeling / Design

Dec 08, 2020

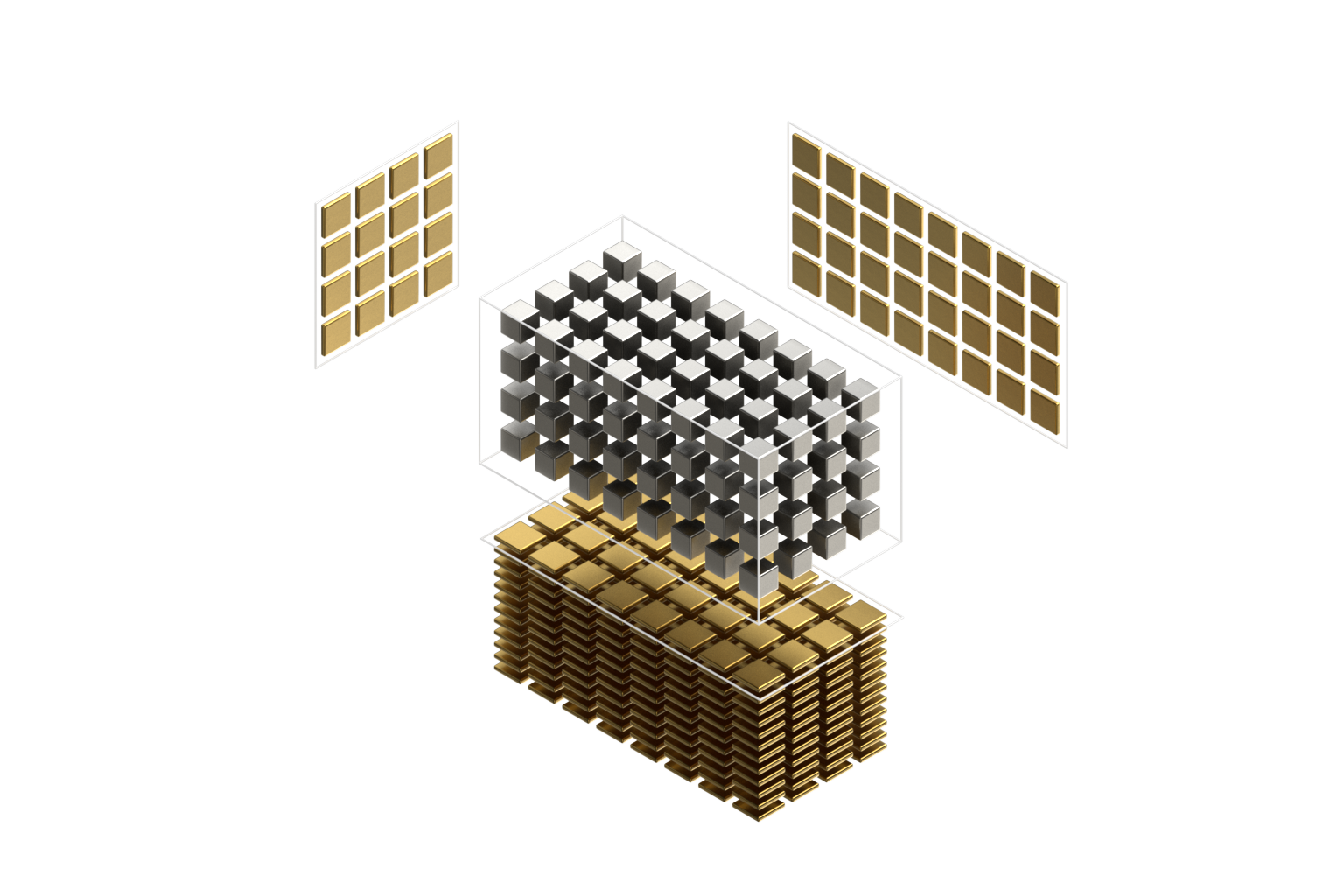

Exploiting NVIDIA Ampere Structured Sparsity with cuSPARSELt

Deep neural networks achieve outstanding performance in a variety of fields, such as computer vision, speech recognition, and natural language processing. The...

9 MIN READ