Hopper

Apr 02, 2026

Accelerating Vision AI Pipelines with Batch Mode VC-6 and NVIDIA Nsight

In vision AI systems, model throughput continues to improve. The surrounding pipeline stages must keep pace, including decode, preprocessing, and GPU...

10 MIN READ

Mar 25, 2026

Scaling Token Factory Revenue and AI Efficiency by Maximizing Performance per Watt

In the AI era, power is the ultimate constraint, and every AI factory operates within a hard limit. This makes performance per watt—the rate at which power is...

10 MIN READ

Mar 16, 2026

How NVIDIA Dynamo 1.0 Powers Multi-Node Inference at Production Scale

Reasoning models are growing rapidly in size and are increasingly being integrated into agentic AI workflows that interact with other models and external tools....

14 MIN READ

Jan 08, 2026

Delivering Massive Performance Leaps for Mixture of Experts Inference on NVIDIA Blackwell

As AI models continue to get smarter, people can rely on them for an expanding set of tasks. This leads users—from consumers to enterprises—to interact with...

6 MIN READ

Jan 05, 2026

Inside the NVIDIA Vera Rubin Platform: Six New Chips, One AI Supercomputer

Update March 16, 2026: The NVIDIA Vera Rubin platform now has a seventh chip. Learn more about NVIDIA Groq 3 LPX: The Low-Latency Inference Accelerator for the...

63 MIN READ

Dec 31, 2025

AI Factories, Physical AI, and Advances in Models, Agents, and Infrastructure That Shaped 2025

2025 was another milestone year for developers and researchers working with NVIDIA technologies. Progress in data center power and compute design, AI...

4 MIN READ

Dec 16, 2025

Accelerating Long-Context Inference with Skip Softmax in NVIDIA TensorRT LLM

For machine learning engineers deploying LLMs at scale, the equation is familiar and unforgiving: as context length increases, attention computation costs...

6 MIN READ

Dec 12, 2025

How to Scale Fast Fourier Transforms to Exascale on Modern NVIDIA GPU Architectures

Fast Fourier Transforms (FFTs) are widely used across scientific computing, from molecular dynamics and signal processing to computational fluid dynamics (CFD),...

8 MIN READ

Dec 11, 2025

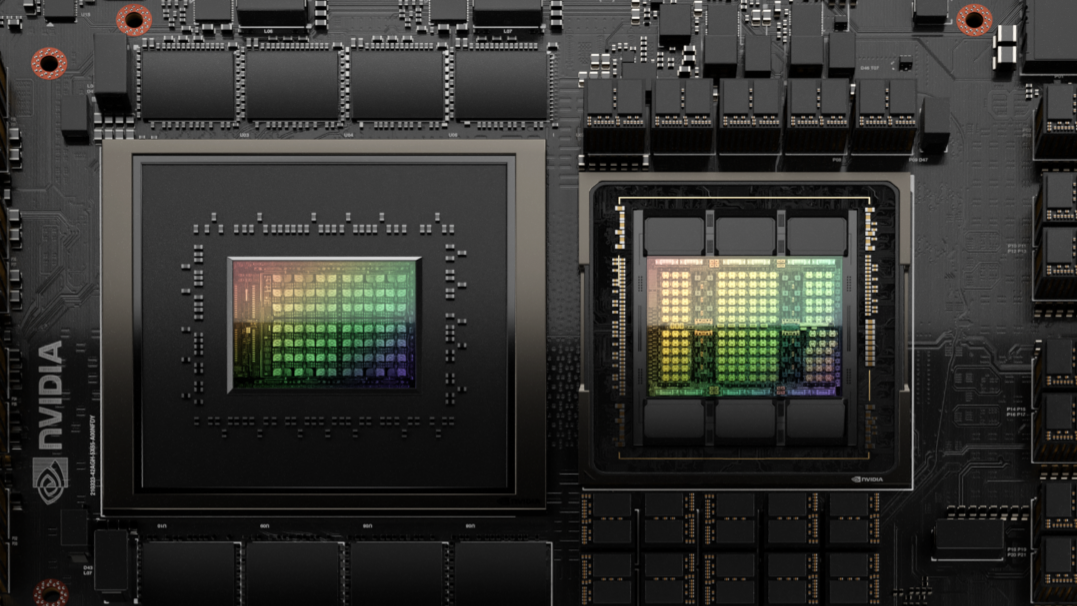

NVIDIA Blackwell Enables 3x Faster Training and Nearly 2x Training Performance Per Dollar than Previous-Gen Architecture

AI innovation continues to be driven by three scaling laws: pre-training, post-training, and test-time scaling. Training is foundational to building smarter...

7 MIN READ

Sep 16, 2025

Autodesk Research Brings Warp Speed to Computational Fluid Dynamics on NVIDIA GH200

Computer-aided engineering (CAE) forms the backbone for modern product development across industries, from designing safer aircraft to optimizing renewable...

8 MIN READ

Sep 05, 2025

Accelerate Large-Scale LLM Inference and KV Cache Offload with CPU-GPU Memory Sharing

Large Language Models (LLMs) are at the forefront of AI innovation, but their massive size can complicate inference efficiency. Models such as Llama 3 70B and...

7 MIN READ

Sep 02, 2025

Improving GEMM Kernel Auto-Tuning Efficiency on NVIDIA GPUs with Heuristics and CUTLASS 4.2

Selecting the best possible General Matrix Multiplication (GEMM) kernel for a specific problem and hardware is a significant challenge. The performance of a...

8 MIN READ

Aug 21, 2025

Less Coding, More Science: Simplify Ocean Modeling on GPUs With OpenACC and Unified Memory

NVIDIA HPC SDK v25.7 delivers a significant leap forward for developers working on high-performance computing (HPC) applications with GPU acceleration. This...

11 MIN READ

Jun 10, 2025

How Modern Supercomputers Powered by NVIDIA Are Pushing the Limits of Speed — and Science

Modern high-performance computing (HPC) is enabling more than just quick calculations — it’s powering AI systems that are unlocking scientific...

6 MIN READ

May 30, 2025

Telcos Across Five Continents Are Building NVIDIA-Powered Sovereign AI Infrastructure

AI is becoming the cornerstone of innovation across industries, driving new levels of creativity and productivity and fundamentally reshaping how we live and...

12 MIN READ

May 27, 2025

Advanced Optimization Strategies for LLM Training on NVIDIA Grace Hopper

In the previous post, Profiling LLM Training Workflows on NVIDIA Grace Hopper, we explored the importance of profiling large language model (LLM) training...

10 MIN READ