Networking / Communications

Apr 02, 2026

Accelerating Vision AI Pipelines with Batch Mode VC-6 and NVIDIA Nsight

In vision AI systems, model throughput continues to improve. The surrounding pipeline stages must keep pace, including decode, preprocessing, and GPU...

10 MIN READ

Apr 01, 2026

Accelerate Token Production in AI Factories Using Unified Services and Real-Time AI

In today’s AI factory environment, performance is not theoretical. It is economic, competitive, and existential. A 1% drop in usable GPU time can mean...

8 MIN READ

Mar 25, 2026

Scaling Token Factory Revenue and AI Efficiency by Maximizing Performance per Watt

In the AI era, power is the ultimate constraint, and every AI factory operates within a hard limit. This makes performance per watt—the rate at which power is...

10 MIN READ

Mar 23, 2026

Deploying Disaggregated LLM Inference Workloads on Kubernetes

As large language model (LLM) inference workloads grow in complexity, a single monolithic serving process starts to hit its limits. Prefill and decode stages...

14 MIN READ

Mar 17, 2026

Building the AI Grid with NVIDIA: Orchestrating Intelligence Everywhere

AI-native services are exposing a new bottleneck in AI infrastructure: As millions of users, agents, and devices demand access to intelligence, the challenge is...

11 MIN READ

Mar 16, 2026

Introducing NVIDIA BlueField-4-Powered CMX Context Memory Storage Platform for the Next Frontier of AI

AI-native organizations increasingly face scaling challenges as agentic AI workflows drive context windows to millions of tokens and models scale toward...

12 MIN READ

Mar 16, 2026

Design, Simulate, and Scale AI Factory Infrastructure with NVIDIA DSX Air

Building AI factories is complex and requires efficient integration across compute, networking, security, and storage systems. To achieve rapid Time to AI and...

5 MIN READ

Mar 16, 2026

NVIDIA Vera Rubin POD: Seven Chips, Five Rack-Scale Systems, One AI Supercomputer

Artificial intelligence is token-driven. Every prompt, reasoning step, and agent interaction generates tokens. Over the past year, token consumption has grown...

19 MIN READ

Mar 09, 2026

Enhancing Distributed Inference Performance with the NVIDIA Inference Transfer Library

Deploying large language models (LLMs) requires large-scale distributed inference, which spreads model computation and request handling across many GPUs and...

13 MIN READ

Feb 28, 2026

Building Telco Reasoning Models for Autonomous Networks with NVIDIA NeMo

Autonomous networks are quickly becoming one of the top priorities in telecommunications. According to the latest NVIDIA State of AI in Telecommunications...

10 MIN READ

Feb 28, 2026

5 New Digital Twin Products Developers Can Use to Build 6G Networks

To make 6G a reality, the telecom industry must overcome a fundamental challenge: how to design, train, and validate AI-native networks that are too complex to...

6 MIN READ

Feb 03, 2026

Accelerating Long-Context Model Training in JAX and XLA

Large language models (LLMs) are rapidly expanding their context windows, with recent models supporting sequences of 128K tokens, 256K tokens, and beyond....

9 MIN READ

Feb 02, 2026

Optimizing Communication for Mixture-of-Experts Training with Hybrid Expert Parallel

In LLM training, Expert Parallel (EP) communication for hyperscale mixture-of-experts (MoE) models is challenging. EP communication is essentially all-to-all,...

11 MIN READ

Jan 07, 2026

Redefining Secure AI Infrastructure with NVIDIA BlueField Astra for NVIDIA Vera Rubin NVL72

Large-scale AI innovation is driving unprecedented demand for accelerated computing infrastructure. Training trillion-parameter foundation models, serving them...

7 MIN READ

Jan 06, 2026

Scaling Power-Efficient AI Factories with NVIDIA Spectrum-X Ethernet Photonics

NVIDIA is bringing the world’s first optimized Ethernet networking with co-packaged optics to AI factories, enabling scale-out and scale-across on the NVIDIA...

4 MIN READ

Jan 05, 2026

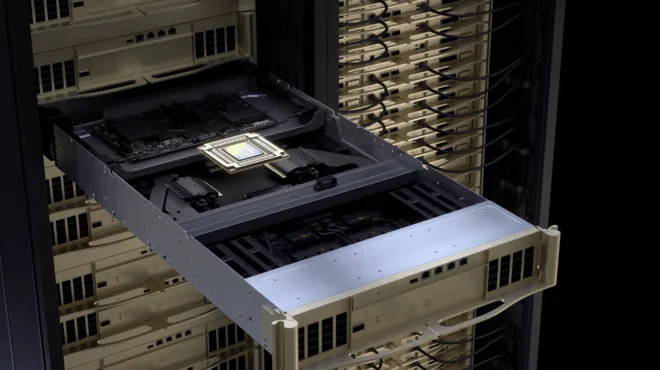

Inside the NVIDIA Vera Rubin Platform: Six New Chips, One AI Supercomputer

Update March 16, 2026: The NVIDIA Vera Rubin platform now has a seventh chip. Learn more about NVIDIA Groq 3 LPX: The Low-Latency Inference Accelerator for the...

63 MIN READ