Posts by Markel Ausin

Conversational AI / NLP

Jul 28, 2022

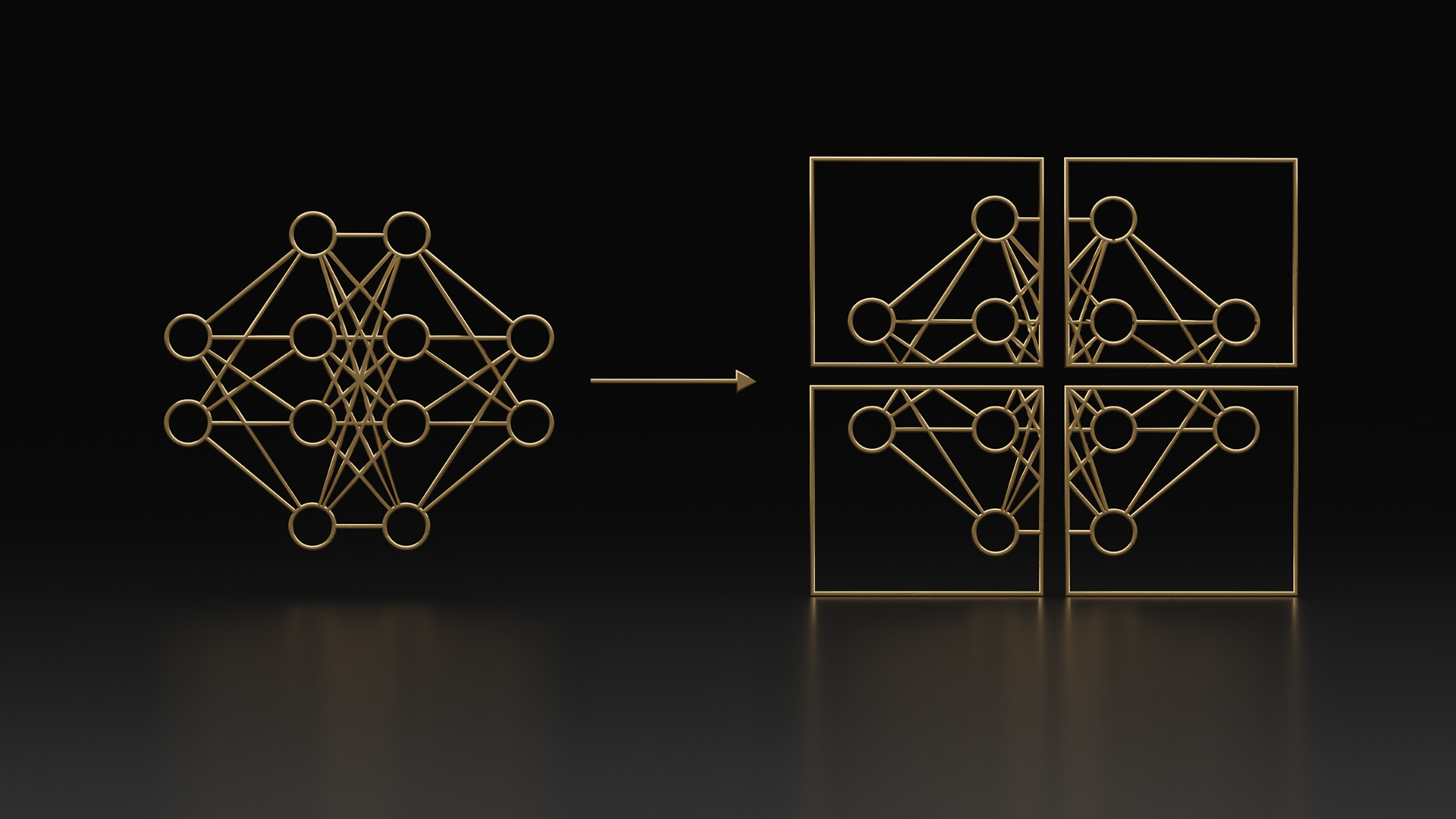

NVIDIA AI Platform Delivers Big Gains for Large Language Models

As the size and complexity of large language models (LLMs) continue to grow, NVIDIA is today announcing updates to the NeMo framework that provide training...

7 MIN READ