Posts by Deepak Narayanan

Data Center / Cloud

Mar 10, 2025

Ensuring Reliable Model Training on NVIDIA DGX Cloud

Training AI models on massive GPU clusters presents significant challenges for model builders. Because manual intervention becomes impractical as job scale...

8 MIN READ

Agentic AI / Generative AI

Jul 12, 2024

Train Generative AI Models More Efficiently with New NVIDIA Megatron-Core Functionalities

First introduced in 2019, NVIDIA Megatron-LM sparked a wave of innovation in the AI community, enabling researchers and developers to use the underpinnings of...

11 MIN READ

AI / Deep Learning

Apr 12, 2021

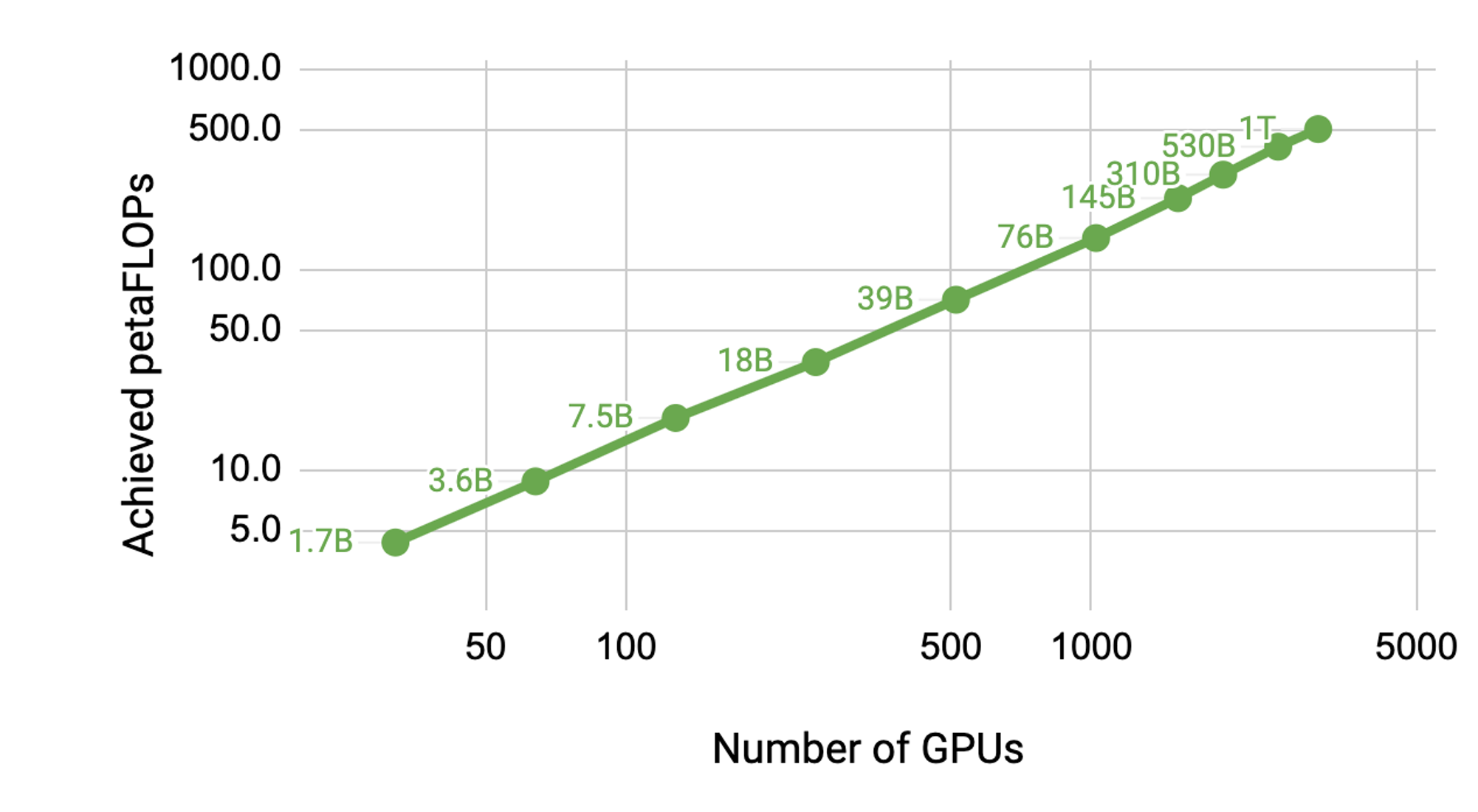

Scaling Language Model Training to a Trillion Parameters Using Megatron

Natural Language Processing (NLP) has seen rapid progress in recent years as computation at scale has become more available and datasets have become larger. At...

17 MIN READ