DRIVE Training

The best way to learn is by doing. To help you get started, we have assembled a series of tutorials and instructional materials featuring the latest developer innovations. Learn about the DRIVE AGX Platform, AV development and testing, generative AI in automotive, improving safety with software-defined vehicles, autonomous vehicle simulation, and much more.

GTC

GPU Technology Conference (GTC)

GTC highlights the latest breakthroughs in autonomous vehicles, AI, HPC, accelerated data science, graphics, and more. You can view our most recent automotive GTC sessions, as well as our DRIVE Developer Day sessions, which offer deep dives into safe and robust autonomous vehicle development.

Webinars

Upcoming Webinars

In each hour-long session, NVIDIA experts will dive into the details of various aspects of the end-to-end AV computational pipeline and will be available for live Q&A. Stay tuned for upcoming automotive developer webinars to learn more about the NVIDIA DRIVE AGX Platform. In the meantime, check out More Webinars.

NVIDIA Research

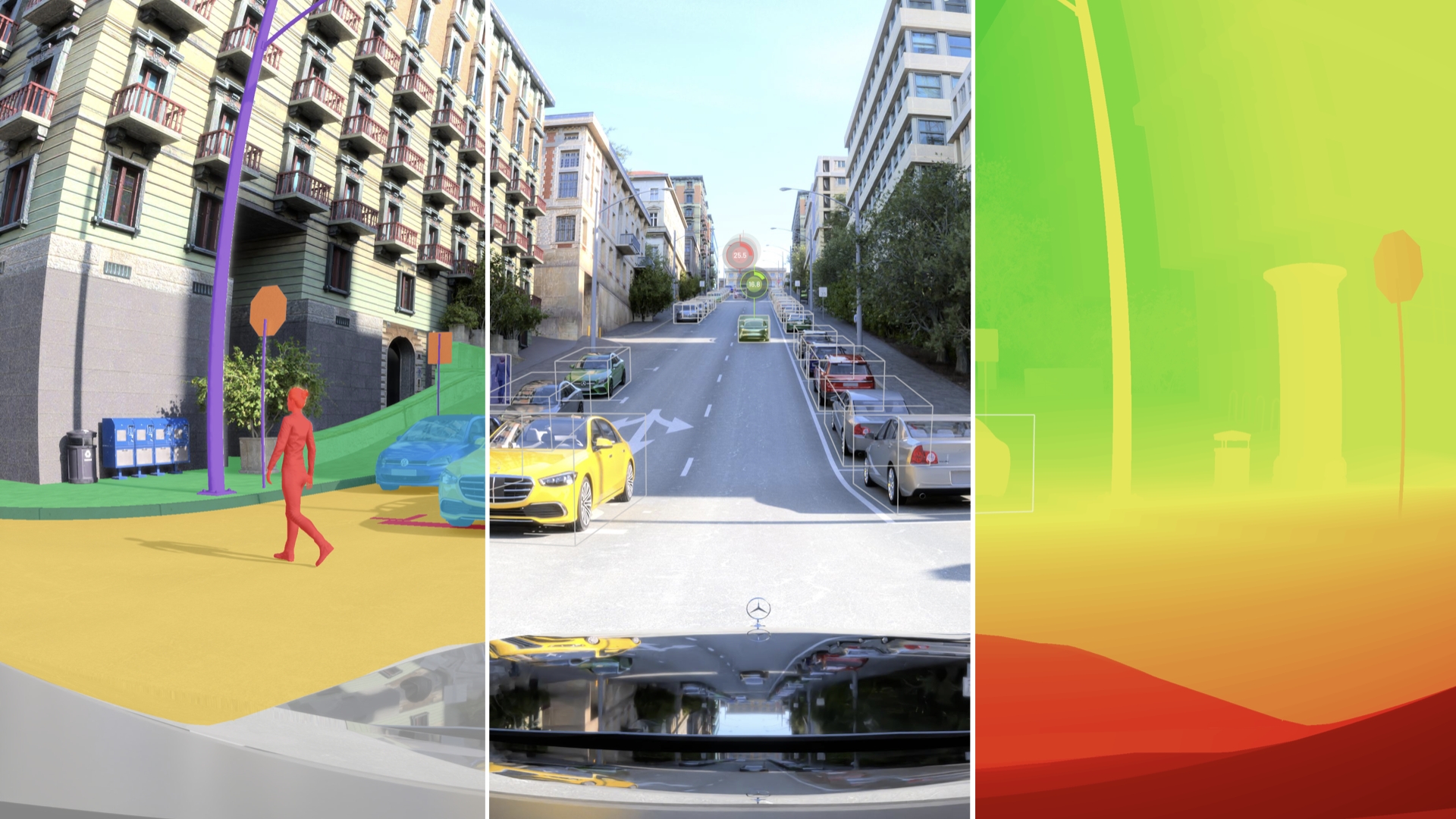

NVIDIA Research for Autonomous Vehicles

NVIDIA Research brings together a diverse and interdisciplinary set of researchers to address core topics in vehicle autonomy, ranging from perception and prediction to planning and control, as well as to advance the state of the art in a number of critical related fields, such as decision making under uncertainty, reinforcement learning, and the verification and validation of safety-critical AI systems.

The NVIDIA Research Autonomous Vehicle Research Group, led by Dr. Marco Pavone, focuses on:

- Next-Generation AV Architectures

- AV Foundation Models

- Simulation

- AI Safety for AVs

Learn more through our publications

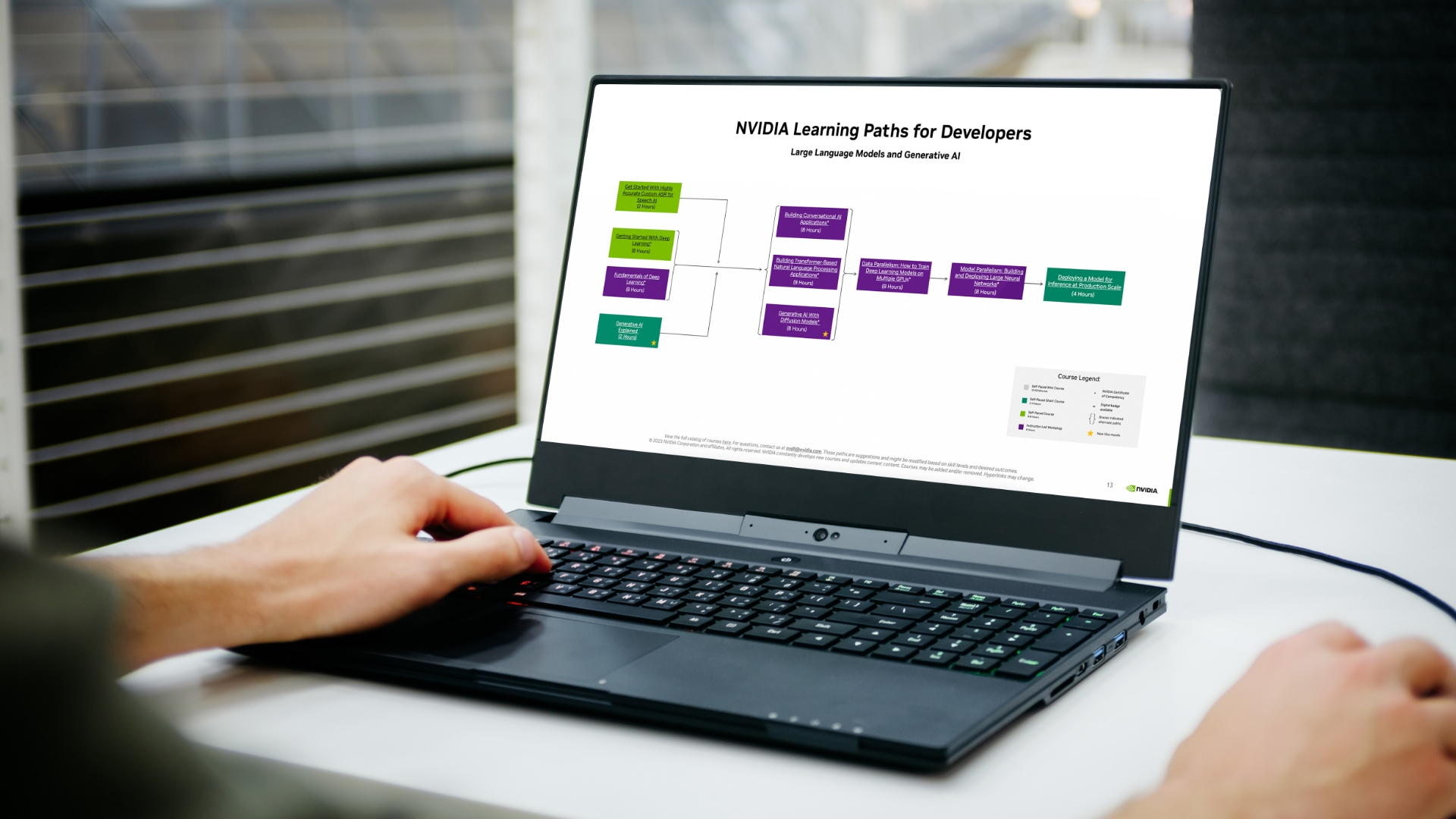

Deep Learning Institute (DLI)

The NVIDIA Deep Learning Institute (DLI) offers resources for diverse learning needs—from learning materials to self-paced and live training to educator programs. Individuals, teams, organizations, educators, and students can now find everything they need to advance their knowledge in AI, accelerated computing, accelerated data science, graphics and simulation, and more.

Explore DLI Solutions

DRIVE Videos

Peek under the hood of NVIDIA DRIVE with our latest video series.