Egomotion Sample#

Description#

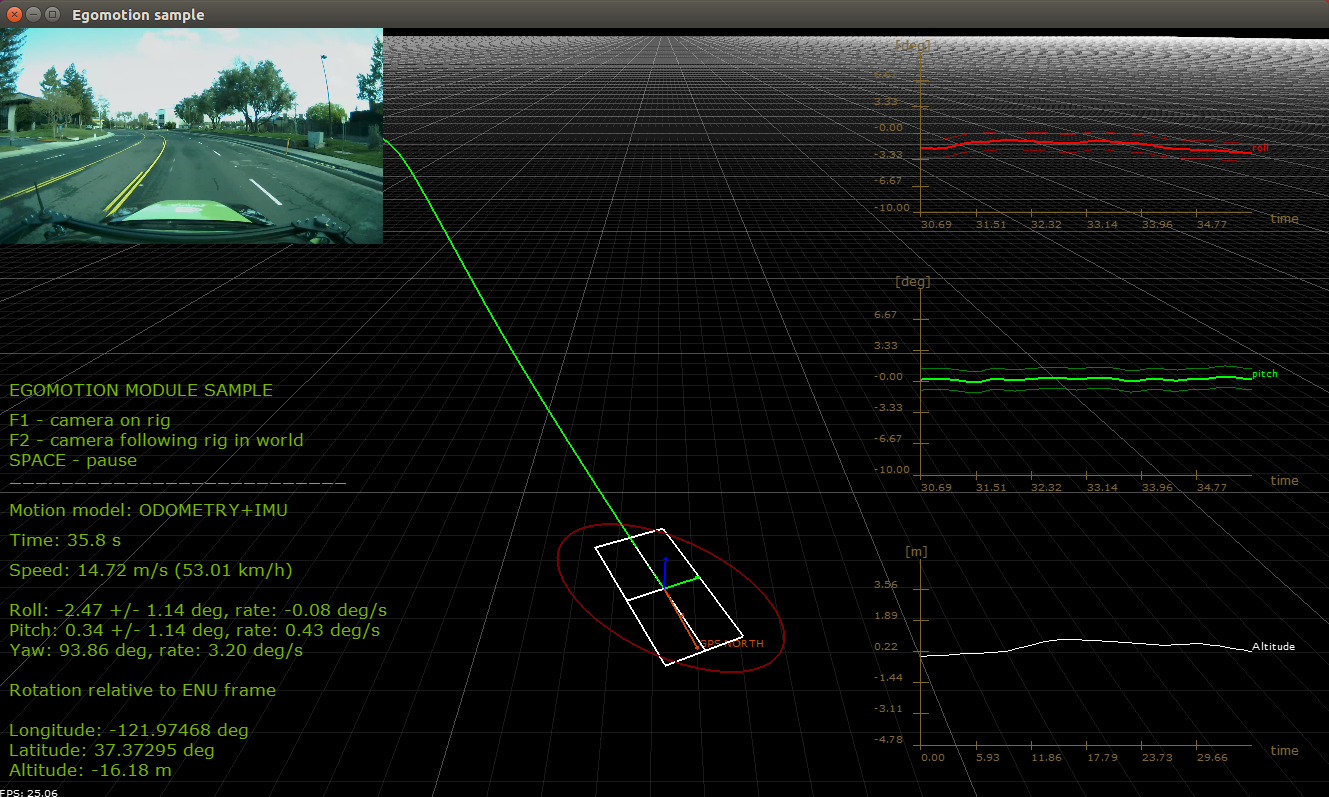

The Egomotion sample application shows how to use steering angle and velocity CAN measurements. It also explains how to use IMU and GPS measurements to compute vehicle position and orientation within the world coordinate system.

Running the Sample#

The command line for the sample is:

./sample_egomotion [options]

Options#

The sample accepts the following optional arguments:

- --camera-sensor-name={string}#

Name of the camera sensor in the given rig file. Defaults to the first camera sensor found in the rig file.

- --vehicle-sensor-name={string}#

Name of the sensor providing vehicle data in the given rig file. Defaults to the first CAN sensor found in the rig file.

- --gps-sensor-name={string}#

Name of the GPS sensor in the given rig file. Defaults to the first GPS sensor found in the rig file.

- --imu-sensor-name={string}#

Name of the IMU sensor in the given rig file. Defaults to the first IMU sensor found in the rig file.

- --mode=0|1[=1]#

The sample application supports different egomotion estimation modes. To switch the mode, pass --mode=0 or --mode=1 as the argument.

0 represents odometry-based egomotion estimation. The vehicle motion is estimated using the Ackermann principle.

1 uses IMU measurements to estimate vehicle motion. Gyroscope and linear accelerometer measurements are filtered and fused to estimate vehicle orientation. Using the vehicle's odometry speed reading, the vehicle’s traveling path can be estimated. This mode also filters GPS locations if they are passed to the module.
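To make the odometry-based mode more concrete, the following is a minimal sketch of dead reckoning with the kinematic bicycle approximation of Ackermann steering. It is not the DriveWorks implementation; the function name, the wheelbase value, and the sample measurements are all hypothetical and chosen only for illustration.

```python
import math

def integrate_ackermann(pose, speed, steering_angle, wheelbase, dt):
    """Advance a planar pose (x, y, yaw) by one time step.

    Kinematic bicycle approximation of Ackermann steering:
    yaw_rate = speed * tan(steering_angle) / wheelbase.
    Units assumed here: meters, seconds, radians.
    """
    x, y, yaw = pose
    yaw_rate = speed * math.tan(steering_angle) / wheelbase
    # Use the mid-step heading to reduce integration error on curves.
    mid_yaw = yaw + 0.5 * yaw_rate * dt
    x += speed * math.cos(mid_yaw) * dt
    y += speed * math.sin(mid_yaw) * dt
    yaw += yaw_rate * dt
    return (x, y, yaw)

# Hypothetical input: 10 m/s, wheels straight, 2.8 m wheelbase, 30 Hz updates.
pose = (0.0, 0.0, 0.0)
for _ in range(30):
    pose = integrate_ackermann(pose, 10.0, 0.0, 2.8, 1.0 / 30.0)
print(pose)
```

With a zero steering angle the pose only advances along x; a nonzero angle makes the yaw change in proportion to speed and inversely to the wheelbase.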

- --output={file}#

If specified, the sample application outputs the odometry data to this file. Each line contains a timestamp in microseconds followed by the vehicle’s position in world coordinates (x,y):

....
5680571648,-7.67,118.30
5680604981,-7.75,118.33
5680638314,-7.83,118.36
5680671647,-7.91,118.38
5680704980,-7.98,118.41
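As a sketch of how this output could be consumed, the snippet below parses the timestamp,x,y lines and computes the sampling interval and travelled distance. The helper names are hypothetical and not part of the sample, and the position unit is assumed to be meters, which the file format itself does not state.

```python
import math

def parse_odometry(text):
    """Parse 'timestamp_us,x,y' lines into (int, float, float) tuples."""
    samples = []
    for line in text.splitlines():
        fields = line.strip().split(",")
        if len(fields) != 3:
            continue  # skip blank lines and anything that is not a 3-field record
        try:
            samples.append((int(fields[0]), float(fields[1]), float(fields[2])))
        except ValueError:
            continue  # skip records with non-numeric fields
    return samples

def path_length(samples):
    """Sum of straight-line distances between consecutive positions."""
    return sum(
        math.hypot(b[1] - a[1], b[2] - a[2])
        for a, b in zip(samples, samples[1:])
    )

# First three records from the example output above.
example = """5680571648,-7.67,118.30
5680604981,-7.75,118.33
5680638314,-7.83,118.36"""

samples = parse_odometry(example)
# Timestamps are in microseconds; convert the first interval to seconds.
dt_s = (samples[1][0] - samples[0][0]) / 1e6
print(dt_s, path_length(samples))
```

For a file written with --output, the same function can be applied to the file contents read with open(path).read().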

- --outputkml={file.kml}#

If specified, the sample application writes the GPS and estimated locations to this KML file.

- --rig={file.json}[=data/samples/recordings/cloverleaf/rig-nominal-intrinsics.json]#

Rig file containing all information about vehicle sensors and calibration.

- --speed-measurement-type=0|1|2[=1]#

Speed measurement type to use. Refer to dwEgomotionSpeedMeasurementType.

- --enable-suspension=0|1[=0]#

Enables egomotion suspension modeling. It requires odometry and IMU (--mode=1).

See also Common Options.

You must provide a rig file that contains the rig configuration and sensor parameters.

Note

Depending on the locations given for --output and --outputkml, you may need to start the sample with sudo.

For more details on sensor parameters and usage, refer to CAN Bus, GPS, and IMU.

Examples#

Running the sample with default arguments#

./sample_egomotion

Running the sample with output file#

sudo ./sample_egomotion --output=/home/nvidia/out.txt

Output#

The sample application creates a window, displays a video, and plots the vehicle’s position at a 30 Hz sampling rate. The current speed, roll, pitch, and yaw are also printed.

Additional Information#

For more details see Egomotion.