Tutorials

April 12, 2024

Explainer: What Is a Convolutional Neural Network?

April 10, 2024

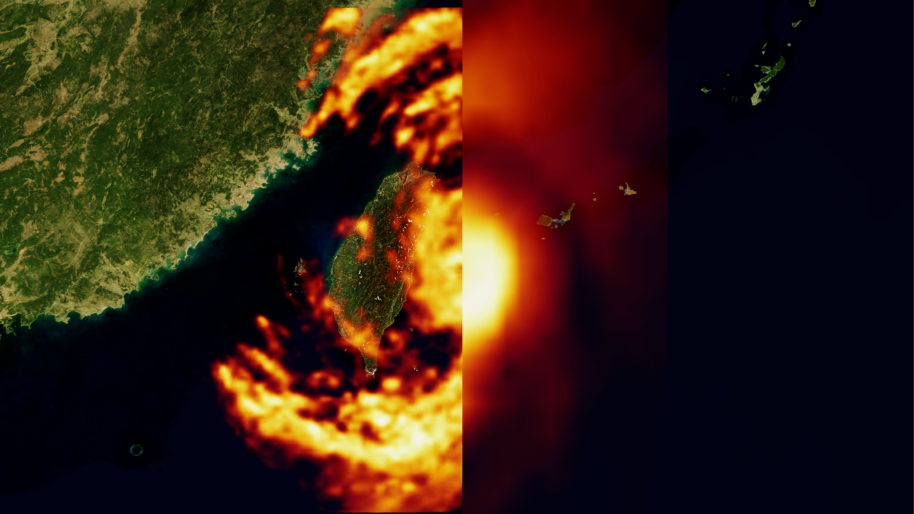

How Generative AI is Empowering Climate Tech with NVIDIA Earth-2

April 09, 2024

Next-Generation Live Media Apps on Repurposable Clusters with NVIDIA Holoscan for Media

News

April 11, 2024

New Video Series: OpenUSD for Developers

March 19, 2024

Generative AI for Digital Humans and New AI-powered NVIDIA RTX Lighting

March 19, 2024

NVIDIA Speech and Translation AI Models Set Records for Speed and Accuracy

March 19, 2024

Boost Multi-Omics Analysis with GPU-Acceleration and Generative AI

Training

Model Parallelism: Building and Deploying Large Neural Networks

Instructor-Led, Certificate Available