NVIDIA Data Center Deep Learning Product Performance

Reproducible Performance


View Performance Data For:

Latest NVIDIA Data Center Products

Training to Convergence

Deploying AI in real-world applications requires training networks to convergence at a specified accuracy. This is the best methodology to test whether AI systems are ready to be deployed in the field to deliver meaningful results.

AI Inference

Real-world inferencing demands high throughput and low latencies with maximum efficiency across use cases. An industry-leading solution lets customers quickly deploy AI models into real-world production with the highest performance from data center to edge.

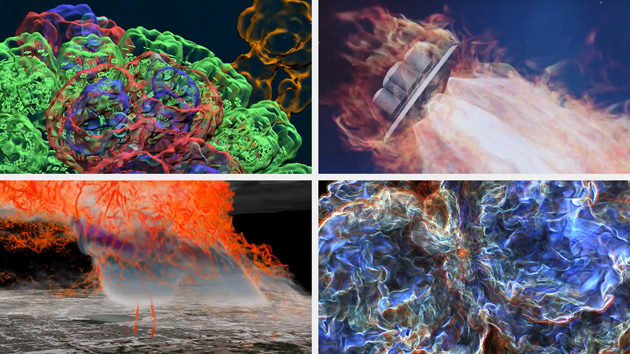

High-Performance Computing (HPC) Acceleration

Modern HPC data centers are crucial for solving key scientific and engineering challenges. NVIDIA Data Center GPUs transform data centers, delivering breakthrough performance with reduced networking overhead, resulting in 5X–10X cost savings.

NVIDIA Blackwell Ultra Delivers up to 50x Better Performance and 35x Lower Cost for Agentic AI

Built to accelerate the next generation of agentic AI, NVIDIA Blackwell Ultra delivers breakthrough inference performance with dramatically lower cost. Cloud providers such as Microsoft, CoreWeave, and Oracle Cloud Infrastructure are deploying NVIDIA GB300 NVL72 systems at scale for low-latency and long-context use cases, such as agentic coding and coding assistants.

This is enabled by deep co-design across NVIDIA Blackwell, NVLink™, and NVLink Switch for scale-out; NVFP4 for low-precision accuracy; and NVIDIA Dynamo and TensorRT™-LLM for speed and flexibility, along with development in community frameworks such as SGLang and vLLM.

Deep Learning Product Performance Resources

NVIDIA Data Center Deep Learning Product Performance FAQs

NVIDIA inference cost per million tokens has improved substantially across generations. According to SemiAnalysis InferenceX benchmarks (Q1 2026), NVIDIA Blackwell Ultra (GB300 NVL72) delivers up to 50x higher throughput per megawatt and up to 35x lower cost per token than NVIDIA Hopper for low-latency agentic workloads, a result of hardware-software co-design. Software optimization also drives continuous improvement: GB200 token output improved 4x in three months, and because the hardware is unchanged, cost per token fell proportionally.

In MLPerf Inference v6.0 (April 2026), systems powered by NVIDIA Blackwell Ultra GPUs (GB300 NVL72) delivered the highest throughput across the widest range of models and scenarios. On DeepSeek-R1, GB300 NVL72 delivered 2.5 million tokens per second, up to 2.7x higher token throughput than the GB300 NVL72 debut submissions six months earlier. The gain came from NVIDIA TensorRT™-LLM software updates.

NVIDIA Blackwell B200 achieves up to 60,000 tokens per second per GPU on GPT-OSS-120B with the latest TensorRT-LLM stack, according to SemiAnalysis InferenceX benchmarks as of April 2026. That is roughly a 4x throughput improvement over H200 with TensorRT-LLM. At this throughput, NVIDIA Blackwell B200 achieves $0.02 per million tokens on the same model using TensorRT-LLM.
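The link between per-GPU throughput and cost per million tokens can be sketched with simple arithmetic. The snippet below is an illustrative sketch, not the benchmark's cost model: the function names are hypothetical, it assumes a single GPU running at steady throughput with a flat hourly cost, and the hourly figure it derives is only what the two rounded numbers above imply, not a published price.

```python
def cost_per_million_tokens(tokens_per_second: float, gpu_cost_per_hour: float) -> float:
    """Dollar cost to generate one million tokens on one GPU at steady throughput."""
    seconds_per_million = 1_000_000 / tokens_per_second
    return gpu_cost_per_hour * seconds_per_million / 3600


def implied_gpu_cost_per_hour(tokens_per_second: float, cost_per_million: float) -> float:
    """Hourly GPU cost implied by a throughput and a cost-per-million-tokens figure."""
    tokens_per_hour = tokens_per_second * 3600
    return cost_per_million * tokens_per_hour / 1_000_000


# Figures quoted in the text: 60,000 tokens/s per GPU and $0.02 per million tokens.
# The resulting hourly cost is an inference from rounded numbers (assumption).
hourly = implied_gpu_cost_per_hour(60_000, 0.02)
print(f"Implied GPU cost: ${hourly:.2f} per hour")

# Round trip: the implied hourly cost reproduces the quoted cost per million tokens.
print(f"Cost per million tokens: ${cost_per_million_tokens(60_000, hourly):.2f}")
```

Under these assumptions, doubling throughput at the same hourly cost halves the cost per million tokens, which is why throughput gains translate directly into lower token cost.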

NVIDIA's TensorRT-LLM and Dynamo software stack delivers continuous inference cost improvements without hardware changes. NVIDIA Blackwell B200 cost per million tokens dropped from $0.11 at launch to $0.02 on GPT-OSS-120B within two months, according to SemiAnalysis InferenceX benchmarks as of April 2026. That is roughly a 5x improvement from software alone. Each TensorRT-LLM release typically delivers throughput gains through kernel fusion, quantization improvements, and scheduling optimizations.
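The improvement factor can be checked from the two quoted prices. This is a minimal sketch with hypothetical helper names; it assumes the hardware cost stays fixed, so that cost per token scales inversely with throughput. Because the published prices are rounded to the cent, the ratio computed from them is approximate and is consistent with the "roughly 5x" stated above.

```python
def improvement_factor(cost_before: float, cost_after: float) -> float:
    """How many times cheaper the later cost is than the earlier one."""
    return cost_before / cost_after


def cost_after_speedup(cost_before: float, speedup: float) -> float:
    """Cost per token after a throughput speedup, assuming fixed hardware cost."""
    return cost_before / speedup


# Quoted figures: $0.11 at launch, $0.02 two months later (both rounded).
factor = improvement_factor(0.11, 0.02)
print(f"Improvement from rounded prices: {factor:.1f}x")

# Conversely, a 5x throughput gain applied to the launch price:
print(f"Launch price after a 5x speedup: ${cost_after_speedup(0.11, 5.0):.3f}")
```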

Explore software containers, models, Jupyter notebooks, and documentation.