This week’s Spotlight is on Valerie Halyo, assistant professor of physics at Princeton University.

Researchers in the field of high energy physics, such as Valerie, are exploring the most fundamental questions about the nature of the universe, looking for the elementary particles that constitute matter and its interactions.

One of Valerie’s goals is to extend the physics accessible at the Large Hadron Collider (LHC) at CERN in Geneva, Switzerland, to cover a larger phase space of topologies that include long-lived particles. This research goes hand in hand with new ideas for enhancing the “trigger” performance at the LHC.

(In particle physics, a trigger is a system that rapidly decides which events in a particle detector to keep when only a small fraction of the total can be recorded.)

NVIDIA: Valerie, what are some of the important science questions in the study of the universe?

Valerie: Some of the key questions being explored are:

- What is the dark matter that keeps galaxies together throughout the universe?

- What is the origin of the large asymmetry between matter and anti-matter in the universe?

- Why is gravity so weak?

- Are the Standard Model particles all fundamental?

- Are there more forces and can they be unified?

- Last but not least, is the scalar discovered at the LHC on July 4, 2012 the Standard Model Higgs, or a different Higgs boson responsible for electroweak symmetry breaking and fermion masses? Surprises are still possible, and the hunt for rare decays and processes is going on in parallel.

It’s exciting to be working in the area of high-energy physics because answers to these questions and many more might be hidden in the multi TeV (tera-electron volt) region accessible by the Large Hadron Collider.

NVIDIA: In layman’s terms, what is the Large Hadron Collider (LHC)?

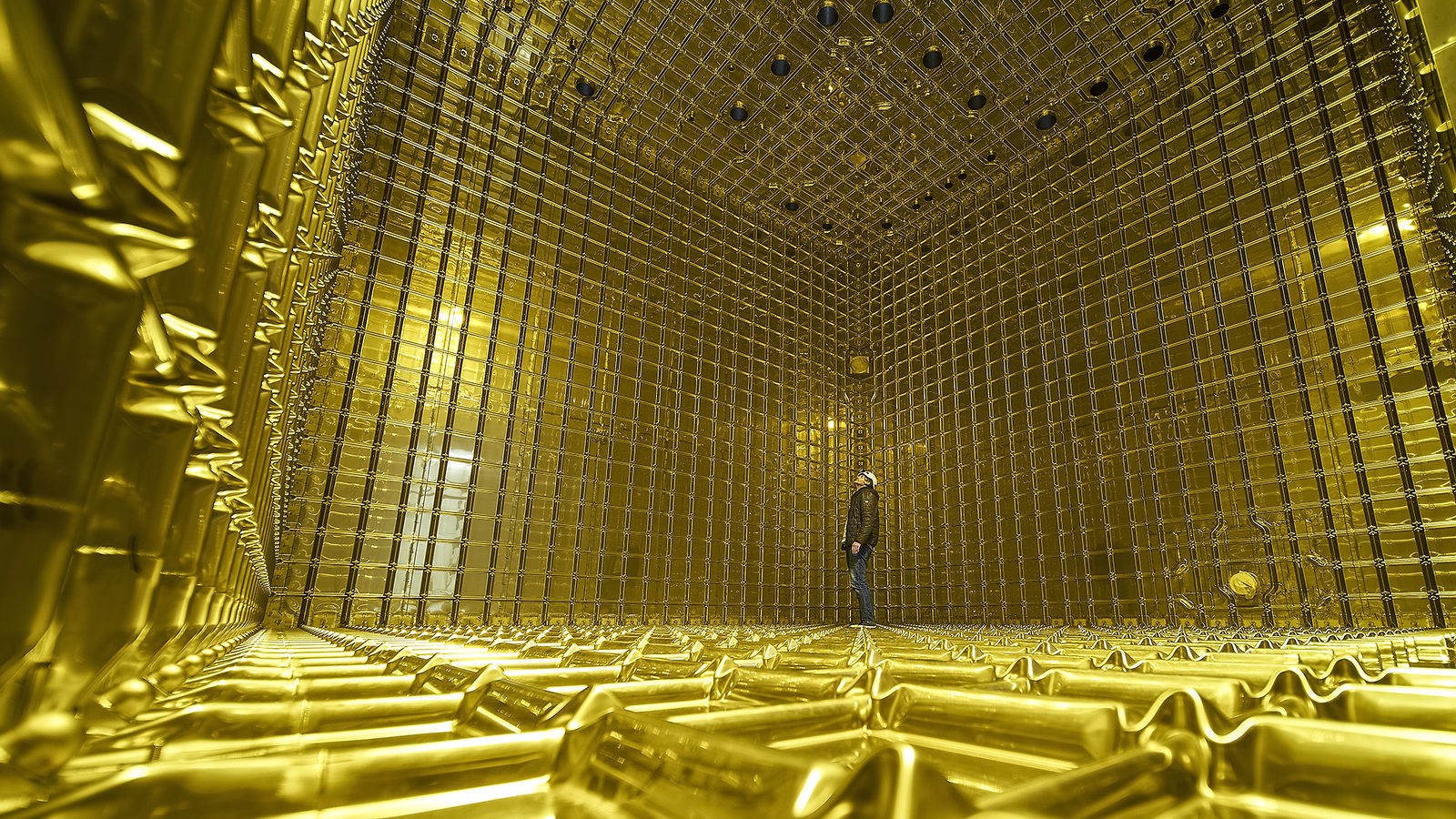

Valerie: The LHC is the world’s largest and most powerful particle accelerator. It first started up on September 10, 2008 and, per the CERN website, remains the latest addition to CERN’s accelerator complex. It consists of a 27-kilometer ring of superconducting magnets with a number of accelerating structures that boost the energy of the particles along the way to a record energy of 8 TeV.

Inside the accelerator, two high-energy proton beams travel in opposite directions close to the speed of light before they are made to collide at four locations around the accelerator ring. These locations correspond to the positions of four particle detectors – ATLAS, CMS, ALICE and LHCb.

These detectors record the debris from the collisions and search it for possible new particles or interactions. The LHC is the best microscope available, able to probe new physics at 10⁻¹⁹ m, and is also the best “time machine,” creating conditions similar to those a picosecond after the Big Bang, at temperatures 8 orders of magnitude higher than that of the core of the Sun.

Hence, the LHC is a discovery machine that provides us the opportunity to explore the physics hidden in the multi TeV region.

NVIDIA: How can GPUs accelerate research in this field?

Valerie: The Compact Muon Solenoid (CMS), one of the general-purpose detectors at the LHC, features a two-level trigger system to reduce the 40 MHz beam crossing data rate to approximately 100 Hz.

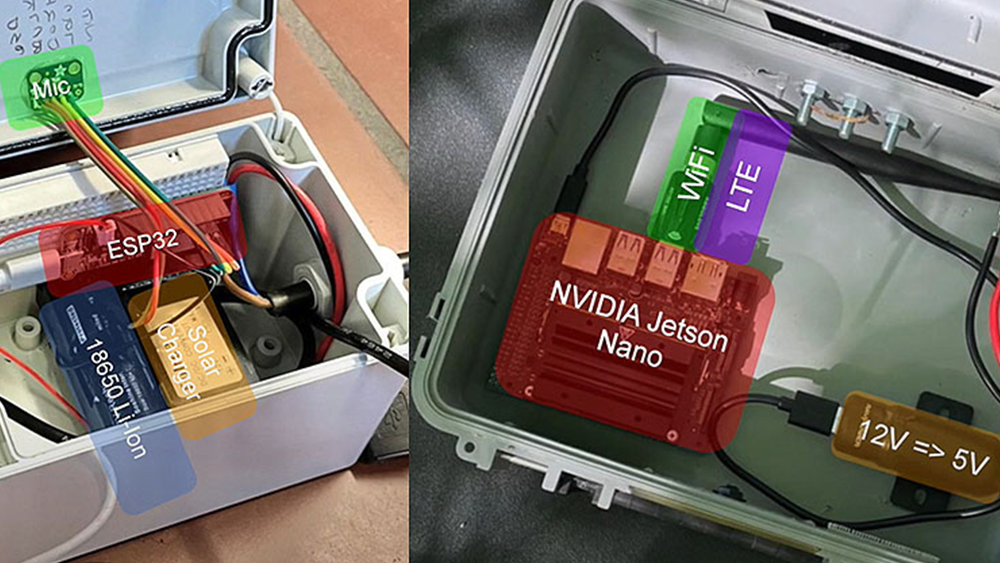

The Level-1 trigger is based on custom hardware and designed to reduce the rate to about 100 kHz, corresponding to 100 GB/s, assuming an average event size of 1 MB.
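The rate figures quoted here can be checked with a few lines of arithmetic. The sketch below is only an illustration: the function and constant names are hypothetical, and it assumes a decimal megabyte (10^6 bytes) for the average event size.

```python
# Rates and event size as quoted in the interview.
BEAM_CROSSING_HZ = 40e6    # 40 MHz beam crossing rate
LEVEL1_OUTPUT_HZ = 100e3   # about 100 kHz after the Level-1 trigger
FINAL_OUTPUT_HZ = 100.0    # approximately 100 Hz after the second trigger level
EVENT_SIZE_BYTES = 1e6     # average event size of 1 MB (decimal megabyte assumed)


def rejection_factor(rate_in_hz, rate_out_hz):
    """How many input events there are for each event that is kept."""
    return rate_in_hz / rate_out_hz


def bandwidth_gb_per_s(rate_hz, event_size_bytes):
    """Data volume per second, in gigabytes (10^9 bytes)."""
    return rate_hz * event_size_bytes / 1e9


level1_rejection = rejection_factor(BEAM_CROSSING_HZ, LEVEL1_OUTPUT_HZ)
second_level_rejection = rejection_factor(LEVEL1_OUTPUT_HZ, FINAL_OUTPUT_HZ)
level1_bandwidth = bandwidth_gb_per_s(LEVEL1_OUTPUT_HZ, EVENT_SIZE_BYTES)

print(f"Level-1 keeps about 1 crossing in {level1_rejection:.0f}")
print(f"Second level keeps about 1 event in {second_level_rejection:.0f}")
print(f"Level-1 output bandwidth: about {level1_bandwidth:.0f} GB/s")
```

With these inputs the Level-1 stage accounts for a factor of roughly 400 and the second level for a further factor of roughly 1000, which together give the overall 40 MHz to 100 Hz reduction.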

The High Level Trigger (HLT) is purely software-based and must achieve the remaining rate reduction by executing sophisticated offline-quality algorithms. GPUs can easily be integrated in the HLT server farm and allow, for example, simultaneous processing of all the data recorded by the silicon tracker as particles traverse the tracker system.

The GPUs will process the data at an input rate of 100 kHz and output all the necessary information about the speed and direction of the particles, for up to 5000 particles, in less than 60 msec. That multiplicity is more than expected even at design luminosity. For the first time, this will allow not only the identification of particles emanating from the interaction point but also the reconstruction of trajectories for long-lived particles. It will enhance and extend the physics reach by improving the set of events selected and recorded by the trigger.
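One way to get a feel for these numbers is Little's law (events in flight = arrival rate × time in the system). The sketch below reads the 60 msec figure as a per-event latency bound and assumes steady state; both are assumptions made for illustration, not statements from the interview, and the names are hypothetical. The result applies to the processing farm as a whole, not to a single GPU.

```python
# Figures quoted in the interview; how they are combined here is an assumption.
INPUT_RATE_HZ = 100_000          # 100 kHz input rate
MAX_LATENCY_MS = 60              # "less than 60 msec", read as per-event latency
MAX_PARTICLES_PER_EVENT = 5000   # "up to 5000 particles"


def events_in_flight(rate_hz, latency_ms):
    """Little's law, N = rate * latency, in integer arithmetic (Hz * ms / 1000)."""
    return rate_hz * latency_ms // 1000


def peak_trajectories_per_second(rate_hz, particles_per_event):
    """Upper bound on trajectories per second if every event hit the maximum."""
    return rate_hz * particles_per_event


in_flight = events_in_flight(INPUT_RATE_HZ, MAX_LATENCY_MS)
peak_tracks = peak_trajectories_per_second(INPUT_RATE_HZ, MAX_PARTICLES_PER_EVENT)

print(f"events being processed at once: up to {in_flight}")
print(f"worst-case trajectories per second: {peak_tracks:.1e}")
```

Under these assumptions, several thousand events would be in processing at any moment, which is one reason per-event parallelism alone may not be enough and parallelism within each event matters as well.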

While both CPUs and GPUs are parallel processors, CPUs are more general purpose and designed to handle a wide range of workloads like running an operating system, web browsers, word processors, etc. GPUs are massively parallel processors that are more specialized, and hence efficient, for compute-intensive workloads.

NVIDIA: When did you first realize that GPUs were interesting for high-level triggering?

Valerie: The Trigger and Data Acquisition (DAQ) systems in a modern collider experiment, such as the ATLAS and CMS experiments at the LHC, provide essential preliminary online analysis of the raw data for the purpose of filtering potentially interesting events into the data storage system.

The trigger is essentially the “brain of the experiment.” Wrong decisions may cause certain topologies to evade detection forever. Three things led me to revisit the software-based trigger system: the significant role of the trigger, the window of opportunity offered by the two years of the LHC shutdown, and the responsibility to enhance or even extend the physics reach at the LHC after the shutdown.

By its very nature of being a computing system, the HLT relies on technologies that have evolved extremely rapidly. It is no longer possible to rely solely on increases in processor clock speed as a means for extracting additional computational power from existing architectures.

However, it is evident that innovations in parallel processing could accelerate the algorithms used in the trigger system and might yield significant impact.

One of the most interesting technologies, and one that continues to see exponential growth, is the modern GPU. GPUs make a massive number of parallel execution units highly programmable for general-purpose computing through a supported API (CUDA C), so it was clear that combining them with the flexibility of the CMS HLT software and hardware computing farm could enhance the HLT performance and potentially extend the reach of the physics at the LHC.

NVIDIA: What approaches did you find the most useful for CUDA development?

Valerie: Our work includes a combination of fundamental algorithm development and GPU implementation for high performance. Algorithm prototypes were often tested using sequential implementations since that allowed for quick testing of new ideas before spending the time required to optimize the GPU implementations.

In terms of GPU performance, it’s a continual process of implementing, understanding the performance in terms of the capability of the hardware, and then refactoring to alleviate bottlenecks and maximize performance. Understanding the performance and then refactoring the code depends on knowledge of the hardware and programming model, so documentation about details of the architecture and tools like the Profiler are very useful.

As a specific example, the presentation at GTC 2013 by Lars Nyland and Stephen Jones on atomic memory operations was especially useful in understanding the performance of some of our code. It was a particularly good session and I’d recommend watching it online if you weren’t able to attend.

NVIDIA: Describe the system you are running on.

Valerie: The performance of the algorithms was evaluated using Intel multicore CPUs and NVIDIA Tesla C2075 and K20c GPUs. CPU code was written in C/C++ and OpenMP, while the GPU code uses CUDA C.

NVIDIA: Is your work in the research stage? What’s the next step?

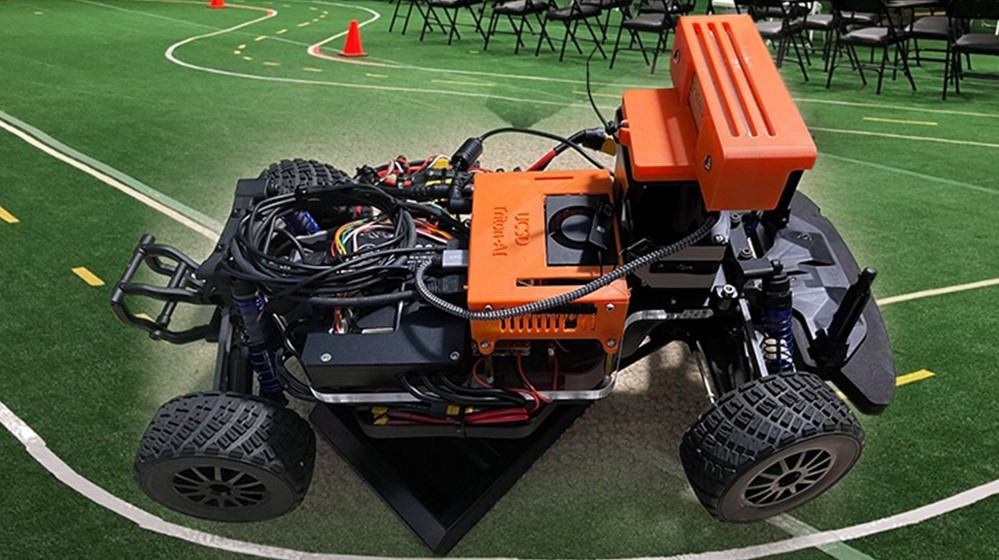

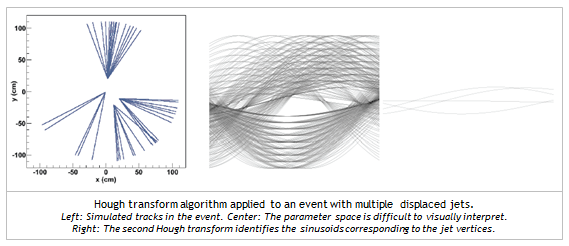

Valerie: The work is still in the research stage, with preliminary results from testing the track reconstruction and the trigger algorithms, which use the Hough transform, on simulated data and on data taken at the LHC by the CMS detector. Now that we have demonstrated proof of principle using input data from a simple detector simulation, our goal is to methodically quantify and optimize the performance of the track reconstruction. The newly proposed trigger algorithms aim to select long-lived particles or even black holes decaying in the silicon tracker system, using input data recorded by the CMS detector. In principle, they can also be implemented in the ATLAS detector.
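For readers unfamiliar with the Hough transform, here is a minimal textbook sketch of the idea, not the algorithm used in this work. It finds a straight line through 2D points by letting each point vote for every line that could pass through it. The straight-line model, the bin counts, and all names are assumptions made for illustration. Charged particles in a magnetic field follow curved paths, so a real tracker would use a different parametrization.

```python
import math


def hough_line_votes(hits, n_theta=180, n_rho=200, rho_max=10.0):
    """Fill a (theta, rho) accumulator: each hit votes for every line through it.

    A line is described by rho = x*cos(theta) + y*sin(theta).
    """
    accumulator = [[0] * n_rho for _ in range(n_theta)]
    for x, y in hits:
        for i_theta in range(n_theta):
            theta = math.pi * i_theta / n_theta
            rho = x * math.cos(theta) + y * math.sin(theta)
            i_rho = int((rho + rho_max) / (2.0 * rho_max) * n_rho)
            if 0 <= i_rho < n_rho:
                accumulator[i_theta][i_rho] += 1
    return accumulator


def best_line(accumulator, rho_max=10.0):
    """Return (votes, theta, rho) for the most-voted bin, using the bin center for rho."""
    n_theta = len(accumulator)
    n_rho = len(accumulator[0])
    votes, i_theta, i_rho = max(
        (accumulator[t][r], t, r) for t in range(n_theta) for r in range(n_rho)
    )
    theta = math.pi * i_theta / n_theta
    rho = (i_rho + 0.5) * (2.0 * rho_max) / n_rho - rho_max
    return votes, theta, rho


# Made-up hits: five on the horizontal line y = 2.05, plus two unrelated ones.
example_hits = [(-4.0, 2.05), (-2.0, 2.05), (0.0, 2.05), (2.0, 2.05), (4.0, 2.05),
                (1.0, -3.0), (-3.0, 6.5)]
votes, theta, rho = best_line(hough_line_votes(example_hits))
print(f"votes={votes}, theta={math.degrees(theta):.1f} deg, rho={rho:.2f}")
```

Each hit's votes are independent of every other hit's, which is what makes this kind of algorithm a natural fit for massively parallel hardware. In a parallel version, many threads may try to increment the same accumulator bin at once, which is where atomic operations such as CUDA's `atomicAdd` typically come in. Whether the work described here uses them in this way is not stated in the interview.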

NVIDIA: Beyond triggering, how do you envision GPUs helping to process or analyze LHC data?

Valerie: Reconstruction of the particle trajectories is essential both during online data taking and offline while we attempt to reprocess data that was already archived and stored for further analysis, so faster reconstructions improve the turnaround of new results.

In addition, experiments have to deal with massive production of Monte Carlo data each year. Billions of events have to be generated to match the running conditions of the collisions and the detector at the time the data was taken. Improvements in the speed of reconstruction allow higher production rates and more efficient use of the computing resources, while also saving money.

NVIDIA: What advice would you offer others in your field looking at CUDA?

Valerie: Even if you aren’t directly developing and optimizing code, it’s clear that the future is massively parallel processors, and having a basic understanding of processor architecture and parallel computing is going to be important.

A great algorithm design isn’t very useful if it can’t be implemented in an efficient way on modern parallel processors.
