Case Study

How Justt Scaled Chargeback Document Extraction Using NVIDIA Nemotron Parse

About Justt: Justt is a leading AI-native chargeback management platform that autonomously builds and submits tailored dispute responses for every illegitimate chargeback. By combining large-scale data enrichment, dynamic evidence generation, and continuous learning, Justt enables merchants to recover more revenue at scale, without manual effort.

Challenge

What Justt needed to do

Justt set out to modernize its unstructured data extraction pipeline to deliver high-fidelity results for customers interacting directly with the platform. Because users depend on real-time outputs to manage chargebacks, even small inaccuracies from legacy systems required costly manual corrections and disrupted workflows. Traditional CPU-based approaches struggled to accurately interpret complex document layouts and semantic relationships at scale. As document volumes grew, the system hit strict latency ceilings and rising infrastructure costs, making it clear that a fundamental architectural shift was required to achieve both accuracy and efficiency.

Constraints

Infrastructure: The existing pipeline was built on CPU-based OCR workflows, primarily using a community library. While effective for simple documents, this approach struggled with complex layouts and dense semantic relationships, limiting both throughput and extraction accuracy. Supporting modern deep learning–based inference required a transition to a GPU-accelerated architecture.

Performance and latency: Justt’s customers interact directly with the platform and expect immediate results. To support time-sensitive chargeback workflows, the extraction pipeline needed to deliver near-real-time performance while operating within strict end-to-end latency limits.

Cost efficiency: The system processes large volumes of documents daily, making cost per document a critical factor. Although GPU acceleration offered significant performance benefits, the solution had to maximize utilization and efficiency to meet the economic constraints of a production-scale, enterprise deployment.

System architecture

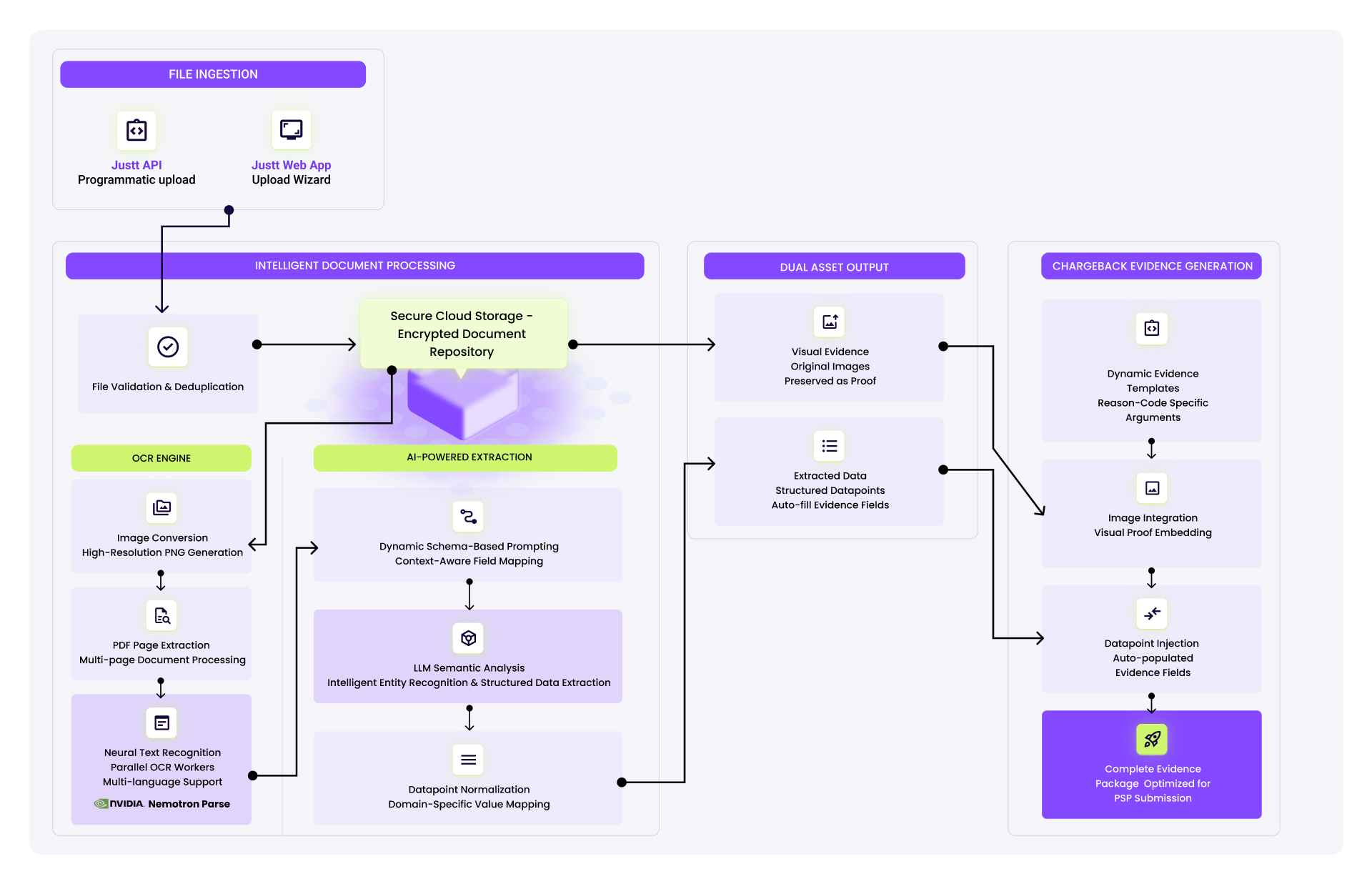

To meet the performance, accuracy, and cost constraints, Justt designed a GPU-accelerated document processing architecture optimized for high-throughput inference. The system integrates Nemotron Parse, part of the NVIDIA Nemotron™ family, to enhance visual document understanding, enabling robust extraction across complex layouts while preserving semantic relationships between fields.

Nemotron Parse standardizes context-aware field mapping across document types, allowing extracted data to be normalized into high-fidelity outputs without manual post-processing. By running inference on GPUs, the architecture achieves low-latency processing while supporting efficient scaling as document volumes increase.

Figure 1. Justt’s intelligent document processing pipeline design includes an OCR engine and AI-powered extraction component for visual and text extraction and analysis.

Architecture overview and data flow

Extraction pipeline

Nemotron Parse is integrated into the extraction pipeline, replacing previous OCR-based solutions and extending visual extraction capabilities to handle complex document structures such as tables, multi-column layouts, and spatially dependent fields.

Inference layer

The model runs on a GPU-accelerated inference layer optimized for low-latency, high-throughput processing. Inference workloads are batched and scheduled to maximize GPU utilization while maintaining predictable end-to-end latency under variable document volumes.
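The batching idea can be illustrated with a minimal sketch. It is not Justt's scheduler: the function name `make_batches`, the page identifiers, and the batch size of 4 are assumptions chosen for illustration. It only shows how pending pages might be grouped into fixed-size batches before being sent to a GPU-backed model.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def make_batches(items: Sequence[T], max_batch_size: int) -> Iterator[List[T]]:
    """Yield consecutive batches of at most max_batch_size items, preserving order."""
    if max_batch_size < 1:
        raise ValueError("max_batch_size must be at least 1")
    for start in range(0, len(items), max_batch_size):
        yield list(items[start:start + max_batch_size])


# Hypothetical page identifiers waiting for inference.
pending_pages = [f"page-{i}" for i in range(10)]

# Hypothetical batch size; a real value would depend on GPU memory and latency targets.
batches = list(make_batches(pending_pages, max_batch_size=4))
```

A size-only policy like this favors throughput. A scheduler that must also keep latency predictable would typically add a maximum wait time so that a partially filled batch is still dispatched when traffic is low.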

Field mapping layer

A context-aware mapping component consumes the semantically rich outputs produced by Nemotron Parse and normalizes extracted fields into a consistent schema. This layer was adapted to accommodate the model’s output format, applying schema versioning and confidence thresholds to ensure stable downstream integration.
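As a rough illustration of schema versioning combined with a confidence threshold, the sketch below normalizes raw extracted fields into a versioned record. Everything in it is hypothetical: the alias table, the field names, the `0.80` cutoff, the `"1.0"` version tag, and the assumption that each field arrives with a confidence score. The source does not describe how Justt's mapping layer represents any of these.

```python
from dataclasses import dataclass
from typing import Dict, List

SCHEMA_VERSION = "1.0"        # hypothetical version tag
CONFIDENCE_THRESHOLD = 0.80   # hypothetical cutoff

# Hypothetical mapping from raw document labels to canonical field names.
FIELD_ALIASES = {
    "total": "amount",
    "amount due": "amount",
    "guest name": "customer_name",
    "check-in": "check_in_date",
}


@dataclass
class RawField:
    label: str         # label as it appears in the document
    value: str         # extracted text
    confidence: float  # assumed to be supplied by an upstream step


def normalize(raw_fields: List[RawField]) -> Dict[str, object]:
    """Map raw fields onto the canonical schema and flag low-confidence ones."""
    fields: Dict[str, str] = {}
    needs_review: List[str] = []
    for raw in raw_fields:
        name = FIELD_ALIASES.get(raw.label.strip().lower())
        if name is None:
            continue  # label is not part of the schema, so it is ignored
        if raw.confidence < CONFIDENCE_THRESHOLD:
            needs_review.append(name)  # kept out of the output, flagged instead
            continue
        fields[name] = raw.value.strip()
    return {
        "schema_version": SCHEMA_VERSION,
        "fields": fields,
        "needs_review": needs_review,
    }


result = normalize([
    RawField("Total", " 120.50 ", 0.97),
    RawField("Guest Name", "A. Smith", 0.55),
    RawField("Fax", "n/a", 0.99),
])
```

Carrying the schema version inside every record lets downstream consumers detect a format change explicitly instead of failing on an unexpected field.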

Application interface

Structured results are returned to downstream services in near real time, enabling interactive customer workflows without manual post-processing. Extraction quality and latency metrics are continuously monitored to enforce service-level objectives.

Integration details

Key design decisions:

Justt's key decisions centered on adapting its intelligent document processing pipeline to support multimodal inputs and outputs, and on how the model was deployed.

Pipeline adaptation: Integrating Nemotron Parse required modifying multiple components in the extraction pipeline to support the model’s input and

Model serving architecture: Due to hardware requirements for GPU-accelerated inference, Nemotron Parse was deployed as a dedicated model-serving service on AWS, decoupled from the core platform application. This separation enabled independent scaling of inference workloads without impacting application-layer performance.

Evaluation and quality measurement: The evaluation framework was updated to reflect the new architecture, introducing revised metrics to measure extraction accuracy and error rates produced by the GPU-accelerated pipeline.
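The case study does not define its revised metrics. One plausible starting point, shown here purely as an assumption, is a field-level error rate: the share of ground-truth fields that the pipeline either misses or extracts with a different value. The function name and the toy records are illustrative.

```python
from typing import Dict, List


def field_error_rate(
    predictions: List[Dict[str, str]],
    references: List[Dict[str, str]],
) -> float:
    """Fraction of reference fields whose predicted value is missing or different."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    total = 0
    errors = 0
    for predicted, expected in zip(predictions, references):
        for name, expected_value in expected.items():
            total += 1
            if predicted.get(name) != expected_value:
                errors += 1
    return errors / total if total else 0.0


# Toy evaluation set: two documents, two labeled fields each (made-up values).
references = [
    {"amount": "10.00", "date": "2024-01-05"},
    {"amount": "7.25", "date": "2024-02-01"},
]
predictions = [
    {"amount": "10.00", "date": "2024-01-05"},
    {"amount": "7.25"},  # the date field was not extracted
]
rate = field_error_rate(predictions, references)
```

Exact string matching is the strictest choice. A production metric might instead compare normalized values, so that formatting differences are not counted as extraction errors.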

How Nemotron Parse was integrated

Inference trigger and preprocessing: Nemotron Parse is invoked as part of the document ingestion workflow. Upon customer file upload, documents are converted into page-level image representations and passed to the model for inference.

Post-processing and field alignment: The model’s outputs are forwarded to an LLM-based processing layer that performs domain-specific field alignment, translating extracted content into Justt’s standardized schema.
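The flow described above (upload, page-level images, model inference, LLM-based alignment) can be sketched as a small orchestration function. The three stages are injected as callables because the source does not specify the rasterizer, the model client, or the alignment prompt. All names here, including `process_upload` and the stub implementations, are hypothetical stand-ins and not real APIs.

```python
from typing import Callable, Dict, List

# Hypothetical stage signatures; the real components are not described in the source.
Rasterizer = Callable[[bytes], List[bytes]]           # file bytes -> one image per page
PageParser = Callable[[bytes], str]                   # page image -> parsed page content
FieldAligner = Callable[[List[str]], Dict[str, str]]  # parsed pages -> schema fields


def process_upload(
    file_bytes: bytes,
    rasterize: Rasterizer,
    parse_page: PageParser,
    align_fields: FieldAligner,
) -> Dict[str, str]:
    """Run an uploaded document through the three stages in order."""
    page_images = rasterize(file_bytes)
    parsed_pages = [parse_page(image) for image in page_images]
    return align_fields(parsed_pages)


# Stub stages so the sketch runs without any model or rendering library.
def fake_rasterize(data: bytes) -> List[bytes]:
    return data.split(b"\f")  # pretend a form feed separates pages


def fake_parse(image: bytes) -> str:
    return image.decode("utf-8")  # pretend the "image" is already text


def fake_align(pages: List[str]) -> Dict[str, str]:
    return {"page_count": str(len(pages)), "first_page": pages[0]}


result = process_upload(b"invoice 42\freceipt", fake_rasterize, fake_parse, fake_align)
```

Keeping the stages behind plain function signatures mirrors the decoupling described in the design decisions: the model-serving call can be replaced or scaled independently of the surrounding workflow.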

What didn’t work initially

Initial installation required dedicated time early on to set up a new environment and tailor the installation to the relevant hardware profile. Beyond that, swapping in Nemotron Parse was straightforward and did not require additional optimization or customization.

Results

Measured outcomes

Transitioning from legacy OCR-based extraction to Nemotron Parse resulted in a 25% reduction in extraction error rate across evaluated document sets. The improved accuracy reduced the need for manual corrections during document uploads and increased the reliability of downstream chargeback evidence generation.
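To make the arithmetic concrete, the sketch below assumes the 25% figure is a relative reduction, meaning the new error rate is three quarters of the old one. The baseline and improved rates are illustrative numbers only, not Justt's measurements, which the source does not publish.

```python
def relative_reduction(baseline_rate: float, new_rate: float) -> float:
    """Relative drop in error rate: (baseline - new) / baseline."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (baseline_rate - new_rate) / baseline_rate


# Illustrative values only: 8 errors per 100 fields before, 6 per 100 after.
baseline = 0.08
improved = 0.06
reduction = relative_reduction(baseline, improved)  # approximately 0.25
```

Under this reading, a 25% reduction says nothing about the absolute error rate, so the same headline figure could describe a drop from 8% to 6% or from 2% to 1.5%.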

Customers such as HEI Hotels & Resorts, a leading hospitality operator, use the platform powered by this architecture to process chargebacks efficiently across multiple properties while improving recovery rates.

Before and after comparisons

25% fewer manual corrections: Customers spend significantly less time correcting extracted data during the upload process, enabling faster case preparation and reduced operational overhead.

Higher-quality evidence generation: By minimizing false positives and human error at the extraction stage, the system produces cleaner, more reliable data for chargeback defense workflows, contributing to improved revenue recovery outcomes.

Lessons Learned

Strategy for success

Model selection matters: Smaller, domain-optimized models such as Nemotron Parse can significantly extend document extraction capabilities while maintaining acceptable latency and cost profiles in production environments.

Common pitfall to avoid

Skipping output validation: Without robust validation and confidence checks, extraction errors can propagate downstream and negatively affect business outcomes, even when model-level accuracy improves.
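A minimal sketch of such validation, using only the Python standard library, is shown below. The field names `amount` and `transaction_date` and the specific rules are assumptions for illustration. They are not taken from Justt's schema.

```python
from datetime import date
from decimal import Decimal, InvalidOperation
from typing import Dict, List


def validate_fields(fields: Dict[str, str]) -> List[str]:
    """Return a list of human-readable problems; an empty list means the record passed."""
    problems: List[str] = []

    amount = fields.get("amount")
    if amount is None:
        problems.append("amount: missing")
    else:
        try:
            if Decimal(amount) <= 0:
                problems.append("amount: must be positive")
        except InvalidOperation:
            problems.append("amount: not a number")

    raw_date = fields.get("transaction_date")
    if raw_date is None:
        problems.append("transaction_date: missing")
    else:
        try:
            if date.fromisoformat(raw_date) > date.today():
                problems.append("transaction_date: in the future")
        except ValueError:
            problems.append("transaction_date: not an ISO date")

    return problems


clean = validate_fields({"amount": "120.50", "transaction_date": "2024-01-05"})
dirty = validate_fields({"amount": "12,50", "transaction_date": "01/05/2024"})
```

Returning the full list of problems, rather than stopping at the first one, lets a record be routed to review with every issue visible at once.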

A tip for teams building similar systems

Consolidate extraction logic: Investing in advanced models and unified evaluation frameworks can reduce long-term maintenance effort and eliminate brittle, siloed extraction pipelines.

Related tutorials and resources

For developers facing a similar challenge, the following resources provide step-by-step guidance and deeper technical detail on building and deploying document understanding pipelines.

How to Build a Document Processing Pipeline for RAG

NVIDIA Nemotron

Step-by-step instructions on how to set up a multimodal intelligent document processing pipeline using RAG.

Nemotron Parse

NVIDIA Nemotron

Visit the documentation for an overview of how to use the specialized VLM Nemotron Parse.

Use the Nemotron Parse Model

NVIDIA Nemotron

Available on Hugging Face or as an NVIDIA NIM™ API on build.nvidia.com.

Watch a Tutorial on Building a Document Processing Pipeline

NVIDIA Nemotron

Live technical walkthrough on using Nemotron Parse to process complex PDFs using RAG.

Build With the NVIDIA Blueprint for Enterprise RAG

NVIDIA Blueprint

Deploy an Enterprise RAG pipeline with accelerated microservices.

Acknowledgements

Many thanks to the teams at Justt and NVIDIA—especially Roenen Ben Ami, Dor Bank, and Michal Well at Justt, and Lior Cohen and Gal Mizan at NVIDIA—for their help in writing and reviewing this case study.