Marine biologists have a new AI tool for monitoring and protecting coral reefs. The project, a collaboration between Google and Australia’s Commonwealth Scientific and Industrial Research Organisation (CSIRO), employs computer vision detection models to pinpoint damaging outbreaks of crown-of-thorns starfish (COTS) through a live camera feed. Keeping a closer eye on reefs helps scientists respond quickly to growing populations and protect the valuable Great Barrier Reef ecosystem.

Despite covering less than 1% of the vast ocean floor, coral reefs support about 25% of sea species including fish, invertebrates, and marine mammals. When healthy, these productive marine environments provide commercial and subsistence fishing and income for tourism and recreational businesses. They also protect coastal communities during storm surges and are a rich source of antiviral compounds for drug discovery research.

Assemblages of COTS are found throughout the Indo-Pacific region and feed on coral polyps—the living part of hard coral reefs. They typically occur in low numbers, posing little harm to the ecosystem. However, as outbreaks increase in frequency—in part due to nutrient run-off and a decline in natural predators—they are causing significant damage.

Healthy reefs take about 10 to 20 years to recover from COTS outbreaks. An outbreak is defined as 30 or more adult starfish per 10,000 square meters, or any density at which the starfish consume coral faster than it can grow. Degraded reefs facing environmental stressors such as climate change, pollution, and destructive fishing practices are less likely to recover, resulting in irreversible damage, diminished coral cover, and biodiversity loss.

Scientists control outbreaks through direct interventions. Two common approaches involve injecting the starfish with bile salts or removing populations from the water. But traditional reef surveying, which consists of towing a snorkeler behind a boat for visual identification, is time-consuming, labor-intensive, and limited in accuracy.

According to the project’s TensorFlow post, “CSIRO developed an edge ML platform (built on top of the NVIDIA Jetson AGX Xavier) that can analyze underwater image sequences and map out detections in near real time.” The authors, Megha Malpani, an AI/ML product manager at Google, and Ard Oerlemans, a Google software engineer, are part of a team of researchers working with CSIRO to build the most accurate and performant models.

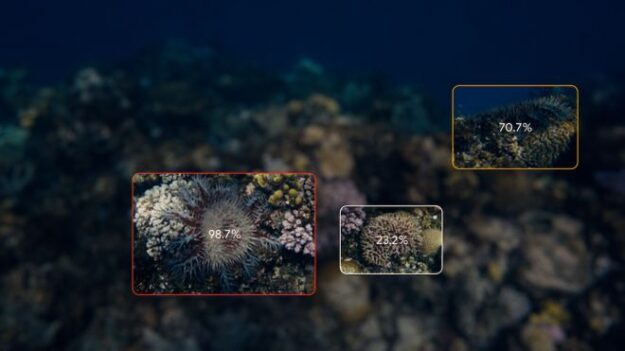

Employing an annotated dataset from CSIRO, the researchers developed an accurate object detection model that uses a live camera feed rather than a snorkeler to detect the starfish.

The model processes images at more than 10 frames per second and stays accurate across a variety of ocean conditions, including differences in lighting, visibility, depth, viewpoint, coral habitat, and the number of COTS present.

According to the post, when a starfish is detected, it is assigned a unique tracking ID that links its detections across video frames over time. “We link detections in subsequent frames to each other by first using optical flow to predict where the starfish will be in the next frame, and then matching detections to predictions based on their Intersection over Union (IoU) score,” Malpani and Oerlemans write.
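The matching step described in the quote can be illustrated with a minimal Python sketch. It is not the team's implementation: it assumes the optical-flow step has already produced a predicted box for each existing track, and the greedy matching strategy, the `min_iou` threshold of 0.3, and the names `iou` and `match_detections` are all hypothetical choices made for illustration.

```python
from typing import Dict, List, Tuple

# Axis-aligned box as (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match_detections(
    predicted: Dict[int, Box],  # track ID -> box predicted for this frame
    detections: List[Box],      # boxes the detector found in this frame
    min_iou: float = 0.3,       # assumed threshold, not from the post
) -> Dict[int, int]:
    """Greedily pair tracks with detections, best IoU first.

    Returns a mapping of track ID -> index into `detections`.
    Unmatched detections would start new tracks (not shown here).
    """
    candidates = sorted(
        (
            (iou(box, det), track_id, det_idx)
            for track_id, box in predicted.items()
            for det_idx, det in enumerate(detections)
        ),
        reverse=True,
    )
    matches: Dict[int, int] = {}
    used_detections = set()
    for score, track_id, det_idx in candidates:
        if score < min_iou:
            break  # remaining candidates overlap even less
        if track_id in matches or det_idx in used_detections:
            continue
        matches[track_id] = det_idx
        used_detections.add(det_idx)
    return matches
```

For example, with predicted boxes `{7: (0, 0, 10, 10), 8: (20, 20, 30, 30)}` and detections `[(21, 21, 31, 31), (1, 1, 11, 11), (100, 100, 110, 110)]`, track 7 pairs with detection 1 and track 8 with detection 0, while the third detection stays unmatched and would be treated as a newly seen starfish.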

With the ultimate goal of quickly determining the total number of COTS, the team focused on the entire pipeline’s accuracy. The “current 1080p model using TensorFlow TensorRT runs at 11 FPS on the Jetson AGX Xavier, reaching a sequence-based F2 score of 0.80! We additionally trained a 720p model that runs at 22 FPS on the Jetson module, with a sequence-based F2 score of 0.78,” the researchers write.
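For readers unfamiliar with the metric, the F2 score is the F-beta score with beta set to 2, which weights recall more heavily than precision, so a missed starfish costs more than a false alarm. The sketch below shows the standard formula only. The function name `f_beta` and the counts in the usage note are hypothetical, and it does not reproduce how the post tallies matches per sequence.

```python
def f_beta(true_pos: int, false_pos: int, false_neg: int, beta: float = 2.0) -> float:
    """F-beta score from raw counts; beta > 1 favors recall over precision.

    Equivalent to (1 + beta^2) * P * R / (beta^2 * P + R),
    written in terms of counts to avoid dividing by zero twice.
    """
    b2 = beta * beta
    denom = (1 + b2) * true_pos + b2 * false_neg + false_pos
    return (1 + b2) * true_pos / denom if denom else 0.0
```

With made-up counts, `f_beta(8, 4, 1)` (four false alarms, one miss) comes to about 0.83, while `f_beta(8, 1, 4)` (one false alarm, four misses) comes to about 0.70, which shows how the metric penalizes misses more than false alarms.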

According to the post, the project aims to showcase what machine learning and AI can do for large-scale surveillance of ocean habitats.

Their work is open source and available through the crown-of-thorns starfish detection pipeline on GitHub or in Google Colab. The project is part of Google’s Digital Future Initiative with CSIRO.
