In the last episode of CUDACasts, we learned how to install the CUDA Toolkit on Windows. Now we’re going to quickly move on and accelerate code on the GPU. For this episode, we’ll use the CUDA C programming language. However, as I will show in future CUDACasts, there are other CUDA enabled languages, including C++, Fortran, and Python.

The simple code we’ll be writing is a kernel called VectorAdd, which adds two vectors, a and b, in parallel, and stores the results in vector c. You can follow along in the video or download the source code for this episode from Github.

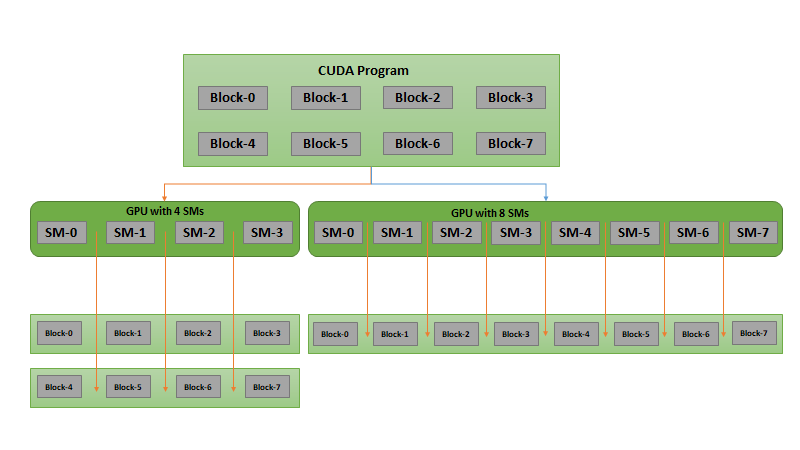

The process for moving VectorAdd from the CPU to the massively parallel GPU follows three simple steps.

- Parallelize the VectorAdd function by converting the serial for loop that adds each pair of elements on the CPU into a parallel kernel that uses an independent GPU thread to add each pair of elements.

- Copy the initialized data from CPU memory to the GPU memory space and the results back.

- Modify the VectorAdd function call to launch the now parallelized kernel on the GPU.

If you’re interested in learning more about CUDA C, you can watch my in-depth Introduction to CUDA C/C++ recorded here. In the next CUDACast, we’ll explore an alternate method for accelerating code using the OpenACC directive based approached.

If you would like to request a topic for a future episode of CUDACasts, or if you have any other feedback, please leave a comment to let us know!